AI-driven 'patient journey' tools mostly aren't.

TL;DR

The 'AI-driven patient journey' category is mostly journey-mapping software with an AI layer bolted on. The AI doesn't change the journey; it changes the dashboard. Below: the two exceptions, and the test that separates them, which is to ask what stops working if the LLM is removed.

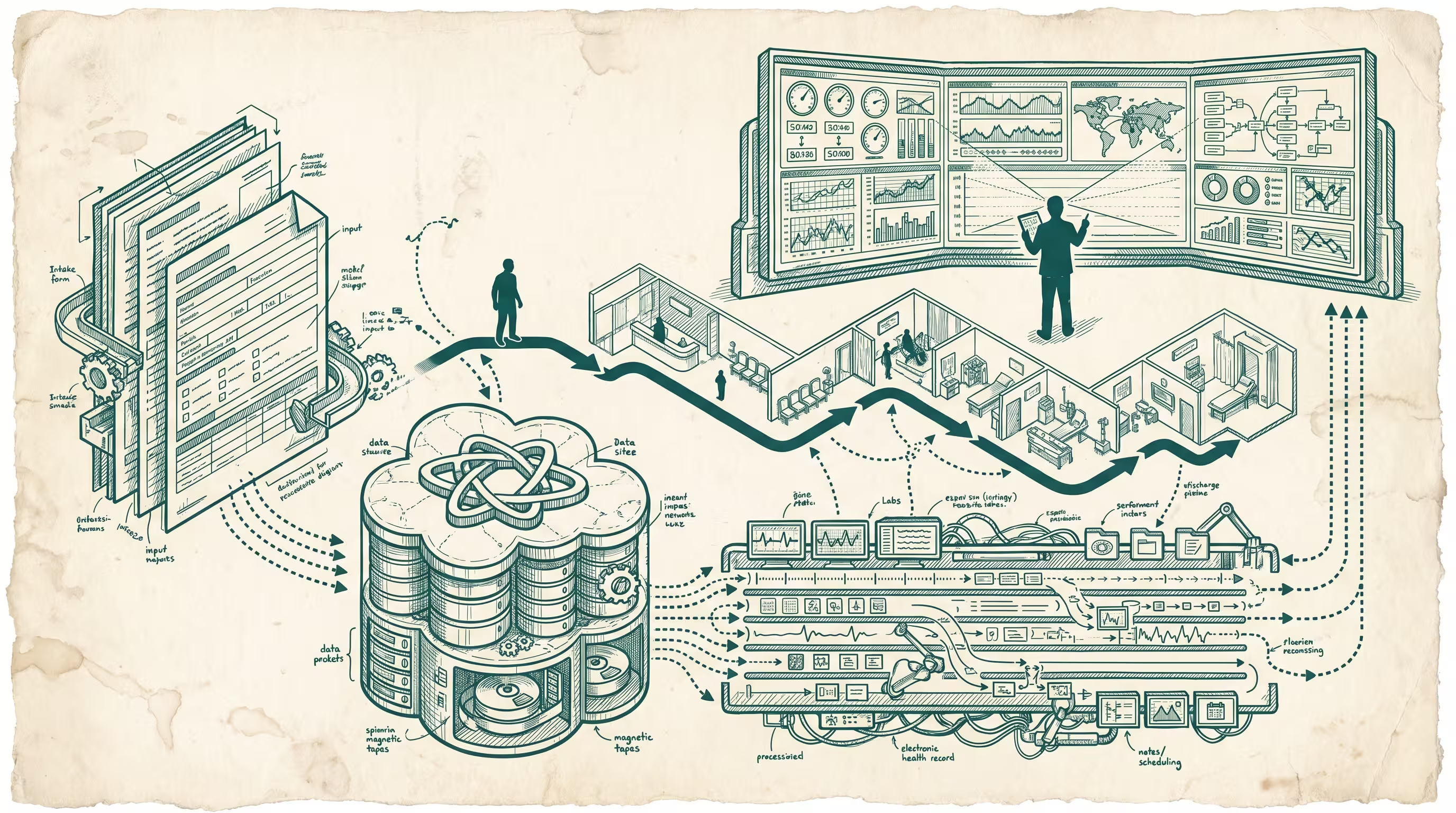

I have spent more time than I will admit looking at the marketing material for "AI-driven patient journey" tools. There is a category emerging here. The category is not what the marketing says it is. The category, in 2023 and in most of what I expect to see in 2024, is journey-mapping software with an AI layer bolted on: the AI does not change the journey, it changes the dashboard. The journey was already mapped. The intake form was already a Salesforce object. The appointment was already a Cerner row. What the AI is doing is composing sentences over the top of those rows so that whoever sits in front of the dashboard does not have to write the sentences themselves.

That is not a small thing. Saving an operations director four hours a week of slide-writing is real money on the right cost center. But it is not a journey product. It is a dashboard product with better copy.

The reason this matters: a journey product changes what happens to the patient. A dashboard product changes what happens to the people looking at the patient. These are different categories. They get sold under one phrase. The phrase will hold for another year, maybe two, before someone in procurement asks the question that closes the gap, which is: which one of these tools causes a patient to do something different, and which one causes a hospital employee to do something different.

What the dashboard products actually do

Open up six of the named products in this space and you find the same three jobs, repeated:

First, they ingest claims data, EHR exports, scheduling logs, and sometimes a CRM, and they reconcile patient identifiers across the four. This is the unglamorous half of the work and it is the half that earns the contract. The hospital has been trying to do this reconciliation in-house for ten years. Every vendor that does it well is doing real work.

Second, they compute a small set of derived metrics on top of the reconciled record: time-to-first-contact, no-show probability, readmission risk, follow-up adherence. These are mostly logistic regressions wearing better clothes. The model class is not where the product lives. The product lives in the integration the metric depends on.

Third, they generate a written summary of those metrics for an operations meeting. This is the AI part. This is the part the marketing leads with. It is also the smallest part of the work, by engineering effort, by integration effort, and by what the customer would miss if it disappeared overnight. Take the LLM out of these products and the operations director still has the dashboard, still has the metrics, still has the report. They write the report themselves on a Friday afternoon, the way they did in 2021. The product still works.

That is the test, when you are asked to evaluate one of these things. Ask: what stops working if the LLM is removed. If the answer is "the report writes itself," the AI is a feature. If the answer is "the patient goes somewhere different," the AI is the product. In 2023 and 2024, almost every tool in the named category fails this test.
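To make the first two jobs concrete, here is a minimal sketch of what they look like in code. Everything in it is an assumption for illustration: the `SourceRecord` fields, the name-plus-date-of-birth match key, and the coefficients are hypothetical and not taken from any vendor. Real reconciliation is usually probabilistic and far messier than an exact key match. Note what is absent: nothing here calls a language model, which is the point of the test.

```python
import math
import re
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class SourceRecord:
    source: str    # hypothetical source label: "claims", "ehr", "scheduling", "crm"
    local_id: str  # the patient identifier that source uses
    name: str
    dob: str       # date of birth as an ISO string, assumed cleaned upstream


def match_key(record):
    """Crude deterministic key: sorted lowercase name tokens plus date of birth."""
    tokens = sorted(re.findall(r"[a-z]+", record.name.lower()))
    return (" ".join(tokens), record.dob)


def reconcile(records):
    """Group each source's local identifier under one shared patient key."""
    patients = defaultdict(dict)
    for record in records:
        patients[match_key(record)][record.source] = record.local_id
    return dict(patients)


# Illustrative coefficients only; they are not fitted to any data.
INTERCEPT = -2.0
WEIGHTS = {"prior_no_shows": 0.8, "lead_time_days": 0.03, "reminder_sent": -0.6}


def no_show_probability(features):
    """Logistic regression score: sigmoid of a weighted sum of features."""
    z = INTERCEPT + sum(w * features.get(name, 0.0) for name, w in WEIGHTS.items())
    return 1.0 / (1.0 + math.exp(-z))


records = [
    SourceRecord("ehr", "E-1", "Jane Doe", "1980-02-14"),
    SourceRecord("claims", "C-9", "DOE, JANE", "1980-02-14"),
    SourceRecord("scheduling", "S-4", "John Roe", "1975-07-01"),
]
patients = reconcile(records)
risk = no_show_probability({"prior_no_shows": 2, "lead_time_days": 10})
```

The metric is a dozen lines. The part that would take a real team months is everything `match_key` waves away: typos, name changes, missing birth dates, and sources that disagree with each other.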

The two exceptions I have found

The first exception is the small group of tools that sit in front of the patient, not behind them. Symptom intake front-ends, where a patient describes what is wrong in plain language and the model routes to the appropriate triage category. The model is doing work the patient could not do themselves and the front desk did slowly and inconsistently. Buoy Health was the early version of this. K Health has a flavor of it. The reason these are exceptions is that the model is producing a decision that changes where the patient goes next. Take the LLM out and the patient calls a phone tree. Different journey.

Worth being precise about why this is hard. The intake front-end is doing two jobs at once: it is converting unstructured patient speech into a structured triage decision, and it is doing so inside a regulatory envelope where the wrong answer carries liability the dashboard product never has to think about. The marketing-led journey vendors will not enter this category for another two years because the moment they do, they pick up an FDA posture they have been carefully avoiding. The defensibility of the intake-front-end vendors is not the model. It is the eighteen-month head start on regulatory work the dashboard vendors have not started.

The second exception, much smaller and earlier-stage, is the set of tools that re-write the patient's instructions after a visit so the patient can actually follow them. Discharge instructions are notorious. They are written by clinicians, in clinician language, sometimes literally pasted from an EHR template, and the patient misreads them or ignores them. There is a small set of products building on the LLM as a translation layer between the clinical instructions as written and the clinical instructions as the patient actually needs to receive them. The model is producing a different artifact for the patient than the clinician produced. The journey changes. Adherence changes. Readmission rates change in measurable ways in early studies.

These two exceptions share a shape: the AI is producing the artifact the patient acts on. Not the artifact the staff looks at. Not the slide. The thing the patient reads, the thing the patient hears, the thing that determines the next decision. That is the category the marketing language is trying to claim for itself, and that is the category the marketing language has not actually entered.

A possible third exception, which I am still uncertain about

There is a third category that may be an exception. I am noting it for completeness and because the operator buying these tools in 2024 should know it exists. Post-discharge monitoring layers, where the patient receives daily or weekly check-ins between appointments, the model parses the responses, and a clinician is paged only when the response signals a change worth a human eye. CareSignal, before its acquisition into Lightbeam, was the clearest version of this. There are early-stage vendors building the LLM-augmented version of the same idea, where the check-in adapts to the patient's previous response instead of sending the same six questions every Tuesday.

I am uncertain about this one because the work being done by the model is mostly classification, and classification was a solved problem before LLMs. The reason it might still count is that the adaptive question-generation does change the patient's experience in a measurable way; the patient who was asked a relevant follow-up question answers it, and the patient who was asked the same six questions every week stops answering by week four. The journey changes because the engagement does not collapse. That is the test passing, even if the model class is humbler than the marketing implies. Watch this category. The clarity will arrive when somebody publishes adherence numbers from a study that controls for the question-generation layer specifically.
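A rough sketch of the shape of that loop, under loud assumptions: the `CheckIn` fields, the thresholds, and the question wording are all hypothetical, and plain rules stand in here for whatever model a vendor actually uses. It is not clinical guidance. It only shows the two moves described above: page a clinician on a meaningful change, and choose the next question from the last answer.

```python
from dataclasses import dataclass


@dataclass
class CheckIn:
    pain_score: int        # patient-reported, 0 to 10 (hypothetical field)
    took_medication: bool  # hypothetical field


def should_page_clinician(previous, current, pain_jump=3):
    """Page only when the response changed enough to warrant a human eye."""
    if previous.took_medication and not current.took_medication:
        return True
    return current.pain_score - previous.pain_score >= pain_jump


def next_question(current):
    """Pick the follow-up from the latest answer instead of a fixed script."""
    if not current.took_medication:
        return "What got in the way of taking your medication?"
    if current.pain_score >= 7:
        return "Where is the pain, and has it changed since your last check-in?"
    return "Is there anything new you want your care team to know?"


last_week = CheckIn(pain_score=2, took_medication=True)
this_week = CheckIn(pain_score=6, took_medication=True)
page = should_page_clinician(last_week, this_week)
follow_up = next_question(this_week)
```

The paging rule is the classification half, the part that predates LLMs. The `next_question` half is where an LLM-augmented version would differ, and it is the half whose effect on adherence still needs the controlled study.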

What I have been wrong about

I have to name a thing I had wrong eighteen months ago, because if I do not, the rest of this piece reads as if I figured this out on the first pass. I did not. In late 2022 I looked at the first generation of these dashboard products and assumed the slide-writing layer was the harbinger and the patient-facing layer would follow within a year, on the same engineering effort and the same team. I was wrong by about a factor of three. The engineering effort to write the slide is two engineers and a product manager for a quarter. The engineering effort to enter the patient-facing category is a clinical-validation team, an FDA consultant, eighteen months of pilot studies, and a separate product organization, because the patient-facing artifact is regulated software in a way the slide is not.

The two are not on the same trajectory and never have been. The dashboard vendors who claimed they would be in the patient-facing category by 2024 were either misreading their own roadmap or not understanding the regulatory work ahead. Both are common. Neither closes the gap. I should have seen this earlier and I am noting it now so that the operator reading this in 2024 does not make the same misread when a vendor's pitch deck shows the patient-facing module on the next slide of the roadmap.

Why the category will sort itself out by 2026

The reason I think the category inevitably sorts itself out is that hospital procurement is slow, but it is not stupid. The dashboard products are being bought right now on the strength of the LLM-shaped story and the cost-savings calculation on the operations-director's-time line item. Both stories are true. Neither story is sticky. The cost-savings calculation is a 2024 budget line that becomes a 2026 commodity line. The LLM-shaped story stops being interesting once the customer realizes the LLM is the cheapest part of the stack and the integration is the expensive part. At that point the customer asks why they are paying journey-product prices for a dashboard, and the dashboard vendors either drop their pricing to match the dashboard category or build the patient-facing layer they are currently calling a roadmap item.

The vendors who built the patient-facing layer first will have a head start that is hard to close. The patient-facing layer is not an AI problem. It is a patient-trust problem, an FDA-posture problem, and a clinical-validation problem, and each of those takes eighteen to twenty-four months of work that does not look like software work. The dashboard vendors will discover this the hard way. The two exceptions above are already eighteen months into it. By the time the dashboard vendors realize what they are actually taking on when they decide to build the patient-facing module, the exceptions will have shipped, validated, and locked in the customers who care most about the journey-changing claim.

What an operator should be doing in the meantime

If you are running operations at a health system and you are evaluating these tools, the question to bring into the room is the one I named above: what stops working if the LLM is removed. Run the dashboard products under that test. Run the patient-facing ones under it too. The patient-facing ones will pass; the dashboard ones will fail. That does not mean you should not buy a dashboard product. It means you should buy it as a dashboard product, on dashboard-product economics, and stop paying the AI-journey premium for it. Save the AI-journey budget for a product that actually changes a journey.

If you are building one of these tools, the question is sharper. You can keep selling the dashboard story for another year, maybe two, and the contracts are real. You will also be in an undefended category in 2026, against vendors who spent the same year doing clinical validation on the patient-facing artifact. The category sorts itself, inevitably, into the products that change a journey and the products that change a slide. The slide-changing products are not bad. They are just not what the marketing said they were.

—TJ