The AI-for-prior-authorization pilots are the CMS mandate bet. Here is how to classify them.

TL;DR

The AI-for-prior-authorization pilots running across the major U.S. payers through 2024-2025 are the operational response to the CMS prior-authorization interoperability rule, whose requirements bind progressively through 2026-2027. The pilots fall into three structurally different patterns, each with different operational and compounding characteristics. This case study walks through the CMS mandate context, the three pilot patterns, what each one tests, and which pattern compounds in ways the others do not.

The CMS prior-authorization interoperability rule (CMS-0057-F), finalized in January 2024 and binding progressively through 2026-2027, requires impacted payers (Medicare Advantage organizations, Medicaid and CHIP programs, and qualified health plan issuers on the federally facilitated exchanges) to respond to prior-authorization requests on tighter timelines and through machine-readable infrastructure. The major U.S. payers are responding through a set of AI-for-prior-authorization pilots that have run through 2024-2025, with substantial engineering investment and meaningful operational deployment.

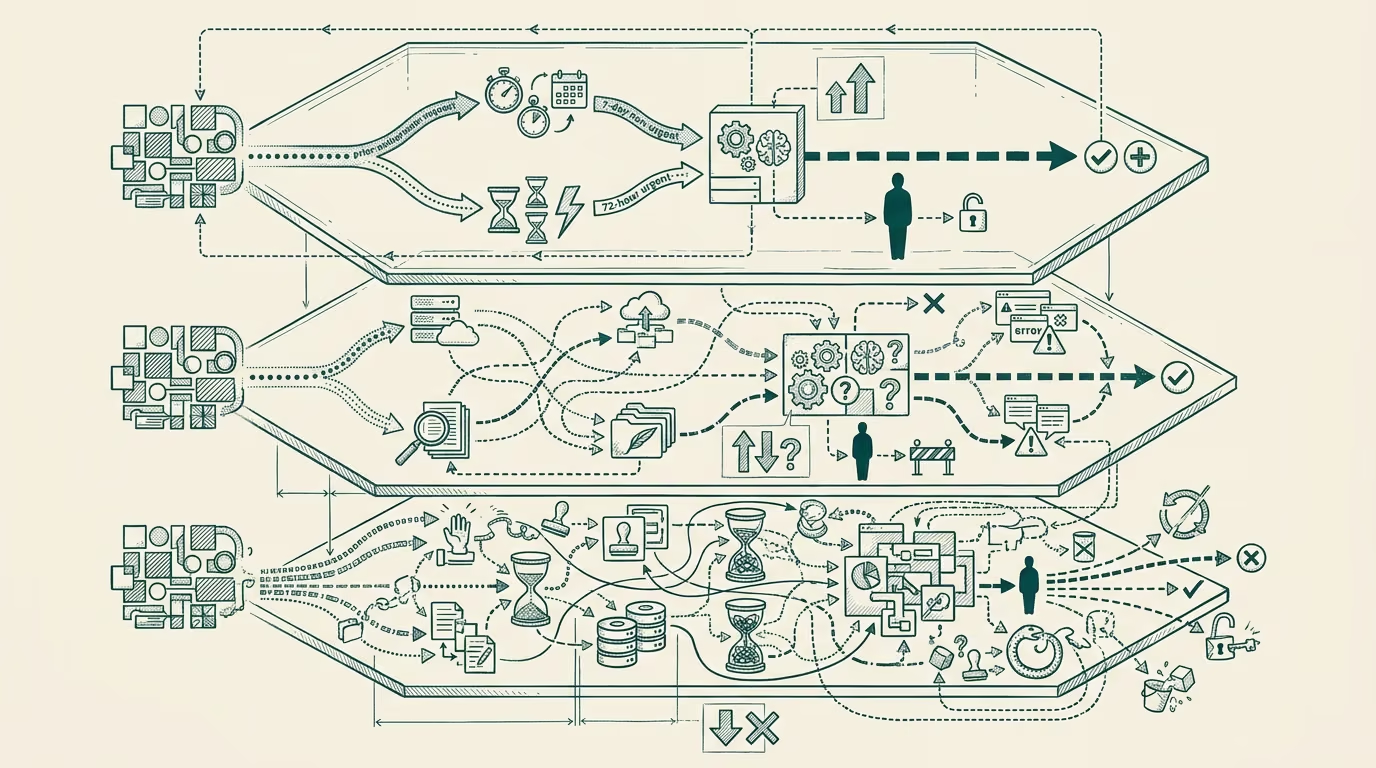

The pilots fall into three structurally different patterns. Each pattern tests a different hypothesis about how AI should integrate into the prior-auth workflow, and each has different operational characteristics, deployment complexity, and compounding profile. This case study walks through the CMS context, the three patterns, and which one compounds in ways the others do not.

The CMS mandate context

The interoperability rule sets specific requirements that covered payers must meet for prior-auth requests. The headline requirements are a 7-calendar-day standard for non-urgent (standard) requests and a 72-hour standard for urgent (expedited) requests, with the decision timeframes applying from 2026, plus the API and machine-readable infrastructure (largely due in 2027) that supports automated submission, status tracking, and decision delivery. The compliance burden is substantial because the manual-review-heavy infrastructure at most major payers cannot process the request volume within those timelines.
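The timeline arithmetic is simple, but it is the piece every automated pipeline has to get right before anything else. A minimal sketch, assuming the two windows are counted as plain elapsed time from receipt (a simplification: the rule text governs the exact counting, and stricter state timelines are not modeled). `Priority`, `decision_due`, and `hours_remaining` are hypothetical names:

```python
from datetime import datetime, timedelta, timezone
from enum import Enum


class Priority(Enum):
    """Request priority, per the rule's headline requirements."""
    URGENT = "urgent"          # expedited request: 72-hour standard
    NON_URGENT = "non_urgent"  # standard request: 7-calendar-day standard


# Assumption: windows are treated as elapsed time from receipt.
DECISION_WINDOWS = {
    Priority.URGENT: timedelta(hours=72),
    Priority.NON_URGENT: timedelta(days=7),
}


def decision_due(received_at: datetime, priority: Priority) -> datetime:
    """Return the latest time a decision can be delivered for a request."""
    if received_at.tzinfo is None:
        raise ValueError("received_at must be timezone-aware")
    return received_at + DECISION_WINDOWS[priority]


def hours_remaining(received_at: datetime, priority: Priority, now: datetime) -> float:
    """Hours left before the decision window closes (negative if overdue)."""
    return (decision_due(received_at, priority) - now).total_seconds() / 3600


if __name__ == "__main__":
    received = datetime(2026, 3, 2, 9, 0, tzinfo=timezone.utc)
    print(decision_due(received, Priority.URGENT))      # 2026-03-05 09:00 UTC
    print(decision_due(received, Priority.NON_URGENT))  # 2026-03-09 09:00 UTC
```

Requiring timezone-aware timestamps is a deliberate choice: requests arrive across time zones, and a naive timestamp silently shifts a 72-hour deadline by hours.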

The AI-for-prior-authorization deployment is, in operational terms, the central response to the mandate. The pilots running at the major payers through 2024-2025 are the engineering investment meant to get payers to their post-mandate operating state. The investment is large and the timeline is short, which is why the pilots are as visible in public commentary as they are.

The first pattern: rules-engine augmentation

The first pattern uses AI to augment the rules-engine-and-decision-tree infrastructure that payers have run for prior-auth processing for years. The AI runs alongside the rules engine, scoring incoming requests for their likelihood of approval under the established rules, routing high-confidence approvals to automated processing and ambiguous cases to human review.
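The routing logic of this pattern can be sketched in a few lines. This is an illustration under stated assumptions, not any payer's implementation: the rules engine and the approval-likelihood model are passed in as hypothetical callables, the 0.95 threshold is arbitrary, and the choice that the model can only fast-track (never deny) is an assumption that matches the "approvals automated, ambiguous cases to humans" split described above.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Callable


class Route(Enum):
    AUTO_APPROVE = "auto_approve"  # high-confidence approval, no human touch
    HUMAN_REVIEW = "human_review"  # ambiguous or rule-failing cases


@dataclass
class Request:
    request_id: str
    service_code: str           # hypothetical placeholder codes in the example
    diagnosis_codes: list[str]


def route_request(
    request: Request,
    rules_engine_approves: Callable[[Request], bool],  # existing decision tree
    approval_score: Callable[[Request], float],        # model's approval likelihood
    threshold: float = 0.95,                           # illustrative cutoff
) -> Route:
    """Route a request using the existing rules engine plus a model score.

    The model never denies: a request skips human review only when the rules
    engine approves it AND the model's score clears the threshold.
    """
    if rules_engine_approves(request) and approval_score(request) >= threshold:
        return Route.AUTO_APPROVE
    return Route.HUMAN_REVIEW
```

Because the score can only promote cases the rules engine already approves, the sketch also makes the pattern's ceiling visible: whatever the rules engine gets wrong, the augmentation inherits.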

The pattern is operationally conservative. It works inside the existing payer infrastructure. It produces measurable improvements in cycle time without requiring substantial workflow redesign. It is the pattern most likely to ship inside the CMS-mandate timeline because it can be deployed against the existing infrastructure without rebuilding the underlying decision-making mechanism.

The pattern is also operationally limited. The AI augmentation is bounded by the quality of the underlying rules engine. If the rules engine produces incorrect or outdated decisions in a particular category of request, the augmentation will produce the same incorrect decisions faster. The pattern does not address the systematic quality issues the prior-auth process has been criticized for; it addresses timeline and throughput without addressing quality.

Payer deployment of this pattern through 2024-2025 has been heavy because the urgency of meeting the CMS timeline favors the conservative option. The pattern's longer-cycle compounding is limited because the underlying rules-engine quality remains the bottleneck.

The second pattern: LLM-based clinical-evidence review

The second pattern uses LLM-based AI to read the clinical-evidence package that supports a prior-auth request (the clinical notes, the imaging, the prior-treatment history) and produce a recommendation against the payer's clinical criteria for the requested service. The pattern uses the LLM's natural-language capability to extract clinical context that the rules-engine pattern cannot. The recommendation is one of three outcomes: an approval, a denial with an explanation, or a flag for clinical review.
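The three-way output is where the guardrails for this pattern live. A minimal sketch, assuming the LLM is prompted to return JSON with hypothetical fields `decision`, `explanation`, and `cited_criteria`; the model call itself is out of scope, and the policy that malformed or ungrounded output falls back to clinical review is an illustrative assumption, not something the source specifies:

```python
import json
from dataclasses import dataclass
from enum import Enum


class Decision(Enum):
    """The three outcomes the pattern produces."""
    APPROVE = "approve"
    DENY = "deny"
    FLAG = "flag_for_clinical_review"


@dataclass(frozen=True)
class Recommendation:
    decision: Decision
    explanation: str
    cited_criteria: tuple[str, ...]


def parse_recommendation(raw: str, known_criteria: set[str]) -> Recommendation:
    """Validate the model's JSON output, downgrading anything unsafe to a flag.

    Illustrative guardrails (assumptions, not from the source):
    - malformed output is never acted on;
    - citing a criterion ID the payer does not have is treated as hallucination;
    - a denial must carry an explanation and cite at least one known criterion.
    """
    try:
        data = json.loads(raw)
        decision = Decision(data["decision"])
        explanation = str(data.get("explanation") or "").strip()
        cited = tuple(str(c) for c in data.get("cited_criteria", []))
    except (ValueError, KeyError, TypeError, AttributeError):
        # json.JSONDecodeError is a subclass of ValueError
        return Recommendation(Decision.FLAG, "unparseable model output", ())
    if any(c not in known_criteria for c in cited):
        return Recommendation(Decision.FLAG, "cited an unknown criterion", cited)
    if decision is Decision.DENY and (not explanation or not cited):
        return Recommendation(Decision.FLAG, "denial without a grounded explanation", cited)
    return Recommendation(decision, explanation, cited)
```

The asymmetry is intentional: an approval passes with lighter checks, while a denial has to be tied to a criterion the payer actually publishes, which is what makes the "denial with an explanation" outcome auditable.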

The pattern is operationally more ambitious than the first. It requires the payer to have validated clinical criteria documented in machine-readable form, LLM-evaluation infrastructure that produces reliable recommendations, and a clinical-review workflow for the ambiguous cases the LLM flags. The deployment timeline is substantially longer than the first pattern's because this infrastructure requires more engineering work.
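"Clinical criteria in machine-readable form" can be made concrete with a toy schema. A minimal sketch, assuming an upstream extractor (the LLM step) has already turned the evidence package into a dictionary of named facts; the `Criterion` schema, the fact keys, and the example criteria are all hypothetical and are not real clinical guidelines:

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Criterion:
    """One machine-readable clinical criterion (hypothetical schema)."""
    criterion_id: str
    description: str
    fact: str                          # key produced by the evidence extractor
    min_value: Optional[float] = None  # numeric threshold; None means "must be True"


def evaluate(criteria: list[Criterion], facts: dict[str, object]) -> dict[str, str]:
    """Return 'met', 'not_met', or 'missing' for each criterion.

    'missing' is kept distinct from 'not_met' (an assumed design choice):
    absent evidence is a documentation gap, not grounds for a denial.
    """
    results: dict[str, str] = {}
    for c in criteria:
        if c.fact not in facts:
            results[c.criterion_id] = "missing"
        elif c.min_value is None:
            results[c.criterion_id] = "met" if facts[c.fact] is True else "not_met"
        else:
            value = facts[c.fact]
            numeric = isinstance(value, (int, float)) and not isinstance(value, bool)
            results[c.criterion_id] = "met" if numeric and value >= c.min_value else "not_met"
    return results
```

Separating "missing" from "not met" is the part that matters for decision quality: a criteria engine that conflates the two turns incomplete paperwork into inappropriate denials.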

The pattern produces meaningful improvements in both cycle time and decision quality, and decision quality is what differentiates it from the first pattern. The LLM's ability to read the full clinical-evidence package and produce a contextually appropriate recommendation can reduce the inappropriate denials the manual-review process has been criticized for, while also reducing the inappropriate approvals the rules-engine-only pattern can produce.

The deployment of this pattern through 2024-2025 has been concentrated at the payers with substantial in-house clinical-AI engineering capacity (the larger commercial payers, selected Medicare Advantage operators) and at specialty-vendor offerings that integrate with the payer's existing infrastructure. The pattern's longer-cycle compounding is meaningful because the LLM-evaluation infrastructure improves with each deployment cycle.

The third pattern: full-stack platform replacement

The third pattern replaces the payer's existing prior-auth workflow with a new platform-tier offering, with AI integrated as a foundational element of the platform rather than as an augmentation of existing infrastructure. The pattern is offered by specialty vendors that have built end-to-end prior-auth platforms, with the payer's existing infrastructure serving as a data source rather than the operational core.

The pattern is operationally the most ambitious. It requires the payer to migrate the prior-auth workflow off the existing infrastructure, retrain the staff, and integrate the new platform with the broader payer-systems environment. The deployment timeline is the longest of the three patterns, with multi-year migration cycles being typical.

The pattern produces substantial improvements when the migration completes. The platform-tier infrastructure can be designed against the post-mandate operational requirements rather than retrofitted onto pre-mandate infrastructure, which produces operational characteristics the augmentation patterns cannot match.

Deployment of this pattern through 2024-2025 has been smaller than that of the augmentation patterns because the migration timeline does not align with the CMS mandate timeline. Payers needing to meet the 2026-2027 deadlines have generally chosen the augmentation patterns to ship within the timeline; the platform-replacement pattern has been deployed mainly at payers with longer strategic horizons or at specialty-vertical operators.

Which pattern compounds

The pattern that compounds in ways the others do not is the second one: LLM-based clinical-evidence review. The reason is that the LLM-evaluation infrastructure improves continuously through deployment, with each evaluation cycle producing data that improves the next deployment cycle. The first pattern's compounding is bounded by the underlying rules-engine quality, which improves slowly. The third pattern's compounding is potentially substantial but is deferred behind the multi-year migration timeline that limits the near-term compounding.
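The compounding claim rests on a feedback loop: each cycle of clinician-reviewed cases becomes evaluation data for the next. A minimal sketch of one cycle's bookkeeping, assuming a sample of the model's approve/deny recommendations is audited by clinical reviewers; `ReviewedCase` and `evaluation_cycle` are hypothetical names, and agreement rate is only one of several metrics a payer might track:

```python
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class ReviewedCase:
    case_id: str
    model_decision: str     # the LLM's approve/deny recommendation
    reviewer_decision: str  # the clinical reviewer's audited decision


def evaluation_cycle(cases: list[ReviewedCase]) -> tuple[float, list[ReviewedCase], Counter]:
    """Summarize one deployment cycle of audited cases.

    Returns the model/reviewer agreement rate, the disagreements (candidates
    for the next cycle's evaluation set), and a count of disagreement types
    keyed by (model_decision, reviewer_decision).
    """
    if not cases:
        return 0.0, [], Counter()
    disagreements = [c for c in cases if c.model_decision != c.reviewer_decision]
    agreement = 1 - len(disagreements) / len(cases)
    kinds = Counter((c.model_decision, c.reviewer_decision) for c in disagreements)
    return agreement, disagreements, kinds
```

Counting disagreement types separately matters: a model that wrongly denies is a different failure from one that wrongly approves, and the quality argument for pattern two depends on reducing both. The rules-engine pattern has no equivalent loop unless someone rewrites the rules by hand.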

For payers making prior-auth strategy decisions in 2025-2026, the operator-class read is that the augmentation patterns (especially pattern one) are the right deployment for the CMS-mandate timeline, but the LLM-based clinical-evidence-review pattern is the right longer-term strategic investment. Payers that deploy only pattern one for mandate compliance and do not invest in pattern two will face a longer-cycle competitive disadvantage as the LLM-evaluation infrastructure compounds at the payers that did invest.

For founders building AI-for-prior-authorization products, the operator-level advice is to build for the second pattern, with strong augmentation-pattern integration as the deployment path. The buyer needs augmentation-pattern delivery in the near term to meet the mandate; the long-term compounding is in the LLM-evaluation infrastructure. Vendors who can deliver both, with a clear deployment path from one to the other, are positioned for durable category leadership.

The CMS mandate is the forcing function. The three patterns are the operational response. The second pattern is where longer-cycle category leadership concentrates. The next 24-36 months will produce visible deployment data on which patterns ship and which compound, and those reading that data carefully will be positioned for the post-mandate operational reality the rule is creating.

—TJ