Amodei skipped the deployment timeline.

TL;DR

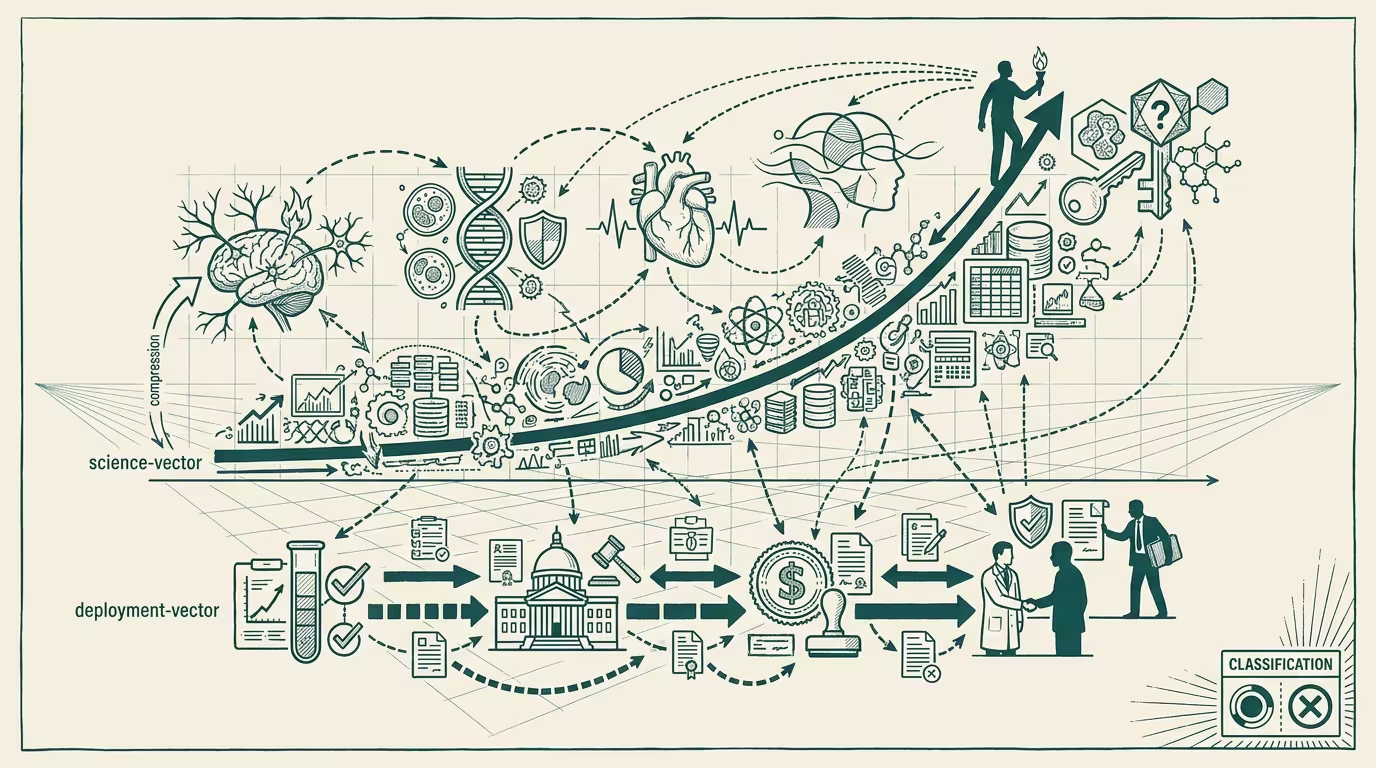

Dario Amodei's "Machines of Loving Grace" (2024-10-11) predicted that AI would compress 50–100 years of biomedical progress into 5–10 years, with parallel arcs in mental health, neuroscience, and economic development. Operator read: the manifesto is right about the science vector and silent on the deployment vector, meaning the trial, regulatory, reimbursement, and physician-trust timelines that decide whether the compression reaches a patient. Four places where the deployment vector breaks the compression.

Dario Amodei published "Machines of Loving Grace" on October 11, 2024. The biomedical section is the longest and most detailed. The load-bearing claim: AI would compress 50-100 years of biomedical progress into 5-10 years, with parallel compression arcs in mental health treatment, neuroscience research, and economic development across global health categories. The manifesto reads as a serious technical projection, calibrated against specific capability assumptions that Anthropic's Claude family was on track to support.

This piece treats Amodei's argument on its own terms, then engages with the part the manifesto is silent on: the deployment vector. The science can compress at the rate Amodei describes. Whether the compression reaches a patient depends on trial, regulatory, reimbursement, and physician-trust timelines that the manifesto does not engage with.

What Amodei is right about: the science vector

The manifesto's biomedical-compression argument is structured around AI accelerating four research bottlenecks. Hypothesis generation: AI proposes mechanism candidates faster than human researchers, narrowing the search space at the front of the discovery funnel. Literature integration: AI synthesizes the existing literature across disciplines, surfacing connections that human researchers miss because of the sheer volume. Experimental design: AI proposes assay designs and statistical approaches that capture more information per experiment. Computational simulation: AI models molecular dynamics, protein folding, and cellular interactions at fidelities that approximate the wet-lab outcome with sub-percent error.

Each bottleneck is real. Each is, in the manifesto's framing, accelerable by AI capability that exists or is on track to exist by 2026-2028. The compute requirements scale, the model architectures scale, and the training-data availability scales. AlphaFold 3 (2024), the AlphaProteo class of design tools, the Recursion-class molecular-simulation pipelines, and the Schrödinger-class computational chemistry stack are all operating-relevant precedents that demonstrate the capability is not speculative. The science vector is, in operating terms, calibrated correctly.

The manifesto's specific compression estimate (50-100 years of progress in 5-10 years) is more aggressive than most operating-class biomedical researchers would project, but the directional claim is defensible. AI is producing a step-change improvement in the discovery-stage productivity of biomedical research, and the improvement is durable.

That is the part the manifesto gets right.

What the manifesto is silent on: the deployment vector

The compression Amodei describes is at the discovery layer. The patient-reaching layer requires every discovery to clear four additional gating constraints, and each constraint operates on its own clock.

Clinical-trial enrollment is the first constraint. _AI does not meaningfully accelerate trial enrollment._ The patient-recruitment process depends on the geographic distribution of eligible patients, on payer coverage of trial-related care, on physician-class willingness to refer patients, and on patient-class willingness to enroll. None of these is AI-accelerable in the sense Amodei describes. The current Phase 1-3 enrollment timelines for serious diseases run 18-48 months in aggregate. AI might accelerate trial design, but the actual months on the ground recruiting patients run on human-process clocks. A discovery accelerated by 10x at the bench is bottlenecked by 1x trial-enrollment timelines downstream.
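The bottleneck arithmetic has the same shape as Amdahl's law: speeding up one stage of a sequential pipeline cannot shrink the total below the time spent in the stages that stay at 1x. A minimal Python sketch, assuming discovery and the downstream gates run strictly in sequence; the function names and the 60-month and 120-month durations are hypothetical inputs chosen for illustration, not figures from the manifesto or from trial data.

```python
"""Amdahl's-law-style sketch: speeding up discovery alone.

All durations are hypothetical inputs for illustration.
"""


def end_to_end_speedup(discovery_months: float,
                       downstream_months: float,
                       discovery_speedup: float) -> float:
    """Overall speedup when only the discovery stage is accelerated.

    The downstream gates (enrollment, review, reimbursement, adoption)
    are assumed to run after discovery and to stay at 1x.
    """
    if discovery_speedup <= 0:
        raise ValueError("discovery_speedup must be positive")
    before = discovery_months + downstream_months
    after = discovery_months / discovery_speedup + downstream_months
    return before / after


def speedup_ceiling(discovery_months: float, downstream_months: float) -> float:
    """Limit of end_to_end_speedup as discovery time shrinks to zero."""
    return (discovery_months + downstream_months) / downstream_months


if __name__ == "__main__":
    # Hypothetical: 60 months of discovery, 120 months of downstream gates.
    print(f"{end_to_end_speedup(60, 120, 10):.2f}")  # prints 1.43
    print(f"{speedup_ceiling(60, 120):.2f}")          # prints 1.50
```

Under those assumed durations, a 10x discovery speedup yields roughly 1.4x end to end, and no discovery speedup can push past 1.5x while the downstream gates hold at 1x.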

Regulatory review is the second constraint. The FDA, EMA, and PMDA review timelines are calibrated to human reviewer capacity, statutory review-period mandates, and adversarial-process dynamics with sponsor-class submissions. Recent FDA AI-validation work (the 2025 strategic roadmap) shifts some preclinical-evidence categories toward computational substitutes, but the IND, NDA, and BLA review periods themselves are unchanged. Standard FDA review for a serious-disease therapy runs 10-18 months from filing to decision. A discovery that compresses by 10x at the lab is bottlenecked by 1x at the regulator.

Payer reimbursement is the third constraint. CMS, commercial-payer formulary committees, and pharmacy benefit managers run their own multi-month review cycles after FDA approval to decide coverage tier, prior-authorization requirements, and step-therapy positioning. A novel therapy approved by the FDA in 2026 might not have CMS coverage decisions until late 2027 and full commercial-payer parity until 2028-2029. The patient who benefits is the patient whose payer covers the therapy. A discovery accelerated through trial and approval is then bottlenecked by the payer-decision calendar, which Amodei's framing doesn't engage with.

Physician-trust calibration is the fourth constraint. Even when a therapy clears trials, regulatory review, and payer coverage, physicians have to decide to prescribe it. The decision involves trust calibration against the existing standard of care, against the prescriber's own clinical experience, and against patient-suitability assessment for the new therapy. The diffusion curve through the prescriber class is, in healthcare-history terms, slow. The 50% adoption point for a novel therapy in primary-care practice typically arrives 5-8 years post-approval. The compression Amodei describes does not accelerate physician-trust diffusion.
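The diffusion claim can be pictured with a logistic (S-shaped) adoption curve, a common stylized model of technology diffusion. A minimal sketch, assuming that shape; the midpoint t50 = 6.5 years sits inside the 5-8 year range above, while the steepness k = 0.8 per year and both function names are hypothetical, not estimates from prescribing data.

```python
"""Logistic (S-curve) sketch of prescriber adoption after approval.

The logistic shape and the steepness k are assumptions for illustration;
t50 is placed inside the 5-8 year range quoted in the text.
"""
import math


def adoption_share(years_since_approval: float, t50: float, k: float) -> float:
    """Share of prescribers using the therapy, on an assumed logistic curve."""
    return 1.0 / (1.0 + math.exp(-k * (years_since_approval - t50)))


def years_to_reach(share: float, t50: float, k: float) -> float:
    """Invert the logistic curve: years after approval until `share` is reached."""
    if not 0.0 < share < 1.0:
        raise ValueError("share must be strictly between 0 and 1")
    return t50 + math.log(share / (1.0 - share)) / k


if __name__ == "__main__":
    T50, K = 6.5, 0.8  # hypothetical: 50% adoption at 6.5 years, k = 0.8 per year
    for target in (0.1, 0.5, 0.9):
        print(f"{target:.0%} adoption after ~{years_to_reach(target, T50, K):.1f} years")
```

On this assumed model the adoption clock starts at approval, so compressing discovery moves the curve's start date but leaves the years between approval and broad prescribing untouched.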

What the operator-class plan does with the deployment vector

Discovery-vector compression and patient-reaching compression are different timelines and have to be modeled separately. Operators building biotech investment theses on Amodei-class compression projections need to be specific about which vector they are modeling. Discovery-vector compression is real and accelerating; patient-reaching compression is the four-gate cumulative timeline and is not accelerating at the same rate. Investment theses that conflate the two are mispricing the deployment-vector risk.

The gating constraints are not equally rigid. Some are addressable through operator-class work. Trial enrollment can be improved through better patient-matching and through AI-assisted recruiting (modestly accelerable, ~20-30% improvement plausible). Regulatory review can be accelerated through agency-collaboration and validated computational-evidence frameworks (some categories accelerable to ~50% timeline reduction). Payer reimbursement is mostly inelastic to operator-level effort. Physician-trust diffusion is, in operating terms, the slowest gate and the least addressable. The operator question is how much of the four-gate cumulative timeline is compressible by operator-level effort. The answer is: roughly 30-50% in aggregate, not 90%+.
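The aggregate figure depends on how long each gate is, not only on how compressible it is. If the gates run in sequence, the cumulative reduction is the duration-weighted average of the per-gate reductions, so a long gate that barely moves dilutes gains elsewhere. A minimal sketch under that sequential assumption; the `Gate` type, the function name, and the example durations and reduction fractions are hypothetical, to be replaced with a plan's own estimates.

```python
"""Aggregate compression across sequential gates (duration-weighted)."""
from dataclasses import dataclass


@dataclass(frozen=True)
class Gate:
    name: str
    months: float     # baseline duration of the gate (hypothetical input)
    reduction: float  # fraction removable by operator effort, between 0 and 1


def aggregate_reduction(gates: list[Gate]) -> float:
    """Fraction of the cumulative timeline removed when gates run in sequence.

    Equals sum(months * reduction) / sum(months), a duration-weighted
    average, so long gates dominate the result.
    """
    total = sum(g.months for g in gates)
    if total <= 0:
        raise ValueError("total duration must be positive")
    for g in gates:
        if not 0.0 <= g.reduction <= 1.0:
            raise ValueError(f"{g.name}: reduction must be in [0, 1]")
    saved = sum(g.months * g.reduction for g in gates)
    return saved / total


if __name__ == "__main__":
    # Hypothetical two-gate example: a 50% cut on the shorter gate
    # is diluted by a longer gate that does not move.
    gates = [
        Gate("shorter, addressable gate", 10, 0.5),
        Gate("longer, inelastic gate", 40, 0.0),
    ]
    print(f"{aggregate_reduction(gates):.0%}")  # prints 10%
```

A plan would substitute its own durations and per-gate reductions for the four gates to test whether its aggregate assumption holds.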

The manifesto's silence on the deployment vector is itself the operator signal, and it is not accidental. The manifesto is calibrated to a frontier-lab CEO audience. Engaging the deployment vector requires healthcare-operating expertise that is outside a frontier lab's wheelhouse and is not the manifesto's argument. The silence is the structural gap that operator-grade healthcare-AI plans have to fill. The plan that takes Amodei's compression seriously and adds the deployment-vector engagement is the plan that captures what the manifesto's argument implies for patient outcomes. The plan that takes Amodei's compression seriously and ignores the deployment vector is the plan that overestimates near-term patient impact by a factor of 5-10x.

What survives all of this

The pattern that crosses pillars is that the science-vs-deployment asymmetry recurs across every healthcare-AI category. Diagnostic AI: science compression is real, deployment is gated by physician-workflow integration and reimbursement coding. Therapeutic AI: science compression is real, deployment is gated by the four-gate cumulative timeline above. Prevention AI: science compression is real, deployment is gated by payer willingness to invest in prevention vs. treatment. Each category has its own deployment-vector gating, and each has its own operator plan that has to engage with the gating.

What survives the trade-press framing: "Machines of Loving Grace" is one of the more consequential AI-and-biomedicine vision documents of the 2024-2025 cycle. Its science-vector argument is calibrated correctly, and the discovery-stage compression is real. Its deployment-vector silence is the structural gap that healthcare-AI operators have to fill, and the operator-level discipline is to model the four gates explicitly rather than default to discovery-stage projections as proxies for patient impact. The biotech investors and operators who model the gates explicitly are the ones whose 2027-2030 plans pencil out. The ones who don't are the ones whose plans collapse against the deployment timeline the manifesto did not engage with.

Amodei skipped the deployment timeline. The science vector is right. The deployment vector is the operator work the manifesto does not do. The compression reaches the patient at the rate the four gates allow, and the gates are calibrated to clocks that AI capability does not touch. Operators who plan against the science vector and skip the deployment vector are planning against half the timeline. The half that decides whether the compression reaches a patient is the half the manifesto leaves to the operator class to figure out.

—TJ