Anthropic open-sourced the plumbing. Your roadmap just got shorter.

TL;DR

Model Context Protocol shipped on 2024-11-25 as an open standard with reference SDKs and pre-built servers for Drive, Slack, GitHub, and Postgres. Within twelve months OpenAI and Google had adopted it, and the community had shipped thousands of servers. The M×N integration problem is solved at the protocol layer, which means your AI-integration vendor is now an AI-context-curation vendor: different category, different pricing.

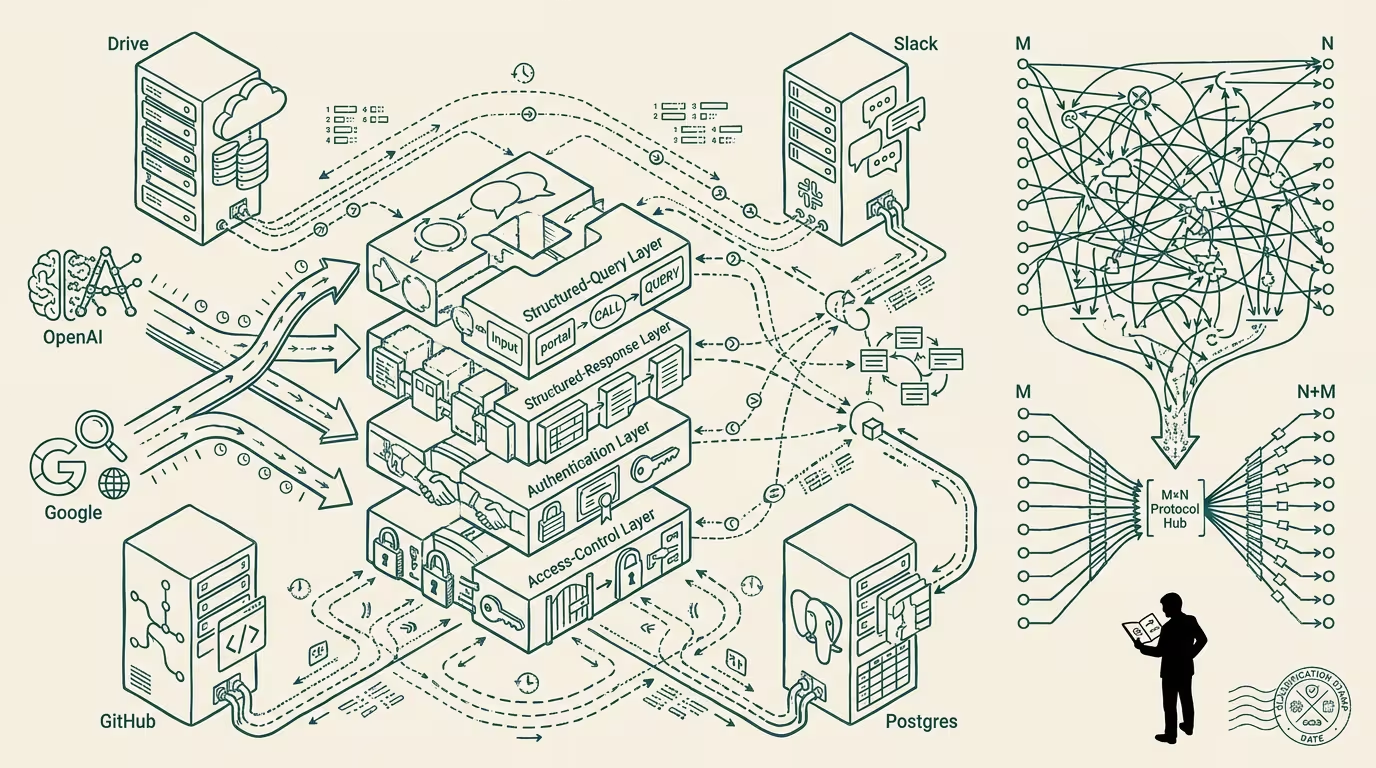

Anthropic shipped Model Context Protocol on November 25, 2024. The release framed it as an open standard for agent-to-tool integration: structured requests from the agent and structured responses from the data source or tool, carried as JSON-RPC 2.0 messages; reference SDKs in Python and TypeScript; and pre-built servers for Google Drive, Slack, GitHub, Git, Postgres, and Puppeteer. Authorization was not part of that first release; the specification added an OAuth-based authorization framework in its March 2025 revision.
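What "structured queries, structured responses" looks like on the wire can be sketched in a few lines. This is a minimal, hand-rolled illustration, not the official SDK: it assumes one hypothetical tool named `query_orders`, skips the `initialize` handshake and any real transport (stdio or HTTP), and dispatches JSON-RPC 2.0 strings in-process. It shows the two requests an agent uses to discover and invoke a tool, `tools/list` and `tools/call`.

```python
import json

# Hypothetical tool registry for illustration. A real server would be built with
# one of the official SDKs and served over a transport such as stdio or HTTP.
TOOLS = {
    "query_orders": {
        "description": "Count orders for a customer (hypothetical example tool).",
        "inputSchema": {
            "type": "object",
            "properties": {"customer_id": {"type": "string"}},
            "required": ["customer_id"],
        },
        "handler": lambda args: f"3 orders for {args['customer_id']}",
    }
}


def error(req_id, code: int, message: str) -> str:
    """Build a JSON-RPC 2.0 error response."""
    return json.dumps({"jsonrpc": "2.0", "id": req_id,
                       "error": {"code": code, "message": message}})


def handle(raw: str) -> str:
    """Dispatch one JSON-RPC 2.0 request string and return the response string."""
    req = json.loads(raw)
    method, req_id = req.get("method"), req.get("id")
    if method == "tools/list":
        # Advertise each tool's name, description, and JSON Schema for its arguments.
        result = {"tools": [
            {"name": name, "description": t["description"], "inputSchema": t["inputSchema"]}
            for name, t in TOOLS.items()
        ]}
    elif method == "tools/call":
        params = req.get("params", {})
        tool = TOOLS.get(params.get("name"))
        if tool is None:
            return error(req_id, -32602, "Unknown tool")  # JSON-RPC "invalid params"
        text = tool["handler"](params.get("arguments", {}))
        result = {"content": [{"type": "text", "text": text}], "isError": False}
    else:
        return error(req_id, -32601, "Method not found")  # JSON-RPC standard code
    return json.dumps({"jsonrpc": "2.0", "id": req_id, "result": result})


# Usage: the agent discovers the tool, then calls it by name with structured arguments.
print(handle(json.dumps({"jsonrpc": "2.0", "id": 1, "method": "tools/list"})))
print(handle(json.dumps({"jsonrpc": "2.0", "id": 2, "method": "tools/call",
                         "params": {"name": "query_orders",
                                    "arguments": {"customer_id": "c-42"}}})))
```

The point of the shape is that nothing in the dispatcher is specific to the calling agent: any client that speaks these two methods can use any tool the server advertises.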

The release got moderate trade-press coverage. The technical-Twitter community recognized the structural shift inside a week. The enterprise-procurement class will recognize it in 2025-2026 when their integration roadmap suddenly looks shorter.

The shift is the M×N integration problem getting replaced by an M+N integration model.

Before MCP: an AI agent that needs to read from Slack, write to Drive, query Postgres, and call GitHub had to integrate with each one through a custom connector. The vendor selling AI-integration software priced the work as N integrations per agent, M agents per customer, and the total work scaled as M×N. The vendor's revenue scaled with the multiplication. The customer's cost scaled with the multiplication. Every new tool added to the agent's surface multiplied the integration backlog.

After MCP: the agent speaks the protocol, and the tool exposes the protocol. The integration count is M+N (each agent implements the protocol once; each tool exposes itself through the protocol once), not M×N. The vendor selling AI-integration software has, structurally, the wrong product. The customer paying for the integration backlog has, structurally, just been handed a shorter roadmap.
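The arithmetic behind the before/after comparison is small enough to write down. A minimal sketch, assuming a hypothetical deployment of 4 agents and 10 tools and counting integration artifacts (connectors, clients, servers) rather than engineering effort:

```python
def point_to_point_connectors(agents: int, tools: int) -> int:
    """Without a shared protocol, every agent needs its own connector to every tool."""
    return agents * tools


def protocol_adapters(agents: int, tools: int) -> int:
    """With a shared protocol, each agent implements a client once and each tool a server once."""
    return agents + tools


def marginal_cost_of_new_tool(agents: int) -> tuple[int, int]:
    """Artifacts needed when one more tool is added: (point-to-point, via protocol)."""
    return agents, 1


# Hypothetical deployment sizes, for illustration only.
m, n = 4, 10
print(point_to_point_connectors(m, n))  # 40
print(protocol_adapters(m, n))          # 14
print(marginal_cost_of_new_tool(m))     # (4, 1)
```

The last line is the part that matters for the backlog: under point-to-point integration, a new tool costs one connector per agent; under a shared protocol, it costs one server, regardless of how many agents consume it.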

Within twelve months of MCP shipping, OpenAI and Google had adopted it. The community had shipped thousands of MCP servers covering every common enterprise data source and a long tail of specialty ones. The integration substrate had, in practical terms, replatformed.

What follows for the operator-class procurement decision is sharp. Existing AI-integration contracts need to be renegotiated. A vendor selling integration work in 2024 priced against the M×N math; the 2025-2026 renewal has to price against M+N. The savings are real and meaningful, typically a 50-70% reduction in integration line items for enterprise AI deployments. The customer who walks into the renewal aware of the protocol shift has leverage. The customer who walks in unaware pays the legacy multiplier.

What follows for the vendor category itself is repositioning. The AI-integration vendor whose business was M×N integration work is, in 2026, in a structurally smaller category. The vendors who reposition fastest move from "we integrate your AI with your tools" to "we curate the context your AI needs from across your tools." The repositioning is from integration engineering to context curation. The category that survives is smaller and operates on different unit economics.

What follows for build-vs-buy on agent platforms is a shift toward build. A company that wanted to deploy a custom agent in 2023 had to either buy an integration platform or build M×N connectors. By late 2025 the company can build the agent against MCP servers that already exist for most of its tool surface. The build option becomes meaningfully cheaper relative to buy. The platforms that priced their value on integration work saved face a structural headwind.
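On the build side, the agent carries one generic client and is pointed at whatever servers already exist. A minimal sketch, assuming hypothetical in-process `FakeServer` objects as stand-ins for MCP servers reached over a transport, and a `server.tool` naming scheme chosen here for illustration: the agent merges every server's tool catalog and routes calls without any per-tool connector code.

```python
from collections.abc import Callable
from dataclasses import dataclass, field


@dataclass
class FakeServer:
    """Hypothetical stand-in for an MCP server: a name plus tools keyed by tool name."""
    name: str
    tools: dict[str, Callable[[dict], str]] = field(default_factory=dict)

    def list_tools(self) -> list[str]:
        return sorted(self.tools)

    def call_tool(self, tool: str, arguments: dict) -> str:
        return self.tools[tool](arguments)


class Agent:
    """One generic client: the same code path works for every server it is given."""

    def __init__(self, servers: list[FakeServer]):
        # Namespace tools by server so same-named tools on different servers don't collide.
        self.routes: dict[str, tuple[FakeServer, str]] = {}
        for server in servers:
            for tool in server.list_tools():
                self.routes[f"{server.name}.{tool}"] = (server, tool)

    def catalog(self) -> list[str]:
        return sorted(self.routes)

    def call(self, qualified_name: str, arguments: dict) -> str:
        server, tool = self.routes[qualified_name]
        return server.call_tool(tool, arguments)


# Usage: adding a third server is one more list entry, not a new connector.
slack = FakeServer("slack", {"post": lambda a: f"posted to #{a['channel']}"})
github = FakeServer("github", {"search": lambda a: f"searched for {a['q']}"})
agent = Agent([slack, github])
print(agent.catalog())                             # ['github.search', 'slack.post']
print(agent.call("slack.post", {"channel": "ops"}))  # posted to #ops
```

In a real deployment the `FakeServer` methods would be replaced by `tools/list` and `tools/call` requests sent through an MCP client; the routing logic on the agent side stays the same.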

This is a substrate shift, not a feature release. The internet had the same shape in 1994, when HTTP and HTML became the substrate. The cloud era had the same shape in 2010, when AWS APIs became the substrate. The agent era is now in its substrate-stabilization phase, and MCP is, in operating terms, the agent era's substrate. Whoever built integration tooling against the pre-substrate fragmentation has a business that compresses; whoever builds against the substrate has the next platform-tier shape.

Anthropic releasing MCP as open source is a strategic choice. The lab that owns the substrate captures rents that a lab competing against the substrate cannot. The choice was Anthropic's bet that owning the substrate as an open standard is a stronger position than owning it as a closed API. By 2026 the bet looks correct; OpenAI and Google adopting MCP rather than pushing competing standards is the visible signal.

The structural read in late 2024 is to audit current AI-integration spend, identify the line items that are doing M×N work the protocol now handles for free, and budget the savings against the next renewal cycle. The vendor on the other side of those line items is, in operating terms, going to compress whether the customer recognizes it or not.
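The audit step can start as a simple join between the contract's line items and an inventory of tools that already have MCP servers. A minimal sketch, assuming hypothetical line items, costs, and server inventory (none of these figures come from a real contract):

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class LineItem:
    description: str
    tool: str         # the system this connector integrates with
    annual_cost: int  # in the contract's currency


def flag_replaceable(items: list[LineItem],
                     tools_with_servers: set[str]) -> tuple[list[LineItem], int]:
    """Return connector line items whose target tool already has an MCP server, plus their total."""
    flagged = [item for item in items if item.tool in tools_with_servers]
    return flagged, sum(item.annual_cost for item in flagged)


# Hypothetical contract and server inventory, for illustration only.
items = [
    LineItem("Slack connector maintenance", "slack", 40_000),
    LineItem("Postgres query connector", "postgres", 25_000),
    LineItem("Legacy ERP connector", "erp", 60_000),
]
available = {"slack", "postgres", "github"}

flagged, total = flag_replaceable(items, available)
print([item.description for item in flagged])  # ['Slack connector maintenance', 'Postgres query connector']
print(total)                                   # 65000
```

The flagged total is a negotiating starting point, not a guaranteed saving: an existing server still has to be evaluated, secured, and operated, and specialty systems like the ERP item stay on the custom-connector side of the ledger.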

Anthropic open-sourced the plumbing. The roadmap got shorter. The procurement decision moves accordingly.

—TJ