Aschenbrenner wrote a forecast. The market read a budget.

TL;DR

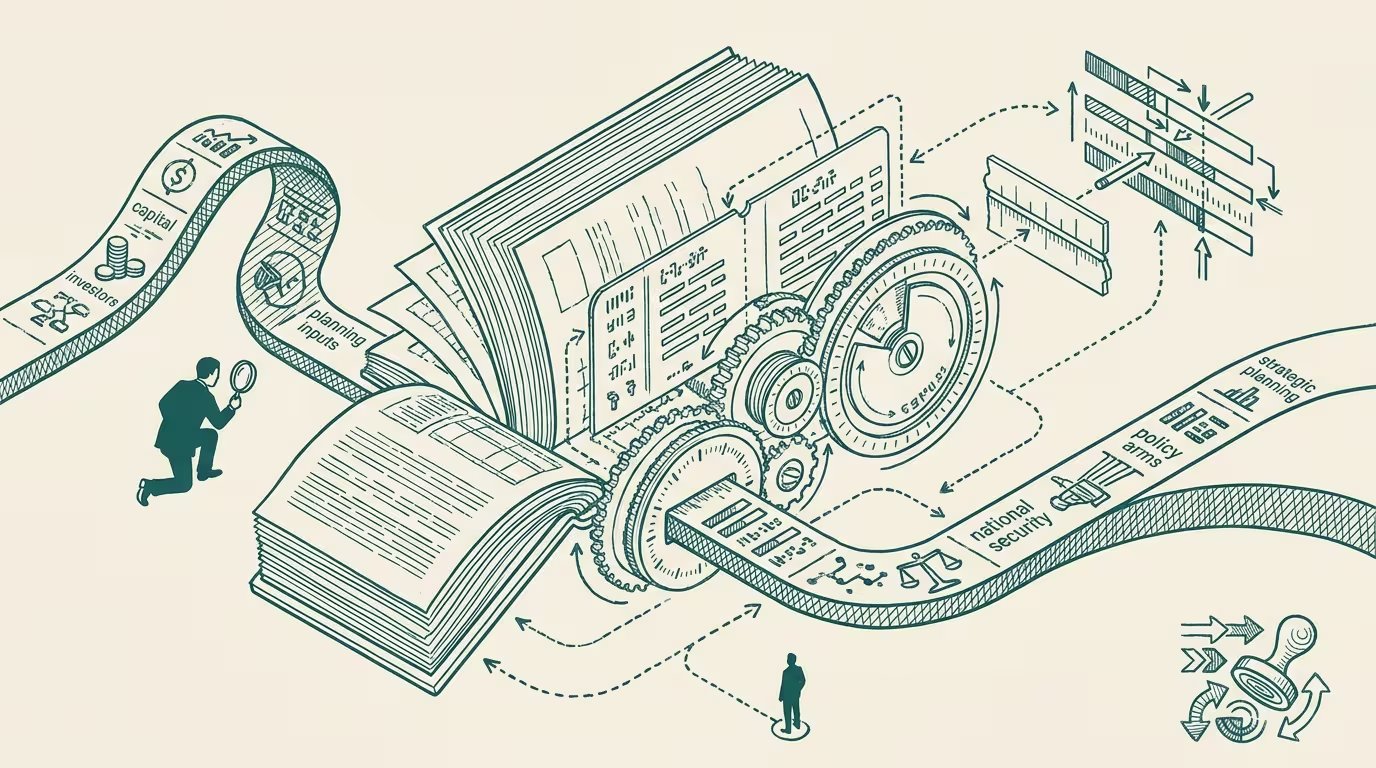

The 165-page 'Situational Awareness' essay is being read by VC LPs and DC staffers as a calendar, not a thesis. AGI-by-2027 is the worst-case planning input, not the base case. But if it's setting your competitor's hiring plan, you're already calibrating against it whether you read the essay or not.

In June 2024 Leopold Aschenbrenner, formerly of OpenAI's Superalignment team, posted a 165-page essay called "Situational Awareness: The Decade Ahead." The thesis is that AGI by 2027 is "strikingly plausible." The trendlines are compute and algorithmic efficiency. The writing is dense, the argument is layered, and the document is, as essays go, a serious one. Within ten weeks the essay had catalyzed a $1.5B hedge fund and become the standard reference document inside the Beltway AI-policy class.

The interesting thing is who is reading it.

VC LPs are reading it. They are reading it as a calendar. The thesis says AGI by 2027; the LP reads "AGI by 2027" and asks the GP a question about the fund's deployment pace, and the GP, who has now read the essay because the LP has, calibrates the deployment pace against the calendar. That conversation is happening in roughly half the AI-investing fund-of-funds reviews in mid-2024.

DC staffers are reading it. They are reading it as a budget. The thesis says the federal AI-infrastructure capex curve is bound to a national-security timeline; the staffer reads "national-security timeline" and asks the agency principal what their three-year R&D request looks like under Aschenbrenner-bracket assumptions. That conversation is happening in every AI-relevant office on the Hill.

The author wrote a forecast. The market read a budget.

Both readings are, of course, the same reading. A timeline you are obligated to plan against is, in operational terms, a budget. The interesting question is what an operator does about it.

The trap is to read the essay literally and lose a year arguing the timeline. The forecast may be right, may be off by twelve months, may be off by five years. Aschenbrenner's track record on past forecasts is not yet long enough to underwrite the literal version, and the timeline is contestable on the inside-OpenAI evidence the essay is built on. Operators who spend 2024 contesting "AGI by 2027 vs AGI by 2032" are operators who, by the end of 2024, have a debate club and no plan.

The operator move is to treat the essay as the worst-case planning input.

Worst-case planning input means: if you assume Aschenbrenner is right, what does your two-year roadmap look like, and what is the lowest-regret subset of that roadmap you would ship anyway? The lowest-regret subset is the part that pays off whether AGI lands in 2027, 2030, or 2035. A persistence architecture for agent runtimes pays off either way. A compute-procurement strategy decoupled from any single lab pays off either way. A hiring class of AI-infrastructure architects (a category that does not yet have a clean job title) pays off either way. The operator who shipped those three things in 2024 wins regardless of which calendar is right.
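For readers who think better in code, the lowest-regret filter can be sketched in a few lines of Python. This is a toy under stated assumptions, not a planning tool: the payoff flags are placeholders rather than data, the three named items are marked as paying off in every scenario only because the paragraph above asserts it, and the fourth item is a hypothetical counterexample added purely for contrast.

```python
# Illustrative sketch only. The True/False payoff flags are placeholders,
# not measurements; the last roadmap item is hypothetical.
SCENARIOS = (2027, 2030, 2035)  # candidate AGI-arrival years from the text

# Each roadmap item maps an arrival year to "does this item still pay off?"
roadmap = {
    "persistence architecture for agent runtimes":
        {2027: True, 2030: True, 2035: True},
    "compute procurement decoupled from any single lab":
        {2027: True, 2030: True, 2035: True},
    "hiring class of AI-infrastructure architects":
        {2027: True, 2030: True, 2035: True},
    # Hypothetical counterexample: a bet that only works on the early calendar.
    "hypothetical 2027-only bet":
        {2027: True, 2030: False, 2035: False},
}


def lowest_regret_subset(items, scenarios):
    """Return the names of items that pay off under every scenario."""
    return [
        name
        for name, payoff in items.items()
        if all(payoff.get(year, False) for year in scenarios)
    ]


ship_anyway = lowest_regret_subset(roadmap, SCENARIOS)
for name in ship_anyway:
    print(name)
```

The design choice worth noticing is the `all(...)`: an item survives only if it pays off on every calendar, which is what makes the argument about which calendar is right unnecessary for that subset.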

The harder operator move is to recognize that the calendar is setting your competitor's plan. If your competitor is hiring against an Aschenbrenner-bracket assumption, you are calibrating against it whether you read the essay or not. A market in which roughly half the funds are deploying against AGI-by-2027 is a market in which the median competitor for AI-infrastructure hires has already priced the timeline. The operator who pretends the calendar is irrelevant is, in that market, the operator offering market rate for talent that everyone else is bidding a premium for.

The honest summary is that Aschenbrenner is now the calibration check, not the prediction. If your AI plan reads consistent with the essay's calendar you are either bullish-aligned or unaware; if it reads against, you have to say which assumption you are rejecting. Either is fine. Refusing to say is not.

The essay does the work of forcing the assumption-set into daylight, regardless of whether the timeline holds. That is, of course, the most useful thing a forecast can do.

—TJ