The chatbot was an employee. Air Canada paid $650 for that ruling.

TL;DR

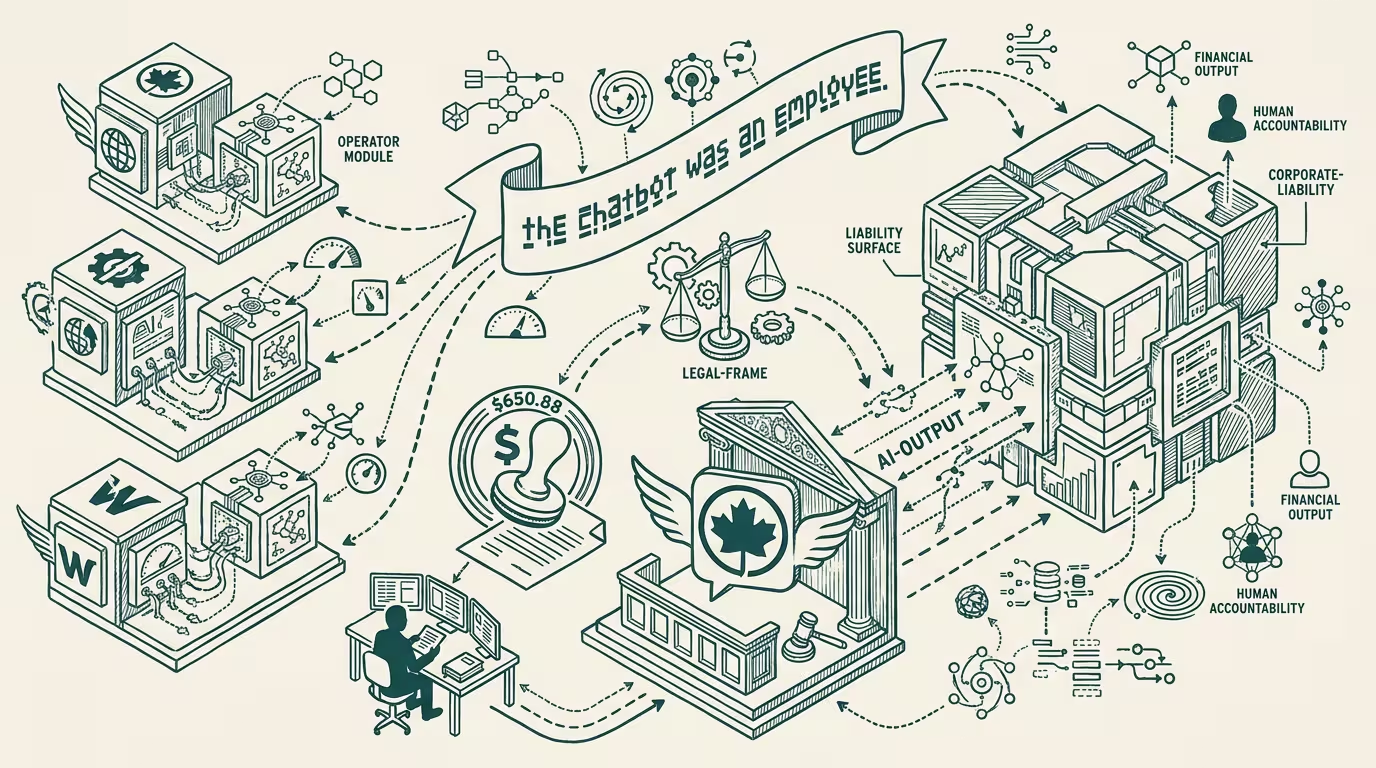

The BC Civil Resolution Tribunal held Air Canada liable for misinformation produced by its website chatbot: the first Western precedent that companies own their AI's outputs. The operator read: the 'separate legal entity' defense is dead, and your deployed-LLM liability surface is the same as your front-line employee liability surface. Three places the operator playbook has to change.

In February 2024 the British Columbia Civil Resolution Tribunal held Air Canada liable for misinformation produced by its website chatbot. The amount was $650.88, which is roughly the price of a discounted bereavement fare. The damages covered the gap between the regular fare the passenger paid and the bereavement fare the chatbot had led him to expect. The chatbot had told the passenger he could buy a regular fare and apply for a bereavement-rate refund afterward; the airline's actual policy required that the bereavement rate be claimed at booking. The chatbot was wrong. The passenger acted on it. The airline argued, with a straight face, that the chatbot was a "separate legal entity" whose statements the airline could not be held responsible for. The Tribunal disagreed.

It was a small ruling about a small amount, which is why the trade press did not give it much oxygen. The operator-class implication is large: companies own their AI's outputs. That is the first Western precedent on a question every operator deploying an LLM-customer-facing-anything has been quietly hoping would never get answered. It got answered for $650.88.

The reasoning is structurally interesting. Air Canada's "separate legal entity" defense is the natural defense: the chatbot is software, software is tooling, tooling does what it does, and the operator is a downstream user, not an upstream author. That structure works for, e.g., a calculator on a website. It does not work for a chatbot that provides bespoke responses to customer questions, which is to say, the whole reason a chatbot exists. The chatbot was deployed by Air Canada, branded as Air Canada, and presented to the customer as part of the Air Canada experience. The Tribunal's reasoning: a customer interacting with that interface has every reason to believe the responses represent Air Canada policy, and the airline cannot disclaim responsibility by pointing at the model card.

That is the operator-tier lesson. Your deployed-LLM liability surface is the same as your front-line-employee liability surface. If a human Air Canada call-center agent had given the same incorrect bereavement-fare advice, Air Canada would have absorbed the $650 and moved on. The Tribunal applied the same standard to the chatbot. That should have been the obvious outcome. It became the obvious outcome only when the case actually got tried.

Three places the operator playbook has to change.

First, the routing. Operator-deployed LLMs need to know which questions to refuse to answer because they are policy questions, and they need to know that the policy questions extend further than any vendor's content-moderation rubric anticipates. "Send me to a human if you're not sure" routing is going to become more conservative everywhere.
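A minimal sketch of what more conservative routing could look like, assuming a hypothetical list of policy topics and a hypothetical confidence score supplied by the deployment. None of these names come from the ruling or from any vendor's API; the point is only that policy questions get escalated before the model is allowed to answer.

```python
import re
from dataclasses import dataclass

# Hypothetical policy topics. A real deployment would maintain this list
# with legal and support teams, and it would be much longer.
POLICY_PATTERNS = [
    r"\brefund",
    r"\bbereavement\b",
    r"\bcancel",
    r"\bcompensation\b",
    r"\bwarranty\b",
    r"\bfees?\b",
]


@dataclass
class Route:
    target: str  # "human" or "model"
    reason: str


def route_question(question: str, model_confidence: float,
                   threshold: float = 0.9) -> Route:
    """Escalate policy questions and low-confidence answers to a human."""
    text = question.lower()
    # Policy topics are escalated regardless of how confident the model is.
    for pattern in POLICY_PATTERNS:
        if re.search(pattern, text):
            return Route("human", f"policy topic matched: {pattern}")
    # "Send me to a human if you're not sure", with a deliberately high bar.
    if model_confidence < threshold:
        return Route("human", "confidence below threshold")
    return Route("model", "no policy topic matched")
```

Under these assumptions, a question like "Can I get a bereavement refund after booking?" goes to a human even when the model reports high confidence, which is exactly the case that cost Air Canada the ruling.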

Second, the audit trail. Every operator who deployed a chatbot in 2023 needs a log of what the chatbot said, to whom, and when. The Tribunal's analysis turned on a screenshot of the actual conversation, which cannot have pleased Air Canada's counsel. The next case will turn on the same kind of evidence; the operator without the log loses by default.
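One way to sketch such a log, assuming an append-only JSON Lines file and hypothetical field names. The hash chaining is an added assumption, not something the ruling requires: it makes after-the-fact edits to a record detectable, which matters if the log is ever offered as evidence.

```python
import hashlib
import json
from datetime import datetime, timezone

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first record


def _digest(body: dict) -> str:
    # Canonical JSON (sorted keys) so the same body always hashes the same way.
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode("utf-8")).hexdigest()


def make_record(session_id, user_message, bot_reply,
                prev_hash=GENESIS_HASH, timestamp=None):
    """Build one log entry: what was said, to whom, and when."""
    body = {
        "timestamp": timestamp or datetime.now(timezone.utc).isoformat(),
        "session_id": session_id,
        "user_message": user_message,
        "bot_reply": bot_reply,
        "prev_hash": prev_hash,  # links this record to the one before it
    }
    return {**body, "hash": _digest(body)}


def append_record(path, record):
    """Append one record as a single JSON line; existing lines are never rewritten."""
    with open(path, "a", encoding="utf-8") as f:
        f.write(json.dumps(record, sort_keys=True) + "\n")


def verify_chain(records):
    """Return True if no record was altered, reordered, or dropped mid-chain."""
    prev = GENESIS_HASH
    for rec in records:
        body = {k: v for k, v in rec.items() if k != "hash"}
        if rec["prev_hash"] != prev or rec["hash"] != _digest(body):
            return False
        prev = rec["hash"]
    return True
```

Each new record takes the previous record's `hash` as its `prev_hash`, so `verify_chain` fails if any earlier reply is edited later. Retention periods and access controls are separate questions this sketch does not address.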

Third, the contract with the LLM vendor. As of early 2024, vendor commercial agreements almost universally disclaim responsibility for outputs. That disclaimer worked when the LLM was a developer tool. It does not work when the LLM is a customer-facing employee, and operators' general counsel will start pricing the indemnification gap into procurement decisions within twelve months.

The thing that crosses pillars is operator-level. Every category that has been quietly running an LLM-as-customer-service deployment is exposed: airlines (Air Canada is the precedent, every other carrier is next), retailers (the chatbot-on-the-product-page model), banks, telcos, healthcare providers running symptom-checkers, insurance companies running claims-adjuster chatbots. The category that learns the lesson first is the category that does not get the second case.

The Tribunal's ruling lands at $650.88. The operator implication is six to seven figures per misstatement, depending on the deployment. The asymmetry is the ruling.

—TJ