The first wave of agent failures will look like infrastructure failures.

TL;DR

When the first wave of agent-stack failures hits the news, the symptoms will read as ordinary incidents (latency, outage, retry storm) rather than 'AI broke.' This post names the four substrate-level failure modes the post-mortems will eventually settle on, and what an operator running production agents should be checking for now.

I have been running agents in production long enough to know what their first wave of failures is going to look like, and the news cycle is not going to read it correctly. The first wave is going to look like infrastructure failures. Latency spikes. Outages. Retry storms. Database connection pool exhaustion. The post-mortems are going to read like SRE post-mortems, the symptoms are going to map to ordinary distributed-systems failure modes, and the trade press is going to write the headlines as "AWS outage took down agent-X" or "Anthropic API latency caused agent-Y problems."

That framing is going to be technically accurate and operationally wrong.

Technically accurate because the symptoms really will be infrastructure-shaped. Operationally wrong because the substrate-level failure mode is something different. What the post-mortems eventually settle on is going to take three to five quarters to surface, and in the meantime operators who read the early failures as "AI is unreliable" will draw the wrong conclusions about which failure patterns to defend against.

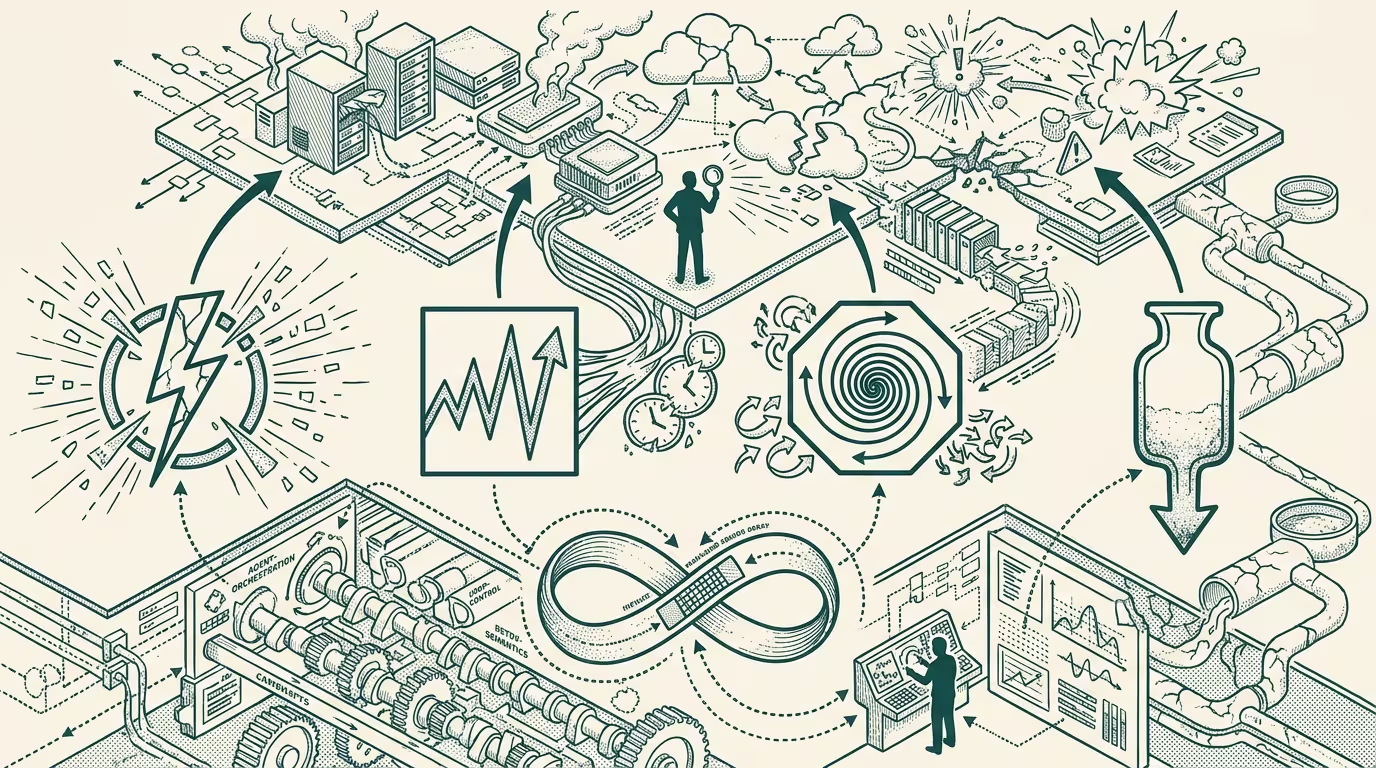

Four substrate-level failure modes are worth naming now.

First, the persistence cliff. Agent runtimes that did not invest in cross-call state persistence are going to fail when a model deprecation cycle lands. The agent is mid-workflow, the model gets deprecated, the runtime tries to fail over to a new model, the state that was implicit in the old model's context window is gone, and the workflow tries to restart from a checkpoint that does not exist. The post-mortem will read "model API returned errors during deprecation window." The actual failure was that the runtime did not persist enough state to survive the model layer changing underneath it. This is the failure mode that hits hardest in 2025, when the first round of major model deprecations actually happens.
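To make the persistence boundary concrete, here is a minimal sketch of a runtime that writes workflow state to durable storage after every step, so a replacement model can pick up where the old one stopped. Everything in it is hypothetical: `WorkflowState`, `CheckpointStore`, `resume_on_new_model`, and the model names `model-a` and `model-b` are illustrations, and a real deployment would likely use a database rather than local JSON files.

```python
import json
import tempfile
from dataclasses import asdict, dataclass, field
from pathlib import Path


@dataclass
class WorkflowState:
    """Everything a replacement model would need to resume the workflow."""
    workflow_id: str
    goal: str
    completed_steps: list = field(default_factory=list)  # [{"name": ..., "result": ...}]
    model: str = "model-a"  # hypothetical model identifier


class CheckpointStore:
    """File-backed checkpoint store (an assumption made for the sketch)."""

    def __init__(self, root: Path):
        self.root = root
        self.root.mkdir(parents=True, exist_ok=True)

    def save(self, state: WorkflowState) -> None:
        path = self.root / f"{state.workflow_id}.json"
        tmp = path.with_suffix(".tmp")
        tmp.write_text(json.dumps(asdict(state)))
        tmp.replace(path)  # rename over the old file so readers never see a partial write

    def load(self, workflow_id: str) -> WorkflowState:
        path = self.root / f"{workflow_id}.json"
        return WorkflowState(**json.loads(path.read_text()))


def resume_on_new_model(store: CheckpointStore, workflow_id: str, new_model: str) -> WorkflowState:
    """Reload persisted state and rebind it to a different model."""
    state = store.load(workflow_id)
    state.model = new_model
    store.save(state)
    return state


# Usage: checkpoint after a step, then fail over to a new model.
store = CheckpointStore(Path(tempfile.mkdtemp()))
state = WorkflowState("wf-1", "reconcile invoices")
state.completed_steps.append({"name": "fetch", "result": "42 invoices"})
store.save(state)
resumed = resume_on_new_model(store, "wf-1", "model-b")
```

The point of the design is that nothing the workflow needs lives only in the model's context: the goal and the results of completed steps are all on disk, so the failover has a checkpoint that actually exists.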

Second, the tool-use blast radius. An agent that can call tools is an agent that can, in the failure case, call the wrong tool, call the right tool with the wrong arguments, or call the right tool with the right arguments at the wrong time. None of those are AI failures in the traditional sense. They are authorization-and-scoping failures. The post-mortem will read "agent deleted records it should not have had access to," and the framing will be that the AI made a mistake. The actual failure is that the tool-use surface was scoped too broadly relative to the agent's reliability, and the operator did not enforce a least-privilege boundary. This is the failure mode that drives the first wave of regulatory questions about agent autonomy.
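A least-privilege boundary can be sketched as a gateway that every tool call has to pass through, with scopes granted by the operator rather than inferred from the agent's behavior. The names here (`ToolGateway`, `Tool`, `ScopeError`, and scope strings such as `records:read`) are hypothetical and chosen only for illustration.

```python
from dataclasses import dataclass
from typing import Callable, Iterable


class ScopeError(PermissionError):
    """Raised when the agent asks for a tool it was not granted."""


@dataclass(frozen=True)
class Tool:
    name: str
    required_scope: str
    fn: Callable[..., object]


class ToolGateway:
    """Single choke point for tool calls; the agent never holds raw tool handles."""

    def __init__(self, tools: Iterable[Tool], granted_scopes: Iterable[str]):
        self._tools = {tool.name: tool for tool in tools}
        self._granted = frozenset(granted_scopes)

    def call(self, name: str, **kwargs):
        tool = self._tools.get(name)
        if tool is None:
            raise ScopeError(f"unknown tool: {name}")
        if tool.required_scope not in self._granted:
            raise ScopeError(f"{name} requires scope {tool.required_scope!r}")
        return tool.fn(**kwargs)


# Usage: the agent is granted read access only, so a delete is refused.
records = {"r1": "invoice 1001"}
gateway = ToolGateway(
    tools=[
        Tool("read_record", "records:read", lambda record_id: records[record_id]),
        Tool("delete_record", "records:delete", lambda record_id: records.pop(record_id)),
    ],
    granted_scopes={"records:read"},
)
gateway.call("read_record", record_id="r1")  # allowed
try:
    gateway.call("delete_record", record_id="r1")
except ScopeError:
    pass  # refused before the tool function runs; the record is untouched
```

The check happens before the tool function runs, so a wrong call from the agent fails closed instead of relying on the agent's good behavior.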

Third, the cascading-context corruption. In multi-step workflows where each step's output feeds the next step's input, one bad step can propagate through the rest of the chain. The human reviewing the final output cannot tell that the third step was wrong, because the fourth and fifth steps incorporated the third's error and rationalized around it. The post-mortem will read "agent produced low-quality output." The actual failure is that the runtime did not validate intermediate outputs against a sanity check, and the error cascaded silently. This is the failure mode that hits coding agents and research agents hardest.
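One way to picture intermediate-output validation is a pipeline runner that pairs every step with a check and refuses to hand a failing output to the next step. This is a sketch under assumptions: `run_pipeline`, `StepValidationError`, and the toy steps are hypothetical, and real checks would be domain-specific (schema validation, unit tests for generated code, citation lookups for research output).

```python
from typing import Callable, Sequence, Tuple

# A step is (name, run, is_valid): run transforms the value, is_valid checks the result.
Step = Tuple[str, Callable[[object], object], Callable[[object], bool]]


class StepValidationError(RuntimeError):
    """Raised when a step's output fails its check; records where the chain stopped."""

    def __init__(self, step_name: str, output: object):
        super().__init__(f"step {step_name!r} failed validation")
        self.step_name = step_name
        self.output = output


def run_pipeline(steps: Sequence[Step], initial: object) -> object:
    value = initial
    for name, run, is_valid in steps:
        value = run(value)
        if not is_valid(value):
            # Stop here so later steps cannot build on the bad output.
            raise StepValidationError(name, value)
    return value


# Usage: a toy three-step chain with one sanity check per step.
steps = [
    ("parse", lambda text: [int(x) for x in text.split(",")], lambda v: len(v) > 0),
    ("total", lambda nums: sum(nums), lambda v: v >= 0),
    ("report", lambda total: f"total={total}", lambda v: v.startswith("total=")),
]
print(run_pipeline(steps, "3,4,5"))  # prints total=12
```

With the input `"3,-9,5"` the `total` step produces a negative number, the check fails, and the `report` step never runs, so the error surfaces at the step that caused it rather than in the final output.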

Fourth, the silent-degradation pattern. An agent's effectiveness is, in production, measured by some downstream metric (task completion rate, accuracy on a benchmark, customer satisfaction). When the metric drifts, it usually drifts gradually: the underlying model has been updated, the data the agent reads has shifted, the user population has changed, or some upstream API has subtly changed its response shape. The post-mortem will read "agent performance regressed in Q3" and the regression will be attributed to the model update. The actual failure is that the agent's evaluation harness was not running continuously against a stable benchmark, and the regression was visible in the metrics for six weeks before anyone caught it. This is the failure mode that hits the largest number of agent deployments and gets attributed to "AI quality issues" in the trade press.
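The core of a continuous-evaluation check is small: score the agent on the same fixed benchmark on a schedule, and compare a recent window against a baseline window. The sketch below assumes scores are fractions between 0 and 1 and uses a 5% relative drop as the alert threshold; `detect_regression` and that threshold are illustrative choices, and a production harness would add scheduling, storage, and some treatment of statistical noise.

```python
from statistics import mean
from typing import Optional, Sequence


def detect_regression(
    baseline_scores: Sequence[float],
    recent_scores: Sequence[float],
    max_relative_drop: float = 0.05,  # assumed alert threshold
) -> Optional[float]:
    """Return the relative drop if it meets the threshold, otherwise None."""
    if not baseline_scores or not recent_scores:
        raise ValueError("need at least one score in each window")
    baseline = mean(baseline_scores)
    if baseline <= 0:
        raise ValueError("baseline mean must be positive")
    drop = (baseline - mean(recent_scores)) / baseline
    return drop if drop >= max_relative_drop else None


# Usage: daily benchmark scores for last month's baseline versus this week.
baseline_window = [0.90, 0.92, 0.91]
this_week = [0.85, 0.84, 0.86]
drop = detect_regression(baseline_window, this_week)
if drop is not None:
    print(f"benchmark regressed by {drop:.1%}")
```

Because the benchmark is held fixed, a drop points at something that changed underneath the agent (model, data, or an upstream API) rather than at a change in what is being measured.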

What the post-mortems eventually settle on, after three to five quarters of these failures hitting in production at scale, is that the agent-runtime layer needs the same kind of architectural discipline that distributed-systems engineering has been applying to cloud infrastructure for two decades. Persistence boundaries. Least-privilege scoping. Intermediate-output validation. Continuous-evaluation harnesses. None of those are AI-specific concepts. They are infrastructure concepts, applied to a substrate that did not yet have them in 2024.

The implication for operators is that anyone running production agents in 2024 should be auditing for those four failure modes now, before the news cycle catches up. The persistence question is: what state does my agent need to survive a model deprecation, and is it actually stored somewhere that will survive? The tool-use question is: what is the smallest authorization scope my agent needs, and have I enforced that scope, or am I trusting the agent's good behavior? The validation question is: when my agent produces an intermediate output, what is the check that catches the bad case before it propagates? The evaluation question is: is my benchmark running continuously, and would I notice a 5% regression within a week of it landing?

The operators who answer those four questions in 2024 are the operators whose agent stacks survive the failure wave that hits in 2025-2026. The operators who answer them after the failure wave are the operators rebuilding their agent stacks in 2026-2027 against a regulatory environment that has, by then, codified the answers as compliance requirements.

The framing the trade press will adopt is that AI failures are AI failures. The framing the operator should adopt is that the first wave of agent failures is an infrastructure-engineering problem, and the operators who treat it that way are the operators whose agents are running in 2027 while their competitors' are not.

The receipts on this forecast will, of course, roll in across 2025 and 2026. They are going to read like SRE post-mortems. They are going to be SRE post-mortems. The operator who recognizes the substrate-level pattern early is the operator who is not in any of them.

—TJ