The 'long-context window' was always a transition primitive.

TL;DR

The million-token context window will look in retrospect like the twenty-megapixel sensor — a number that mattered for two years and stopped mattering once the rest of the stack caught up. Three primitives are taking its place: durable memory, structured retrieval, and tool-resident state. Each does what context length pretended to do, and each is what the model layer should have been building all along. The window will keep getting longer for marketing reasons. It will stop being the metric that decides products.

The million-token context window is the twenty-megapixel sensor. It is a transition primitive that mattered for two years, drove a brief race, sold a brief generation of products, and stopped mattering once the rest of the stack caught up to what the number was a proxy for. The window will keep getting longer for marketing reasons. It will stop being the metric that decides products.

The reason the context window mattered at all is that the model layer in 2023 and 2024 had no other place to put the things a real workload requires the model to remember. The model could not durably remember anything between turns. The retrieval layer was either nonexistent or hand-rolled. The tools the model could call did not hold state. Everything that needed to persist into the next reasoning step had to be carried in the prompt, and the prompt had a length limit, and the length limit decided what kinds of work the model could even attempt. So the lab that shipped the longer window unlocked a class of workloads the shorter window could not host. The window was the only address space the model had.
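Here is a minimal sketch of the window-as-address-space pattern. It assumes whitespace-separated words as a stand-in for tokens and a hypothetical `assemble_prompt` helper; real tokenizers and prompt builders differ. The point is only the shape: every piece of carried-over state competes for one fixed budget, and whatever does not fit is invisible on that turn.

```python
from typing import List


def count_tokens(text: str) -> int:
    # Crude stand-in: whitespace-separated words instead of real tokenizer output.
    return len(text.split())


def assemble_prompt(state_items: List[str], question: str, budget: int) -> str:
    """Pack as much carried-over state as fits, newest first, then the question."""
    used = count_tokens(question)
    kept: List[str] = []
    for item in reversed(state_items):  # newest items get priority
        cost = count_tokens(item)
        if used + cost > budget:
            break  # the window is full; older state is not visible this turn
        kept.append(item)
        used += cost
    kept.reverse()  # restore chronological order
    return "\n".join(kept + [question])


history = [
    "user prefers aisle seats",          # oldest
    "meeting moved to Tuesday morning",
    "invoice 42 is unpaid",              # newest
]
prompt = assemble_prompt(history, "Book my flight.", budget=8)
print(prompt)
```

With a budget of eight words, the oldest item (the aisle-seat preference) never reaches the model, even though it is exactly what the question needs.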

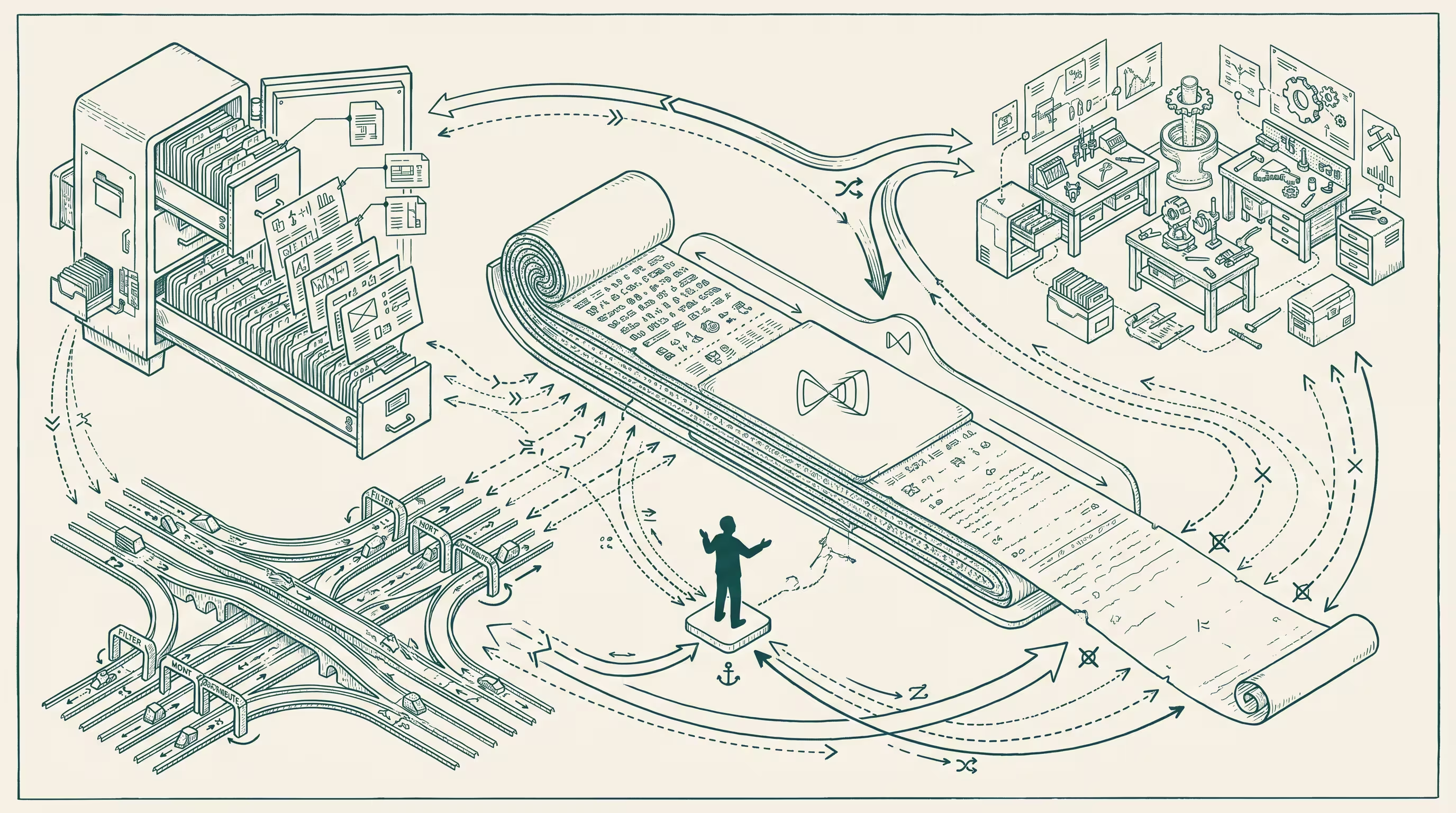

That window-as-address-space arrangement was a transitional shape, and the transition is mostly over. Three primitives are taking the window's job. Each does what the window pretended to do, and each does it better the moment the rest of the stack supports it.

The first primitive is durable memory. Not a chat-history transcript dumped into the prompt; a model-side store the model writes to and reads from across sessions, the way a knowledge worker writes to a notebook and reads from the notebook later. The store has a schema. The store has retention rules. The store has access controls scoped to the principal the model is acting on behalf of. When the model needs to remember that the user prefers aisle seats and Tuesday-morning meetings, the model does not page back through the last six conversations to reconstruct the preference. The model reads the preference. The window does not have to be long enough to hold the user's life because the model layer has somewhere durable to put the life.
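A minimal sketch of what "schema, retention rules, and access controls" could mean in code. Every name in it (`MemoryStore`, `MemoryRecord`, the two schema keys, TTL-based retention) is hypothetical and chosen for illustration; no lab's memory API is implied, and a real store would persist to disk and authenticate principals rather than trust a string.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone
from typing import Dict, Optional, Tuple


@dataclass(frozen=True)
class MemoryRecord:
    principal: str        # whose memory this is: the access-control scope
    key: str              # field name, checked against the store's schema
    value: str
    written_at: datetime
    ttl: timedelta        # retention rule for this record


class MemoryStore:
    """Hypothetical durable memory: schema'd keys, retention, principal scoping."""

    SCHEMA = {"seat_preference", "meeting_preference"}  # allowed keys

    def __init__(self) -> None:
        self._records: Dict[Tuple[str, str], MemoryRecord] = {}

    def write(self, principal: str, key: str, value: str,
              ttl: timedelta, now: datetime) -> None:
        if key not in self.SCHEMA:
            raise KeyError(f"{key!r} is not in the memory schema")
        self._records[(principal, key)] = MemoryRecord(principal, key, value, now, ttl)

    def read(self, acting_for: str, principal: str, key: str,
             now: datetime) -> Optional[str]:
        if acting_for != principal:
            raise PermissionError("a model may only read the memory of the principal it acts for")
        record = self._records.get((principal, key))
        if record is None or now - record.written_at > record.ttl:
            return None  # never written, or expired under the retention rule
        return record.value


t0 = datetime(2025, 1, 6, tzinfo=timezone.utc)
store = MemoryStore()
store.write("alice", "seat_preference", "aisle", ttl=timedelta(days=365), now=t0)
store.write("alice", "meeting_preference", "Tuesday morning", ttl=timedelta(days=90), now=t0)

# A later session reads the preference directly instead of re-reading transcripts.
print(store.read("alice", "alice", "seat_preference", now=t0 + timedelta(days=30)))  # aisle
```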

The second primitive is structured retrieval. Not retrieval-augmented generation as it was first practiced, where the system stuffs five chunks of source documents into the prompt and hopes the model uses the right one. Structured retrieval is closer to a database query than a search query. The model knows what shape the answer should take, knows which collection holds the source-of-truth for that shape, queries the collection with a typed predicate, and gets back a typed result that does not have to be parsed back out of free text. The query and the result are first-class objects with a schema. The window does not have to be long enough to hold the candidate documents because the retrieval layer returns the answer rather than the haystack.
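A sketch of the difference, under stated assumptions: a hypothetical `Catalog` holds named collections of typed records, and a hypothetical `Query` names the collection and carries a predicate over that record type. Python does not enforce type hints at runtime, so "typed" here means the result comes back as `Flight` objects rather than text chunks; a real system would more likely push the predicate down into a database query.

```python
from dataclasses import dataclass
from typing import Callable, Dict, Generic, List, TypeVar

T = TypeVar("T")


@dataclass(frozen=True)
class Flight:
    number: str
    origin: str
    destination: str
    departs_hour: int  # 0-23, local time


@dataclass(frozen=True)
class Query(Generic[T]):
    collection: str                 # which source-of-truth collection to ask
    predicate: Callable[[T], bool]  # typed filter over records of type T


class Catalog:
    """Hypothetical retrieval layer: named collections of typed records."""

    def __init__(self) -> None:
        self._collections: Dict[str, list] = {}

    def register(self, name: str, records: List[T]) -> None:
        self._collections[name] = list(records)

    def run(self, query: Query[T]) -> List[T]:
        # Returns records the caller can use directly, not text to re-parse.
        return [r for r in self._collections[query.collection] if query.predicate(r)]


catalog = Catalog()
catalog.register("flights", [
    Flight("XY101", "SFO", "JFK", 7),
    Flight("XY202", "SFO", "JFK", 18),
    Flight("XY303", "SFO", "BOS", 9),
])
morning_to_jfk = Query(
    collection="flights",
    predicate=lambda f: f.destination == "JFK" and f.departs_hour < 12,
)
print(catalog.run(morning_to_jfk))
# [Flight(number='XY101', origin='SFO', destination='JFK', departs_hour=7)]
```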

The third primitive is tool-resident state. The agent is using a calendar tool, a payments tool, a code-execution tool, a browser tool. Each tool has a session, the session has a state, and the state is owned by the tool, not by the prompt. When the agent is two steps into a multi-step transaction with a payments rail, the rail knows where the agent is. The agent does not need the prompt to recapitulate the whole transaction history each turn. The agent asks the tool for its state and proceeds. The window does not have to be long enough to hold the working memory of a long-running task because the tools the task is running through hold their own working memory.
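A minimal sketch of tool-resident state, assuming a hypothetical `PaymentsTool` with a three-step session (created, authorized, captured). Real payment rails have richer state machines; the sketch only shows that the session id is all the agent needs to carry between turns, because the tool answers "where are we?" itself.

```python
import uuid
from enum import Enum
from typing import Dict


class Step(Enum):
    CREATED = "created"
    AUTHORIZED = "authorized"
    CAPTURED = "captured"


class PaymentsTool:
    """Hypothetical payments tool that owns the state of each transaction session."""

    _NEXT = {Step.CREATED: Step.AUTHORIZED, Step.AUTHORIZED: Step.CAPTURED}

    def __init__(self) -> None:
        self._sessions: Dict[str, dict] = {}

    def open_session(self, amount_cents: int) -> str:
        session_id = str(uuid.uuid4())
        self._sessions[session_id] = {"amount_cents": amount_cents, "step": Step.CREATED}
        return session_id

    def state(self, session_id: str) -> dict:
        # The agent asks the tool where the transaction stands; returns a copy.
        return dict(self._sessions[session_id])

    def advance(self, session_id: str) -> Step:
        session = self._sessions[session_id]
        if session["step"] not in self._NEXT:
            raise ValueError("transaction already captured")
        session["step"] = self._NEXT[session["step"]]
        return session["step"]


tool = PaymentsTool()
sid = tool.open_session(amount_cents=12_500)
tool.advance(sid)  # turn 1: authorize
# Many turns later the prompt only needs to carry `sid`, not the history.
print(tool.state(sid)["step"])  # Step.AUTHORIZED
```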

I want to acknowledge the obvious objection. The context window is not going away. Coding agents that read and modify large source trees benefit from a long window. Document-analysis workloads that need to reason across hundreds of pages of evidence benefit from a long window. Conversational interfaces that want to be forgiving about when the user changes the subject benefit from a long window. The window has a tail of legitimate uses, and the tail is not short.

The forecast is not that the window stops existing. The forecast is that the window stops being the metric. In 2024 the labs raced on context length because the length was a proxy for what kinds of workloads the model could host. In 2026 and 2027, the labs that win will race on memory quality, retrieval typing, and tool-state coherence — three primitives that were under-built for a stretch because the window let the model paper over the gaps. Once the primitives are real, the window's job is done.

The product implication is that the buyer should stop reading the context-length number on the model card and start reading the memory layer's schema, the retrieval layer's query interface, and the tool layer's state contract. The model with the second-longest window and the best memory store is going to host a deeper set of workloads than the model with the longest window and no durable memory. The benchmark that measures cross-session preference adherence is more predictive than the benchmark that measures needle-in-a-haystack recall at one million tokens. The buyer who optimizes for the right metric ships products that compound.

The architectural implication is that the model layer is splitting into a model-runtime (does the inference), a memory-runtime (does the persistence), a retrieval-runtime (does the structured fetch), and a tool-runtime (does the side-effects). Each of those will be a separately purchasable primitive with a separately measurable quality profile. The labs that ship one bundle that does all four are going to compete with the labs that ship best-of-breed primitives glued together by a competent agent layer, and the competition will be decided on the quality of each individual primitive rather than the size of any single number.
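One way to picture the split, as a sketch rather than a proposed standard: each runtime is a `typing.Protocol`, and the agent layer depends only on those interfaces, so any of the four can come from a different vendor. The protocol names, method signatures, and the in-memory fakes at the bottom are all hypothetical.

```python
from typing import Dict, List, Optional, Protocol, Tuple


class ModelRuntime(Protocol):
    def generate(self, prompt: str) -> str: ...


class MemoryRuntime(Protocol):
    def read(self, principal: str, key: str) -> Optional[str]: ...
    def write(self, principal: str, key: str, value: str) -> None: ...


class RetrievalRuntime(Protocol):
    def query(self, collection: str, **filters: str) -> List[dict]: ...


class ToolRuntime(Protocol):
    def call(self, tool: str, action: str, **args: str) -> dict: ...


class Agent:
    """Hypothetical agent layer gluing four separately supplied runtimes."""

    def __init__(self, model: ModelRuntime, memory: MemoryRuntime,
                 retrieval: RetrievalRuntime, tools: ToolRuntime) -> None:
        self.model, self.memory = model, memory
        self.retrieval, self.tools = retrieval, tools

    def book_flight(self, principal: str, destination: str) -> dict:
        seat = self.memory.read(principal, "seat_preference") or "any"    # persistence
        flights = self.retrieval.query("flights", destination=destination)  # structured fetch
        choice = self.model.generate(f"Pick one of {flights} for a {seat} seat")  # inference
        return self.tools.call("booking", "reserve", flight=choice, seat=seat)    # side effect


# In-memory fakes standing in for real vendors.
class FixedModel:
    def generate(self, prompt: str) -> str:
        return "XY101"  # stand-in for real inference


class DictMemory:
    def __init__(self) -> None:
        self._data: Dict[Tuple[str, str], str] = {}

    def read(self, principal: str, key: str) -> Optional[str]:
        return self._data.get((principal, key))

    def write(self, principal: str, key: str, value: str) -> None:
        self._data[(principal, key)] = value


class ListRetrieval:
    def __init__(self, rows: List[dict]) -> None:
        self._rows = rows  # single collection; the name is ignored in this fake

    def query(self, collection: str, **filters: str) -> List[dict]:
        return [r for r in self._rows if all(r.get(k) == v for k, v in filters.items())]


class RecordingTools:
    def call(self, tool: str, action: str, **args: str) -> dict:
        return {"tool": tool, "action": action, **args}


memory = DictMemory()
memory.write("alice", "seat_preference", "aisle")
agent = Agent(FixedModel(), memory,
              ListRetrieval([{"number": "XY101", "destination": "JFK"}]),
              RecordingTools())
print(agent.book_flight("alice", "JFK"))
# {'tool': 'booking', 'action': 'reserve', 'flight': 'XY101', 'seat': 'aisle'}
```

Because `Agent` only sees the protocols, measuring one primitive separately becomes a matter of swapping one fake for a real backend and holding the other three fixed.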

The piece I want to write a year from now is the one that says which primitive turned out to be the hardest to get right. My current bet is the memory runtime. The retrieval pattern has decades of database research behind it. The tool-state pattern has decades of distributed-systems research behind it. The memory pattern has months of ad-hoc experimentation behind it, and the part of memory that matters most — knowing what to remember, what to forget, how to update a stored belief when new evidence arrives, how to merge memories from concurrent sessions without losing the principal's intent — does not have a clean precedent in the existing literature. The lab that gets memory right is the lab that turns the post-window architecture into product reality.
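To make the merge problem concrete: the obvious baseline borrowed from distributed systems is a last-writer-wins register, which resolves a conflict by timestamp alone. The sketch below assumes each session records a value, a logical timestamp, and a scope per key (all hypothetical fields). The merge ignores scope, so a one-off request can overwrite a standing preference. It illustrates the failure, not a fix.

```python
from dataclasses import dataclass
from typing import Dict


@dataclass(frozen=True)
class Belief:
    value: str
    timestamp: int  # logical clock of the session that wrote it
    scope: str      # e.g. "always" or "this trip"; the merge below ignores it


def merge_lww(a: Dict[str, Belief], b: Dict[str, Belief]) -> Dict[str, Belief]:
    """Last-writer-wins: per key, keep the belief with the later timestamp.

    On a timestamp tie the belief from `a` is kept.
    """
    merged = dict(a)
    for key, belief in b.items():
        if key not in merged or belief.timestamp > merged[key].timestamp:
            merged[key] = belief
    return merged


session_a = {"seat_preference": Belief("aisle", timestamp=10, scope="always")}
session_b = {"seat_preference": Belief("window", timestamp=11, scope="this trip")}
merged = merge_lww(session_a, session_b)
# The one-off request overwrites the standing preference.
print(merged["seat_preference"].value)  # window
```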

The window was the right primitive for the moment when the model had nowhere else to put anything. The moment is ending. The number on the model card was always a stand-in for the architecture that comes after. The architecture is what the model layer should have been building all along, and the next two years are the years when it gets built.

—TJ