On the medical-school admissions algorithm reform.

The post-affirmative-action reform of US medical-school admissions algorithms in late 2024 produced a class of changes that read as substantial in the trade press and looked thin in the early data. The schools that ran the most ambitious reforms got the most coverage and the smallest measurable effect. The schools that left their algorithms mostly intact and changed two upstream variables got the largest measurable effect. The shape of the reform is the inverse of what most observers expected, and it is the second of those two upstream variables that explains it.

The most-covered reforms were the algorithm rewrites. Several schools, including some prominent state systems, took the post-ruling moment as an opportunity to overhaul the weighting of their admissions rubrics: lower weight on standardized test scores, higher weight on letters of recommendation, higher weight on a structured interview score, higher weight on socioeconomic-context factors that the legal reading of the ruling permitted as proxies. The rewrites were defensible. The rewrites were also thoroughly relitigated in the public conversation, with each school's choices treated as a referendum on what diversity in medical education should mean. The trade press loved them.

What the trade press did not focus on was that the rewrites produced small effect sizes in the first cycle of post-ruling admissions data. The composition of the entering classes at the schools that ran the most ambitious algorithm rewrites was, on the dimensions that mattered most to those schools, only marginally different from what the old algorithm would have produced on the same applicant pool. The rewrites moved a few percentage points. They did not move the distribution.

The schools that moved the distribution did not rewrite the algorithm. They changed two upstream variables and kept the algorithm largely intact. The first upstream variable was the candidate pool: aggressive recruiting in geographic and institutional contexts where the school had been under-recruiting for a decade, paired with funded application-fee waivers that removed the cost barrier for applicants for whom the cost was actually a barrier. The second upstream variable was the rubric for what counted as relevant clinical experience, which was broadened from "clinical experience that maps to a traditional pre-med pathway" to "clinical experience that maps to any service-delivery context where the candidate spent enough hours to know what care delivery actually looks like." That second variable is the one that did the work.

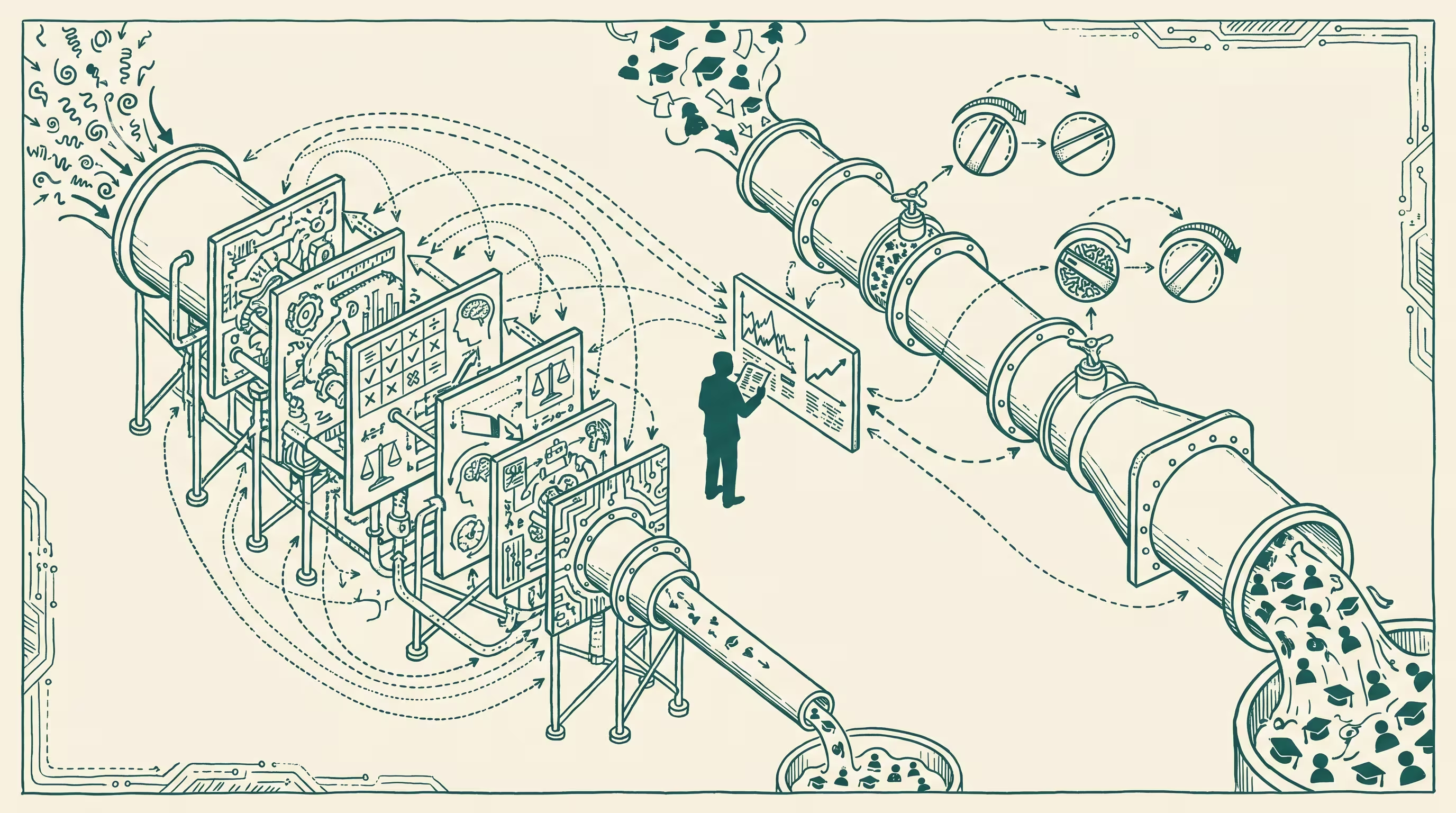

Broadening the clinical-experience rubric mattered for a structural reason that is not specific to medical school. Selection algorithms, when run on populations with similar inputs, produce outputs distributed similarly to the inputs. The output diversity is upper-bounded by the input diversity. Rewriting the algorithm without changing the inputs produces the rewrites' modest effect sizes. Changing the inputs without rewriting the algorithm produces a different distribution of outputs because the algorithm now has different things to choose between. The candidates who came through community-health programs and rural-hospital aide pipelines and EMS routes and pharmacy-tech roles were always there. They were screened out at the rubric step and never reached the algorithm. Letting them reach the algorithm was the change that mattered.
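A toy simulation can make the structural point concrete. The sketch below is illustrative only: the candidate pool, the scores, both weight settings, the 500-hour cutoff standing in for "enough hours", and the names `narrow_filter`, `broad_filter`, and `admit` are all invented here, not any school's actual rubric. It models admissions as two stages, a rubric filter followed by a weighted top-k ranking, and runs three configurations: old filter with old weights, old filter with rewritten weights, and broadened filter with the original weights.

```python
from dataclasses import dataclass
from typing import Callable

# Toy model only: every candidate, score, weight, and threshold below is invented.


@dataclass(frozen=True)
class Candidate:
    name: str
    pathway: str          # "premed", or a service-delivery route such as "ems"
    clinical_hours: int
    test: float           # normalized test score, 0..1
    interview: float      # structured-interview score, 0..1
    context: float        # socioeconomic-context score, 0..1


POOL = [
    Candidate("A", "premed", 900, 0.95, 0.70, 0.20),
    Candidate("B", "premed", 700, 0.90, 0.60, 0.30),
    Candidate("C", "premed", 800, 0.85, 0.78, 0.40),
    Candidate("D", "premed", 600, 0.80, 0.65, 0.50),
    Candidate("E", "premed", 650, 0.70, 0.85, 0.75),
    Candidate("F", "ems", 2000, 0.82, 0.85, 0.80),
    Candidate("G", "pharmacy_tech", 1500, 0.78, 0.90, 0.90),
    Candidate("H", "rural_aide", 1800, 0.75, 0.80, 0.85),
    Candidate("I", "community_health", 300, 0.88, 0.75, 0.70),
]

OLD_WEIGHTS = {"test": 0.6, "interview": 0.2, "context": 0.2}
NEW_WEIGHTS = {"test": 0.2, "interview": 0.4, "context": 0.4}  # hypothetical "rewrite"


def narrow_filter(c: Candidate) -> bool:
    """Old rubric: only experience on a traditional pre-med pathway counts."""
    return c.pathway == "premed"


def broad_filter(c: Candidate, min_hours: int = 500) -> bool:
    """Broadened rubric: any service-delivery context counts, given enough hours.
    The 500-hour cutoff is a hypothetical stand-in for 'enough hours'."""
    return c.clinical_hours >= min_hours


def admit(pool: list[Candidate], rubric_filter: Callable[[Candidate], bool],
          weights: dict[str, float], seats: int) -> list[Candidate]:
    # Stage 1: the rubric filter decides who the algorithm ever gets to see.
    eligible = [c for c in pool if rubric_filter(c)]

    # Stage 2: the algorithm ranks only the eligible candidates by weighted score.
    def score(c: Candidate) -> float:
        return (weights["test"] * c.test
                + weights["interview"] * c.interview
                + weights["context"] * c.context)

    return sorted(eligible, key=score, reverse=True)[:seats]


def non_traditional_count(candidates: list[Candidate]) -> int:
    """Number of candidates who came through a non-pre-med pathway."""
    return sum(c.pathway != "premed" for c in candidates)


if __name__ == "__main__":
    runs = [
        ("old rubric, old weights", narrow_filter, OLD_WEIGHTS),
        ("old rubric, new weights", narrow_filter, NEW_WEIGHTS),
        ("new rubric, old weights", broad_filter, OLD_WEIGHTS),
    ]
    for label, rubric_filter, weights in runs:
        admitted = admit(POOL, rubric_filter, weights, seats=4)
        names = ", ".join(c.name for c in admitted)
        print(f"{label}: admits {names}; "
              f"{non_traditional_count(admitted)} from non-traditional pathways")
```

With these invented numbers, both narrow-filter runs admit no candidates from non-traditional pathways; the reweighting only reshuffles which pre-med applicants take the four seats (B drops out, D gets in). The broadened filter with the original weights admits three. Candidate I, whose 300 hours fall below the made-up cutoff, is screened out under every configuration, which is the filter acting, not the ranking.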

The schools that did this work are worth looking at carefully. One of them, a state system in the Midwest, had been graduating one or two students from the relevant pipelines per year for a decade. After the rubric change they were graduating ten to fifteen. The pipeline candidates had been there the whole time. The school had been measuring them against a relevance-of-experience filter calibrated to a population that did not include them. Once the filter was recalibrated, the algorithm did the rest of the work without complaint. The discovery, the part that compounds, is that the algorithm had nothing to do with the change in outcomes. The result was decided three steps upstream, in the office where someone defined what "relevant clinical experience" meant. The school never had to touch the algorithm. The upstream office had been holding the lever the whole time.

The early-data takeaway is that the algorithm-reform discourse was looking at the wrong layer. The layer that mattered was the upstream filter that decided which candidates the algorithm got to evaluate. The schools that focused on the algorithm got the press cycle. The schools that focused on the upstream filter got the result.

There is a cleaner version of this lesson outside admissions. Any selection process that runs on a homogeneous input distribution will produce a homogeneous output distribution, regardless of how the selection process is tuned. Tuning the process is the visible work. Diversifying the input is the work that compounds. The medical-school admissions reform is going to be a case study for this distinction for the next decade, and the case study will read better the longer the data accumulates.
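The general claim can be stated a little more formally. This is a sketch of the essay's own argument, with notation introduced here for illustration: let $E$ be the set of applicants who pass the upstream filter, $G$ any group of interest (for example, applicants from service-delivery pipelines), and $A_s \subseteq E$ the at most $k$ applicants that a scoring rule $s$ selects. Because $A_s \cap G \subseteq E \cap G$ and $|A_s| \le k$:

$$
|A_s \cap G| \;\le\; \min\bigl(k,\ |E \cap G|\bigr) \qquad \text{for every scoring rule } s.
$$

If no member of $G$ passes the filter, no choice of $s$ admits one; if every eligible applicant is in $G$, every choice of $s$ admits only members of $G$. Tuning $s$ rearranges candidates within $E$; only the filter changes $E$.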

—TJ