The next wave of foundation models will get the deployment layer wrong. The capex cycle guarantees it.

TL;DR

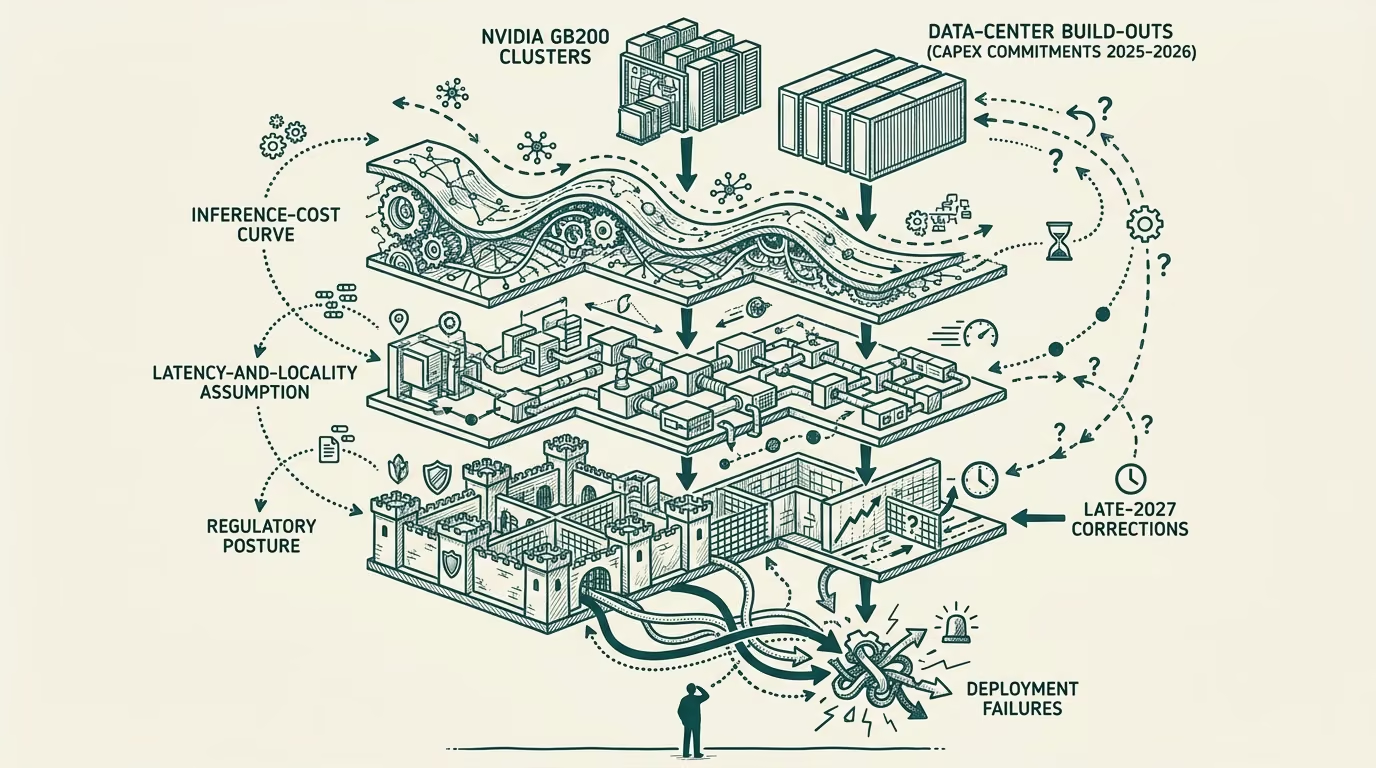

An advisor letter, written in early 2026, looking ahead to the late-2027 retrospective on the foundation-model wave currently being trained and deployed. The benchmarks these models will top are not what they will be remembered for. The deployment-layer mistakes the capex cycle is making right now are. The letter is written in the ADR-0031 mode-three inevitability register: forward-looking and conditional. The argument runs through three structural mistakes already locked in by the capex commitments of 2025: the inference-cost curve, the latency-and-locality assumption, and the regulatory posture.

To the founder I had drinks with last week, and to the half-dozen of you running adjacent companies who have been asking the same question.

You asked what I think the late-2027 retrospective on the current wave of foundation models will say. I have been turning the question over for months, and I think the honest answer is the one nobody on the model-training side wants to hear. The benchmarks the next wave is topping are not the things the wave will be remembered for. The deployment-layer mistakes the capex cycle is locking in right now, in 2025 and into 2026, are the things the late-2027 retrospective will fixate on. Three of them, in particular. I will walk through them in the order the capex cycle is committing to them, with the structural reason each is hard to course-correct once committed.

This is a forward-looking letter in the carve-out sense of [ADR-0031](decisions/0031-futurist-register-carveout.md). I am not predicting; I am laying out the structural pressures that, if they hold, produce the outcome the letter describes. Read it as a conditional argument, not a prophecy. Push back where you see the conditional failing.

The inference-cost curve assumption

The first locked-in mistake is the cost-curve assumption baked into the next-wave deployment plans. The capex commitments of 2025, which include the multi-billion-dollar data-center build-outs at the major training labs, the Nvidia GB200-class cluster orders shipping through 2026, and the long-term power-purchase agreements for the new compute footprint, were modeled against a particular assumption about where inference cost lands on the cost curve in late 2026 and into 2027. The assumption is that inference cost per useful task drops at a rate consistent with the 2023-2024 trajectory, that the demand grows at a rate that absorbs the capex, and that the unit economics of the deployment layer remain stable enough to amortize the build.

The first of those holds. The second, on current evidence, does not, and the third depends on the second. The demand curve for high-end inference is bifurcating into a high-margin enterprise tier (where the customer values a marginal accuracy improvement enough to pay non-commodity prices) and a commodity tier (where the customer is indifferent between the frontier model and a model two generations behind, because the two-generations-behind model is good enough for the workload and costs an order of magnitude less). The capex was sized for a single-tier-with-headroom market. The market is producing a two-tier shape with a structurally different revenue curve. The capex-amortization assumption was sized against the wrong shape.
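To make the shape mismatch concrete, here is a toy sketch in Python. Every number in it is invented for illustration (the capex figure, the task volumes, the prices, the serving costs), and `Tier` and `years_to_amortize` are hypothetical names, not anyone's actual planning model. It shows only how the same capex pays back very differently once most of the volume lands in a low-margin commodity tier.

```python
from dataclasses import dataclass


@dataclass
class Tier:
    name: str
    tasks_per_year: float   # hypothetical annual task volume
    price_per_task: float   # hypothetical price, in dollars
    cost_per_task: float    # hypothetical marginal serving cost, in dollars

    def gross_margin(self) -> float:
        """Annual margin available to pay down the build."""
        return self.tasks_per_year * (self.price_per_task - self.cost_per_task)


def years_to_amortize(capex: float, tiers: list[Tier]) -> float:
    """Simple payback period: capex divided by total annual margin."""
    margin = sum(t.gross_margin() for t in tiers)
    return float("inf") if margin <= 0 else capex / margin


CAPEX = 10e9  # hypothetical build cost, in dollars

# What the plan assumed: all volume monetizes at the premium price.
single_tier = [Tier("premium-for-all", 100e9, 0.05, 0.01)]

# What the market is producing: a thin premium slice, and a large
# commodity slice priced an order of magnitude lower.
two_tier = [
    Tier("enterprise", 10e9, 0.05, 0.01),
    Tier("commodity", 90e9, 0.005, 0.003),
]

print(f"single-tier payback: {years_to_amortize(CAPEX, single_tier):.1f} years")
print(f"two-tier payback:    {years_to_amortize(CAPEX, two_tier):.1f} years")
```

With these made-up inputs the single-tier plan pays back in about 2.5 years and the two-tier market in about 17. The exact figures mean nothing; the gap between them is the point.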

The retrospective on this in late 2027 will note, with some precision, which labs read the bifurcation early and re-aimed their deployment-tier offering at the high-margin enterprise slice, and which labs kept assuming the commodity tier would monetize at premium prices because of brand. The labs that read it early recover. The labs that did not read it early run into the structural problem that their inference revenue does not, in fact, amortize the data-center capex, and the financial reorganization happens in 2027-2028.

The mistake is not in the model. The mistake is in the cost-and-revenue model of the deployment layer the capex was sized against.

The latency-and-locality assumption

The second locked-in mistake is the latency-and-locality assumption built into the inference architecture. The current wave of deployment is centralizing inference into large multi-region data centers, with the assumption that the customer-side latency budget for a useful agentic task remains generous (single-digit seconds, sometimes more) and that the locality requirements (data sovereignty, on-premises preference, edge-to-cloud bandwidth) remain satisfiable by the regional-data-center pattern.

That assumption holds for the 2024-2025 generation of customer workloads, which were dominated by chat-class interactions where the human is the latency floor and three seconds is fine. It does not hold for the 2026-2027 generation of agentic and embedded workloads, where the agent is the latency floor and the cumulative latency budget for a multi-turn agentic task running across a workflow is sub-second, sometimes sub-hundred-millisecond. The customer needs the inference closer to the agent, and the agent is sometimes on a device, sometimes in a regional cloud, sometimes in an environment with no reliable connection back to the central data center at all.
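The arithmetic behind that shift is simple enough to sketch. The Python below is a rough illustration under assumed numbers: the round-trip times, per-call inference times, step counts, and budgets are all hypothetical, as are the function names. It assumes the agent's model calls run strictly one after another, each paying one network round trip.

```python
def task_latency_ms(steps: int, network_rtt_ms: int, inference_ms: int) -> int:
    """Total latency of `steps` sequential model calls, one round trip each."""
    return steps * (network_rtt_ms + inference_ms)


def fits_budget(steps: int, network_rtt_ms: int, inference_ms: int,
                budget_ms: int) -> bool:
    """True when the whole task finishes inside the latency budget."""
    return task_latency_ms(steps, network_rtt_ms, inference_ms) <= budget_ms


# Chat-class: one call, a human waiting, a generous budget (all hypothetical).
chat = fits_budget(steps=1, network_rtt_ms=80, inference_ms=1500, budget_ms=3000)

# Agentic, served from a regional data center: ten chained calls,
# each paying the same round trip, against a sub-second budget.
agent_central = fits_budget(steps=10, network_rtt_ms=80, inference_ms=60,
                            budget_ms=1000)

# Agentic, served on-device: no network hop, but a slower local model.
agent_local = fits_budget(steps=10, network_rtt_ms=0, inference_ms=90,
                          budget_ms=1000)

print(chat, agent_central, agent_local)
```

Under these assumptions the chat call fits comfortably, the centrally served agent overshoots its budget on round trips alone, and the on-device agent fits even though each of its calls is slower. The round trip is paid per step, so it is the term that compounds.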

The capex of 2025-2026 is committed to centralized inference in a way that is structurally hard to redirect to edge and on-device deployment. The training labs are not staffed for the on-device-optimization work; the partnerships with the device manufacturers (Apple, Google, Samsung, Qualcomm) are loosely defined; the model-architecture choices (parameter counts, attention patterns, KV-cache sizes) were optimized against centralized-inference economics, not on-device economics. The labs that committed to dual-architecture work in 2024-2025 have an option-value position in the late-2027 deployment landscape. The labs that bet entirely on centralized inference do not.

The retrospective will note that the on-device-and-edge inference layer became a meaningful share of the total inference market by mid-to-late 2027, and that the labs whose architectures and partnerships did not flex to it lost a chunk of the deployment-layer market they had been counting on. The mistake is in assuming that latency and locality are stable assumptions when the customer-workload mix is shifting to a regime where they are not.

The regulatory-posture assumption

The third locked-in mistake is the regulatory posture the current wave is being deployed into. The training labs and their deployment partners through 2025 are operating against a regulatory environment shaped by the EU AI Act provisions (most coming into force through 2025-2026), the U.S. executive-order-and-state-level regulatory patchwork, the China data-and-export regimes, and the emerging healthcare-and-finance vertical regulations. The current-wave deployment is being shaped against this environment as if it were a static target.

The structural problem is that the regulatory environment is not static and the direction of motion is consistent. The 2026-2027 trajectory is toward stricter audit, traceability, and use-case-specific certification requirements, with the certification regimes converging across jurisdictions on a few common patterns (model-card standards, evaluation-and-benchmark traceability, data-provenance documentation, deployment-context risk classification). The labs that built the audit-and-traceability tooling into their deployment stack early carry a meaningful structural advantage. The labs that treated regulatory work as a compliance afterthought, to be addressed only when forced, run into the late-2027 problem that the regulatory bar has moved past their ability to retrofit, and the customers they wanted to serve in the regulated verticals (healthcare, finance, government, education) are no longer reachable without a multi-quarter remediation project.
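For a sense of what building the tooling in early can mean at the smallest scale, here is a minimal Python sketch. It assumes a hypothetical `DeploymentRecord` that carries one field per common pattern named above; the field names, URIs, and the check itself are illustrative, not drawn from any regulation or any lab's stack.

```python
from dataclasses import dataclass, fields
from typing import Optional


@dataclass
class DeploymentRecord:
    """One record per deployment; every field here is a hypothetical name."""
    model_card_uri: Optional[str] = None        # model-card standard
    eval_run_ids: Optional[list[str]] = None    # evaluation traceability
    data_provenance_uri: Optional[str] = None   # data-provenance documentation
    risk_class: Optional[str] = None            # deployment-context risk class


def missing_evidence(record: DeploymentRecord) -> list[str]:
    """Names of the fields that are still empty, in declaration order."""
    return [f.name for f in fields(record) if not getattr(record, f.name)]


# A deployment that shipped with only a model card attached.
retrofit_case = DeploymentRecord(model_card_uri="cards/example-model.md")

# A deployment where the evidence was captured as part of the release.
built_in_case = DeploymentRecord(
    model_card_uri="cards/example-model.md",
    eval_run_ids=["eval-001"],
    data_provenance_uri="provenance/example-model.json",
    risk_class="high",
)

print(missing_evidence(retrofit_case))
print(missing_evidence(built_in_case))
```

The design point is that a check like this can gate a release when the record is populated as part of the engineering workflow. When the evidence has to be reconstructed after the fact, the empty fields are the multi-quarter remediation project.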

The retrospective will note that the labs that ran the regulatory-posture work as a first-class engineering project, with dedicated staffing and product-tier integration, captured the high-margin regulated-vertical revenue. The labs that ran it as compliance-and-legal, downstream of the engineering decisions, lost it. The regulated verticals are where the revenue is in 2027-2028, and the deployment-layer architecture you built in 2025-2026 either supports them or it does not.

The mistake is in building against a static regulatory snapshot rather than against the regulatory trajectory.

What I would do if I were running one of these labs

Here is the part of the letter that is closer to advice than to retrospective. If you are running one of the current-wave labs, or one of the deployment-tier companies sitting on top of them, the leverage in 2026 sits in three places.

First, run the cost-and-revenue model against the bifurcated market shape, not the single-tier shape. Re-examine whether the deployment-tier offerings you are building are sized against the commodity tier or the enterprise tier, and stop pretending you are serving both with a single product line. The unit economics for the two tiers are structurally different, the customers are structurally different, and the go-to-market for each is structurally different. Pick which one you are building for, build for it well, and partner or step out of the other.

Second, build the on-device-and-edge optionality now. This requires re-staffing some of the architecture and optimization team, building partnerships with the device-class manufacturers, and accepting that the on-device economics will be lower-margin per task at higher volume. The labs that build this capacity have a position in the 2027-2028 deployment landscape that the labs that do not build it cannot recover. The capex committed today does not have to be capex that constrains your 2027 architecture if the engineering and partnership work runs in parallel.

Third, treat the regulatory work as a first-class engineering project. The audit-and-traceability tooling, the evaluation-and-provenance documentation, the deployment-context risk infrastructure: these are engineering investments that compound. Build them now while the regulations are still forming, not later when they are already binding. The labs that did this in 2024-2025 are already showing it in their enterprise-deal velocity. The gap will widen.

The current wave of foundation models is impressive in ways the benchmarks capture and impressive in ways they do not. The retrospective in late 2027 will not be primarily about the benchmarks. It will be about the structural decisions the deployment layer made or did not make, with the capex cycle of 2025 as the constraint that shaped the option space.

I am writing this letter because I have watched the same shape of mistake unfold in two prior infrastructure cycles. The companies that read the deployment-layer wrong did not run out of capability; they ran out of the operating environment to deploy the capability into. The capability is genuinely there in the next wave. The deployment-layer environment is the part that is being underbuilt, and the underbuild is the structural reason the late-2027 retrospective will read the way I think it will.

Push back where you disagree. The conditional is the conditional, and I am open to being wrong about which structural pressures hold and which do not. The shape of the argument is what I am most confident about. The specific landing dates are a guess.

Yours in the operator-class trenches,

TJ