OpenAI o1 introduced slow thinking. The operator question is where slow thinking earns its 4x premium.

TL;DR

OpenAI's o1 release in September 2024 introduced the reasoning-model class to the foundation-model market. The capability gains on math, code, and complex-reasoning benchmarks were substantial; the per-token cost premium was roughly 4x against the previous GPT-4o tier. Trade-press coverage concentrated on the capability gains and generally did not surface the operator question: where does the 4x premium earn itself back in production? This medium-length essay walks through the capability, the cost framing, the two sectors where the math works, and the broader category where it does not.

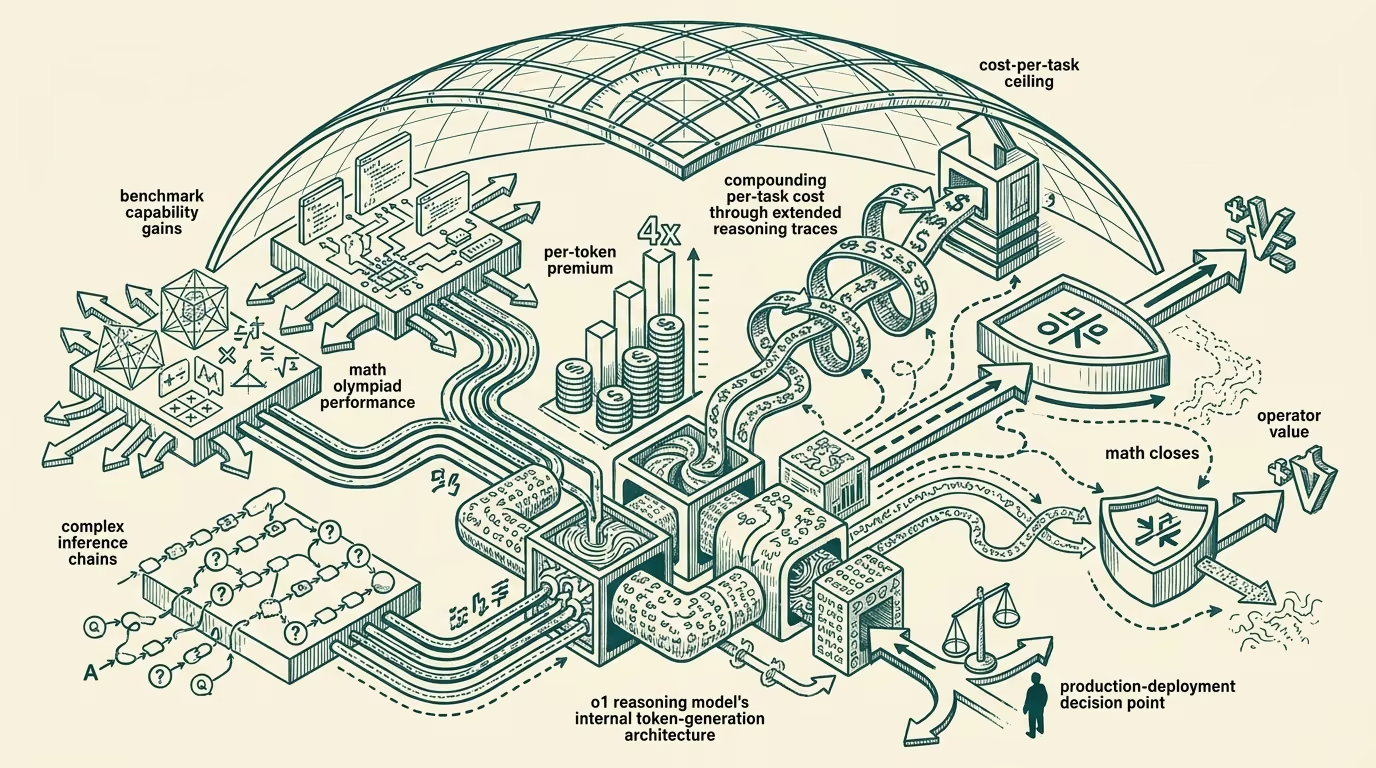

OpenAI released the o1 model family in September 2024, introducing the reasoning-model class to the foundation-model market. The capability gains on math, code, and complex-reasoning benchmarks were substantial relative to the previous GPT-4o tier: the public benchmark numbers showed roughly 83 percent on a qualifying exam for the International Mathematics Olympiad against GPT-4o's roughly 13 percent. The per-token cost premium for o1 against GPT-4o ran at roughly 4x at launch. The actual per-task premium was higher, because o1 generates more tokens per query through the reasoning trace.

The trade-press coverage of o1 concentrated on the capability gains, with the broader interpretation framing reasoning models as a meaningful step-change in the foundation-model trajectory. The coverage did not generally engage with the operator question that should have been load-bearing in any production-deployment evaluation: where does the 4x premium earn itself back, and where does it not?

This essay walks through the capability, the cost framing, the two sectors where the math works in operator-tier deployment, and the broader category where it does not.

The capability and the cost framing

The o1 reasoning capability is genuine. The model produces substantially better results than GPT-4o on tasks that require multi-step reasoning, complex math, or structured planning, and more generally on tasks where the answer depends on chained inferences rather than on direct retrieval or recognition. The 4x token-cost premium reflects the additional compute the model spends on the reasoning trace, and that trace is what produces the capability gains the benchmarks measure.

The cost framing matters because a 4x premium is large enough to require explicit justification in any production deployment. A vendor or operator deploying o1 where GPT-4o would have produced an acceptable result is paying 4x more for the same operational outcome. The deployment math only works where the additional capability actually translates into proportionally greater value capture.
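To make the gap between the per-token premium and the per-task premium concrete, here is a minimal sketch. The prices and token counts are hypothetical, picked only so that the per-token ratio is 4x, and `per_task_cost` is an illustrative helper rather than any vendor's API. The sketch assumes reasoning tokens are billed at the output rate.

```python
def per_task_cost(input_tokens, output_tokens, input_price_per_m, output_price_per_m):
    """Dollar cost of one task, given token counts and prices per million tokens."""
    return (input_tokens * input_price_per_m + output_tokens * output_price_per_m) / 1_000_000


# Hypothetical prices (dollars per million tokens); only the 4x ratio matters here.
BASE_IN, BASE_OUT = 2.50, 10.00
REASONING_IN, REASONING_OUT = 10.00, 40.00

# Hypothetical task: 2,000 prompt tokens and a 500-token visible answer.
base = per_task_cost(2_000, 500, BASE_IN, BASE_OUT)

# Assumption: the reasoning model also emits 3,000 reasoning tokens,
# billed as output on top of the visible answer.
reasoning = per_task_cost(2_000, 500 + 3_000, REASONING_IN, REASONING_OUT)

multiple = reasoning / base
print(f"base ${base:.4f}, reasoning ${reasoning:.4f}, per-task multiple {multiple:.1f}x")
```

Under these assumed numbers the per-task multiple lands near 16x rather than 4x, because the trace multiplies the token count as well as the price. The real multiple depends entirely on how long the trace runs for a given workload.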

Where the math works: travel-class disruption management

The first sector where the o1 premium earns itself back is travel-class disruption management. The agentic-rebooking workflow discussed elsewhere has the right shape for the reasoning-model premium: the task is multi-step, the constraints are bounded but complex, the failure-cost of producing a wrong answer is high (the customer's rebooking is wrong, the airline's customer-service-recovery cost is meaningful, the regulatory-class exposure for misrepresentation is real), and the per-task value capture is substantial because each correctly-handled disruption saves the operator real dollars in compensation, customer-service-time, and customer-trust impact.

The math runs roughly as follows. The cost of a 4x premium on the reasoning model for a disruption-rebooking workflow is on the order of single-digit dollars per task. The avoided cost of a correctly handled disruption versus a mishandled one runs into the low hundreds of dollars per case (compensation, recovery, customer-service time). At these magnitudes the reasoning model only has to prevent a mishandled case in a few percent of tasks for the expected saving to exceed the premium. The math supports the premium with substantial margin, and the operator deploying reasoning models in this category produces meaningful ROI.
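The break-even condition behind this arithmetic can be sketched in a few lines. The dollar figures are hypothetical values chosen inside the ranges quoted above ("single-digit dollars" and "low hundreds"), and `breakeven_error_reduction` is an illustrative name, not something from a deployed system.

```python
def breakeven_error_reduction(premium_cost, avoided_cost):
    """Minimum drop in wrong-answer rate (as a fraction of tasks)
    at which the reasoning-model premium pays for itself."""
    return premium_cost / avoided_cost


# Hypothetical travel-disruption numbers, for illustration only.
premium_per_task = 5.00      # extra dollars per task for the reasoning model
avoided_per_case = 200.00    # dollars saved when a disruption is handled correctly

threshold = breakeven_error_reduction(premium_per_task, avoided_per_case)
print(f"premium pays off if wrong answers drop by more than {threshold:.1%} of tasks")
```

With these inputs the threshold is 2.5 percent of tasks. Any workflow where the reasoning model improves the correct-handling rate by more than that clears the bar, and anything below it does not.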

The deployment data through 2024-2025 has supported this read. The major airlines and TMC operators that deployed o1-class reasoning models for disruption-management workflows have reported operational improvements that justify the premium. Vendors building products in this category have shifted toward reasoning-model integration as the default architecture.

Where the math works: healthcare-class clinical-decision-support

The second sector where the math works is healthcare-class clinical-decision-support. The clinical-evidence-review workflow that supports prior-authorization, treatment-planning, and broader clinical-judgment work has the right shape: the task requires multi-step reasoning across the clinical evidence, the failure cost of producing a wrong answer is substantial (patient-care impact, regulatory-class exposure, malpractice-risk), and the per-task value capture is meaningful because correct clinical-judgment-support saves the clinician time and improves the decision quality.

The math runs similarly to the travel case. The cost of the 4x premium on a reasoning model for a clinical-decision-support workflow is on the order of single-digit dollars per task. The avoided cost of correct versus wrong clinical-judgment support runs into the low thousands of dollars per case in some categories (avoided treatment failure, avoided unnecessary procedures, avoided regulatory or malpractice exposure). The math supports the premium with substantial margin, and the operator deploying reasoning models in clinical-decision-support produces meaningful ROI when the deployment infrastructure is correctly built.

The deployment data through 2024-2025 in this category has been more variable than in the travel-disruption category. The variance is driven by deployment quality and clinical integration rather than by the underlying capability. Where the deployment is done well, the math works. Where the deployment is done poorly, the math does not work, because the wrong-answer rate stays high enough that the premium does not produce proportional value.
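A small sensitivity check shows how deployment quality flips the sign of the result. Everything here is an assumption for illustration: the dollar figures sit inside the ranges quoted above, and the two "realized error reduction" values are invented to stand in for a well-integrated and a poorly integrated deployment.

```python
def net_value_per_task(premium_cost, avoided_cost, realized_error_reduction):
    """Expected dollars gained per task after paying the reasoning-model premium."""
    return realized_error_reduction * avoided_cost - premium_cost


PREMIUM = 5.00       # hypothetical, "single-digit dollars per task"
AVOIDED = 2_000.00   # hypothetical, "low thousands of dollars per case"

# Realized error reduction: share of tasks where the deployed system actually
# turns a wrong outcome into a right one. Both values are made up.
scenarios = {"well integrated": 0.02, "poorly integrated": 0.001}

results = {
    name: net_value_per_task(PREMIUM, AVOIDED, reduction)
    for name, reduction in scenarios.items()
}
for name, value in results.items():
    print(f"{name}: net ${value:+.2f} per task")
```

The model and the price are identical in both scenarios. Only the share of tasks where the capability reaches the clinical outcome changes, and that alone decides whether the premium is recovered.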

Where the math does not work

For the broader category of agent-and-AI deployments, the 4x reasoning-model premium often does not earn itself back. Most consumer-facing chatbot deployments, most simple query-and-response workflows, most retrieval-augmented-generation use cases, and most general-purpose assistant deployments produce per-task value capture that does not justify the 4x premium against the GPT-4o-class alternative.

The pattern is recognizable across the broader category. The reasoning-model deployment makes sense when the task structure is multi-step, the failure-cost is high, and the per-task value-capture is meaningful. The reasoning-model deployment does not make sense when any of those conditions does not hold. The default deployment for most operator production work continues to be the GPT-4o-class tier (or its successors), with the reasoning-model deployment being reserved for the specific use cases where the math works.
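The three-condition test above is simple enough to write down as a routing rule. This is a sketch of the framework as stated, not a production router: the `UseCase` fields and the tier labels are hypothetical names, and in practice each condition is a judgment call rather than a clean boolean.

```python
from dataclasses import dataclass


@dataclass
class UseCase:
    name: str
    multi_step: bool                  # task needs chained reasoning
    high_failure_cost: bool           # a wrong answer is expensive
    meaningful_value_per_task: bool   # each correct task captures real value


def choose_tier(use_case: UseCase) -> str:
    """Return 'reasoning' only when all three conditions hold, else 'default'."""
    if (
        use_case.multi_step
        and use_case.high_failure_cost
        and use_case.meaningful_value_per_task
    ):
        return "reasoning"
    return "default"


examples = [
    UseCase("disruption rebooking", True, True, True),
    UseCase("FAQ chatbot", False, False, False),
    UseCase("RAG document lookup", False, True, False),
]
for case in examples:
    print(f"{case.name}: {choose_tier(case)}")
```

The rule is a conjunction on purpose: failing any single condition sends the use case back to the cheaper default tier.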

The vendor-class temptation is to deploy reasoning models everywhere because the capability gains are visible and the marketing positioning supports it. The discipline that holds up is to evaluate each specific use case against the cost-and-value framework and to deploy reasoning models only where the framework supports it.

What this leaves the operator class with

For founders building AI products in 2025-2026, the practical advice is to evaluate the reasoning-model premium against the specific use case rather than against a general capability framing. The premium earns itself back in selected categories with substantial margin. It does not earn itself back in the broader category, where the GPT-4o-class deployment continues to be the appropriate default.

For investors evaluating AI products, the read is that the deployment-architecture choice (reasoning model versus the cheaper alternative) is a substantive operational dimension that the diligence should attend to. Companies that have built around reasoning-model deployment for use cases that do not justify the premium are running unit economics that do not support the deployment scale they have committed to.

The o1 release introduced slow thinking. The operator question is where slow thinking earns its 4x premium. The two sectors above are where the math works. The broader category is where it does not. Build the deployment architecture against the math, not against the capability headline. The category will continue to produce reasoning-model alternatives at progressively cheaper price points; the architecture choice will continue to be the operator-tier question that determines whether the deployment ROI works.

—TJ