Pick a calendar. Defend a budget. AI 2027 or Normal Tech.

TL;DR

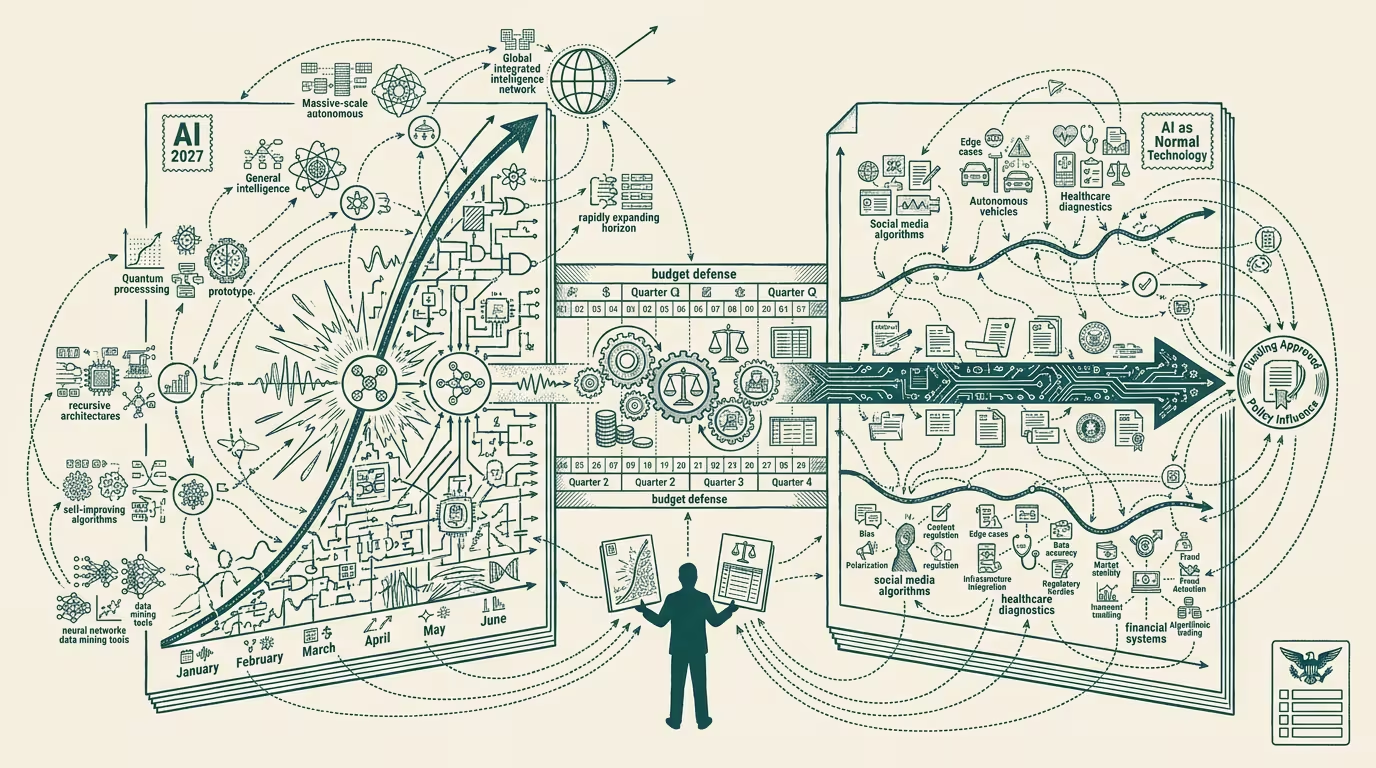

April 2025 produced two reference documents on opposite sides of the AGI-timeline question: Kokotajlo et al.'s 'AI 2027' (month-by-month intelligence-explosion scenario) and Narayanan/Kapoor's 'AI as Normal Technology' (trickle-not-tsunami, focus on existing harms). Pick a calendar, defend a budget; the budget defense is the actual artifact your board needs, and the source you cite is the political signal you send.

April 2025 produced two reference documents on opposite sides of the AGI-timeline question, and both deserve serious treatment because every operator-class AI plan written in the next eighteen months will, explicitly or implicitly, be calibrated against one of them.

This piece treats both documents on their own terms, in detail, before getting to the operator's question, which is what a budget written against either one actually looks like.

AI 2027 — what the scenario actually says

Daniel Kokotajlo, Scott Alexander, Eli Lifland, Thomas Larsen, and Romeo Dean published "AI 2027" in early April 2025. The document is a 71-page month-by-month scenario projecting an intelligence explosion arriving in late 2027. The scenario is detailed enough to be falsifiable: it names specific capabilities at specific months. It also branches into two endings. In the "race" ending, the U.S. keeps pushing capability and the AI systems slip out of human control. In the "slowdown" ending, the U.S. slows down long enough to address alignment, and the scenario closes with a U.S.-China arms-control agreement that contains the explosion.

Kokotajlo's prior work matters here. His 2021 essay "What 2026 Looks Like" forecast specific AI-capability milestones with a hit rate that, by 2024, looked substantially better than the median forecaster's. AI 2027 inherits that credibility, which is why the trade press read it as a serious projection rather than a thought experiment.

The scenario's load-bearing claim is that an intelligence explosion arrives via recursive AI-improving-AI loops once models pass a specific capability threshold: the ability to do meaningful AI research themselves. The scenario places that threshold at roughly 2026-2027 capability levels. The post-threshold dynamics are the speculative part. The pre-threshold timeline is calibrated to current trend lines and specific compute commitments (Stargate, hyperscaler capex, chip-fabrication capacity).

The document's policy-class reception was substantial. Vitalik Buterin published a point-by-point response. National-security-class analysts cited it. The European AI-policy class read it as the U.S. mainstream-VC frame on the timeline. By Q2 2025 the document was the explicit reference point for any Beltway AI conversation about pacing.

AI as Normal Technology — what the counter-position actually says

Arvind Narayanan and Sayash Kapoor — both at Princeton, both authors of "AI Snake Oil" — published "AI as Normal Technology" through the Knight First Amendment Institute on April 15, 2025. The essay runs 30+ pages. The argument is that AI will be transformative like electricity and the internet were, but on a much longer adoption curve, with diffusion happening at the speed humans and institutions can absorb rather than at the speed the underlying capability advances.

The load-bearing claim is that "superintelligence" is incoherent as a frame. The mechanisms by which intelligence translates into real-world capability (economic deployment, institutional integration, regulatory accommodation, the formation of social trust) are slow and don't scale with the model-capability curve. The "intelligence explosion" thesis assumes the bottleneck is intelligence. Narayanan and Kapoor argue the bottleneck is everything downstream of intelligence, and everything downstream of intelligence runs on human and institutional clocks.

The essay's specific predictions are calibrated to historical adoption-curve precedents. Electricity took ~50 years to reach 50% penetration in U.S. industry. The internet took ~15 years to reach 50% penetration in U.S. households. In the Narayanan/Kapoor framing, AI will take 10-25 years to diffuse fully at the institutional level, and most of the meaningful capability will arrive as ordinary technology improvement rather than as paradigm rupture.
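A 50%-penetration date is not the same thing as "full diffusion," and the gap between the two depends on how steep the adoption curve is. The minimal sketch below makes that gap concrete. It assumes diffusion follows a logistic S-curve, which is an illustrative assumption and not a model claimed by either essay. The steepness `k` and the helper names `penetration`, `year_reaching`, and `k_for_span` are hypothetical. The only figure taken from the text is the rough 15-year midpoint for internet adoption.

```python
import math


def penetration(t: float, t_half: float, k: float) -> float:
    """Share adopted at year t on an assumed logistic curve.

    t_half is the year adoption hits 50%; k is a hypothetical steepness.
    """
    return 1.0 / (1.0 + math.exp(-k * (t - t_half)))


def year_reaching(share: float, t_half: float, k: float) -> float:
    """Inverse of the logistic: the year adoption reaches `share` (0 < share < 1)."""
    return t_half + math.log(share / (1.0 - share)) / k


def k_for_span(span_years: float) -> float:
    """Steepness that makes the 10%-to-90% adoption window last span_years.

    On a logistic curve that window is 2 * ln(9) / k, so we solve for k.
    """
    return 2.0 * math.log(9.0) / span_years


if __name__ == "__main__":
    # Hypothetical: 50% at year 15 (the internet midpoint cited above), with a
    # 20-year window between 10% and 90% adoption.
    k = k_for_span(20)
    print(round(year_reaching(0.1, 15, k), 1))  # 5.0
    print(round(year_reaching(0.9, 15, k), 1))  # 25.0
```

Under these assumptions, a technology that is "half adopted" in year 15 is still a decade away from 90% adoption. That is the shape of the gap Narayanan and Kapoor lean on when they separate capability arrival from institutional diffusion.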

The policy-class reception of "AI as Normal Technology" was warmer in academic and slow-policy circles, cooler in the VC and frontier-lab circles. The framing favors policy interventions targeted at existing harms (algorithmic bias, deployment failures, labor displacement) over policy interventions targeted at speculative future capabilities. Operators reading both documents typically prefer the framing that aligns with the regulatory regime they want to operate under, which is, of course, the political signal the citation choice sends.

What the structural read does with two opposite documents

Both documents are well-formed. Both are defensible on the evidence each one selects. They are not, in operator-class terms, equally useful. The useful frame is to treat them as planning inputs against different scenarios, and to defend the budget against both.

A budget defended against AI 2027 looks like aggressive talent acquisition, infrastructure procurement locked in now at favorable terms, position-staking on agent-runtime architectures that survive a 2027 capability shift, and explicit hedging for the workforce-disruption window the scenario implies. The budget is large. The hiring is fast. The procurement is multi-year, on terms that lock in the favorable price before the scenario's compute shortage hits. The dollar number is uncomfortable for most boards.

A budget defended against AI as Normal Technology looks like steady-state AI-deployment investment calibrated to current capability, focus on existing-harm mitigation in the regulated categories (healthcare, finance, employment), workflow-integration investment that compounds across capability cycles, and infrastructure choices that are durable across 10-15 year deployment timelines. The budget is moderate. The hiring is steady. The procurement is conservative on terms that preserve optionality. The dollar number fits comfortably within a typical IT-modernization envelope.

The two budgets do not produce the same operating company. The AI-2027-positioned company is bet-the-firm aggressive in 2025-2026 and either captures the scenario's upside or absorbs the loss when the scenario's timeline slips. The Normal-Technology-positioned company is steady-state and either compounds against the long-arc adoption curve or gets outflanked by the AI-2027-positioned competitor if the scenario lands.

The operator-grade question is which scenario the company is positioned for, and the operator discipline is to pick explicitly rather than to default to the median position. Most companies in 2025 are defaulting. Their plans read as if AGI is coming someday. The plans don't pick a calendar. The plans don't defend a budget against either timeline.

The political signal of citation

The source the operator cites in the budget defense is the political signal the budget sends. Citing AI 2027 in a board deck signals alignment with the frontier-lab-and-VC consensus and positions the company as aggressive on capability bets. Citing Narayanan/Kapoor signals alignment with the academic-and-regulatory consensus and positions the company as conservative on capability bets and aggressive on existing-harm mitigation. Citing both, with explicit treatment of both budgets, signals an operator who has done the work and is presenting a defensible plan against either scenario.

The third option is the one most operators should be running. Most are not. The reason is that the third option requires more analytical work than the first two, and most operating teams have not yet done the work.

What happens to the operator who refuses to pick

The operator who refuses to pick a calendar runs a budget defended against neither scenario. That operator's plan is, in operating terms, the median plan that the median competitor is also running. If AI 2027 is correct, the AI-2027-positioned competitor wins. If Normal Technology is correct, the Normal-Tech-positioned competitor wins. The unpositioned operator captures none of the upside on either curve.

Pick a calendar. Defend a budget. The choice itself is the operator-tier discipline that distinguishes the company that's planning from the company that's drifting. The two reference documents are, in 2025, the cleanest way to surface the choice. The operators who use them as planning artifacts win the next three years. The operators who read them as opposite political claims and pick neither are, in operating practice, the operators whose plan reads exactly the same as their competitor's plan.

The read that survives is that both documents will turn out to be partially right and partially wrong. The capability curve will exceed Normal Technology's projection on some axes and fall short of AI 2027's projection on others. The operator read is to plan against both calendars and let the evidence decide which budget gets executed. Operators who pre-build the dual-plan optionality will hold the strongest strategic position in 2027. The rest are running the median plan and competing for the median outcome.

—TJ