I trust eval results from companies that do not sell the model being evaluated. The Texas AG settlement is why that matters.

TL;DR

Tight commentary on the trust hierarchy for AI eval results. Evals published by organizations with no commercial stake in the model being evaluated are more trustworthy than evals published by the model vendor or by organizations the vendor funds. The Texas AG healthcare-AI settlement made the consequence of trusting vendor evals visible. This piece names the categories of eval-publishing organization and the trust weight each one warrants.

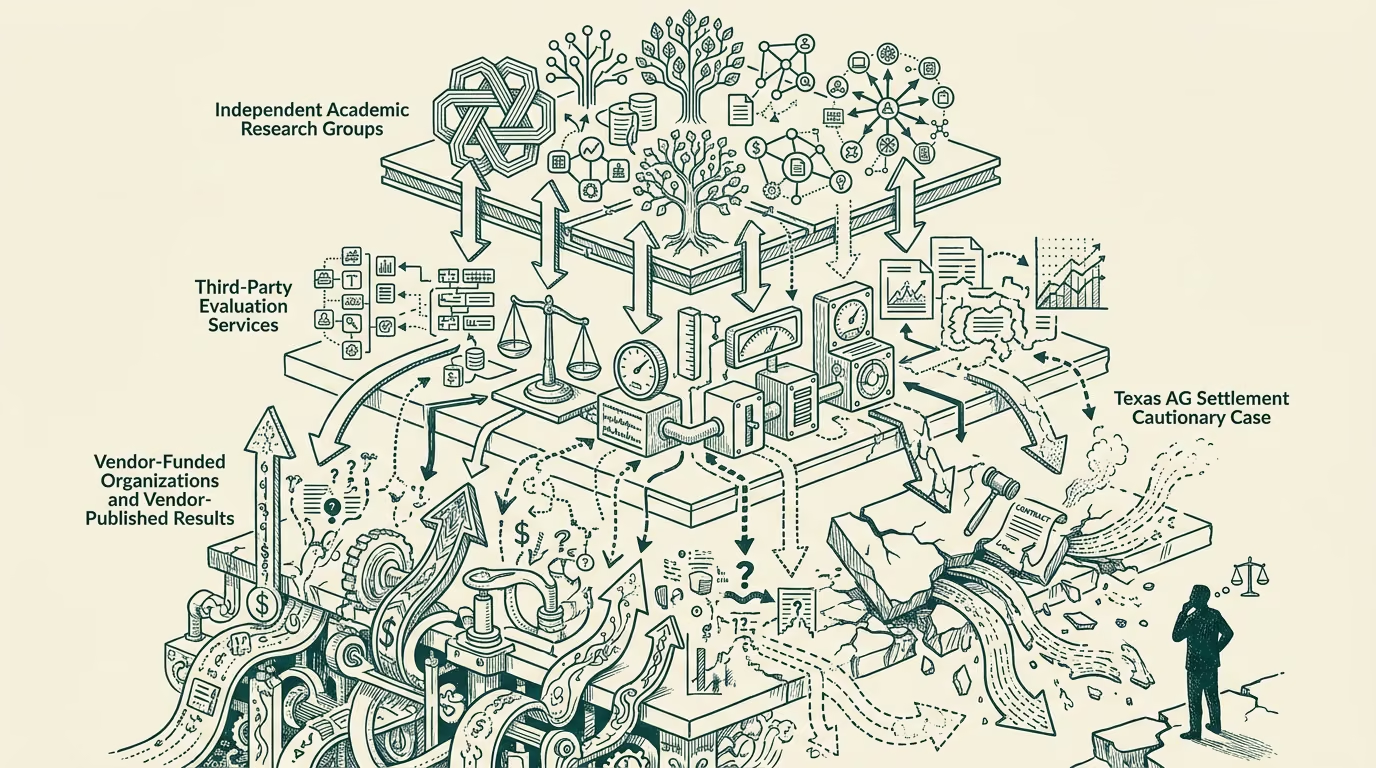

The trust hierarchy for AI evaluation results is straightforward in principle and undertaught in practice. Evaluations published by organizations with no commercial stake in the model being evaluated are systematically more trustworthy than evaluations published by the model vendor itself or by organizations the vendor funds. The Texas AG healthcare-AI settlement made the consequence of trusting vendor eval results without independent verification publicly visible. The settlement is one example. The pattern generalizes.

Several categories of eval-publishing organization warrant different trust weights, and buyers and evaluators should calibrate accordingly.

Independent academic research groups operating at universities, with peer-reviewed publication of their methodology and results, sit at the top of the trust hierarchy. Examples include university-housed AI safety and evaluation labs, academic benchmark consortia that operate without commercial funding from the vendors whose models they evaluate, and the broader academic community that publishes evaluation work in peer-reviewed venues. The trust weight is high because the academic incentive structure rewards methodological rigor and penalizes sloppy work over the multi-year horizon of a research career.

Independent third-party evaluation services that the buyer pays for, with explicit contractual independence from the vendor, sit just below the academic group. The trust weight is high because the buyer pays for the service, not the outcome, which aligns the evaluator's incentives with rigorous evaluation. The category includes the specialty evaluation services that healthcare, financial-services, and other regulated-industry buyers contract with for diligence work.

Government-funded or regulator-funded evaluation infrastructure (NIST, the various sector-specific regulatory evaluation programs, the FDA's evaluation-related work, the European Commission's AI evaluation infrastructure) also warrants substantial trust because the funding source is structurally separated from the vendor's commercial interest. The category produces evaluations on a slower timeline than the academic or third-party categories but with substantial methodological rigor.

Industry consortia and standards bodies (MLCommons-style organizations, ISO standards bodies, the broader mixed industry-and-academic groups) sit at moderate trust weight. The methodology is generally sound, but the funding and governance structures sometimes include vendors, so the evaluation results can be subtly shaped by vendor concerns. The category is useful but should be read with attention to the funding and governance details.

Vendor-published self-evaluation results sit near the bottom of the trust hierarchy. The vendor's incentive structure rewards results that support the vendor's commercial positioning, so even rigorous internal evaluations are subject to selection and publication bias. The category is useful as a starting point for the buyer's diligence work but should never be the basis of the buyer's decision without independent verification.

Industry-sponsored research that runs through nominally independent organizations but is funded by the vendor whose model is being evaluated sits below even the vendor-published category. The trust weight is the lowest because the appearance of independence is not matched by actual structural independence, and the funding relationship distorts the evaluation in ways the published results may not surface.

The hierarchy generalizes across verticals. Healthcare-AI buyers should weight academic and regulator-funded evaluations heavily, third-party service evaluations next, industry-consortium evaluations carefully, vendor self-evaluations as starting points only, and industry-sponsored, nominally independent evaluations with substantial skepticism. Buyers in other verticals running similar diligence work should apply the same hierarchy.
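For buyers who track eval evidence in a spreadsheet or script, the ordering above can be sketched in a few lines of Python. This is a minimal illustration, not a scoring method: the names `Source`, `EvalReport`, `rank_reports`, and `needs_independent_verification` are hypothetical, the ranks are ordinal only (no numeric weights are implied), and treating the academic, third-party, and government categories as the ones that count as independent verification is an assumption layered on the hierarchy.

```python
from dataclasses import dataclass
from enum import IntEnum


class Source(IntEnum):
    """Eval publisher categories, in the order they are listed above.

    Lower value = listed earlier = more trusted. Values are ordinal ranks
    only, not weights.
    """
    ACADEMIC = 1
    THIRD_PARTY_SERVICE = 2
    GOVERNMENT = 3
    INDUSTRY_CONSORTIUM = 4
    VENDOR_SELF = 5
    VENDOR_FUNDED_NOMINALLY_INDEPENDENT = 6


# Assumption: only these categories count as independent verification.
# Consortium results are left out because their funding and governance
# need a case-by-case read.
INDEPENDENT = {Source.ACADEMIC, Source.THIRD_PARTY_SERVICE, Source.GOVERNMENT}


@dataclass(frozen=True)
class EvalReport:
    title: str
    source: Source


def rank_reports(reports):
    """Return reports ordered from most to least trusted source category."""
    return sorted(reports, key=lambda r: r.source)


def needs_independent_verification(reports):
    """True when no report in the evidence set comes from an independent source."""
    return not any(r.source in INDEPENDENT for r in reports)


if __name__ == "__main__":
    evidence = [
        EvalReport("Vendor benchmark whitepaper", Source.VENDOR_SELF),
        EvalReport("University lab replication", Source.ACADEMIC),
    ]
    for report in rank_reports(evidence):
        print(report.source.name, "-", report.title)
    print("Needs independent verification:", needs_independent_verification(evidence))
```

The point of the second function is the rule stated earlier: a pile of vendor-published or vendor-funded results, however large, does not substitute for one independent evaluation.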

The Texas AG settlement was the public consequence of buyers who had not adequately calibrated against this hierarchy. The next category of AI deployments will continue to produce similar settlements when buyers skip the calibration. The diligence work is straightforward; the discipline to apply it is the part that varies. Apply it.

—TJ