The agent runtime will be the operating system, not the model alone.

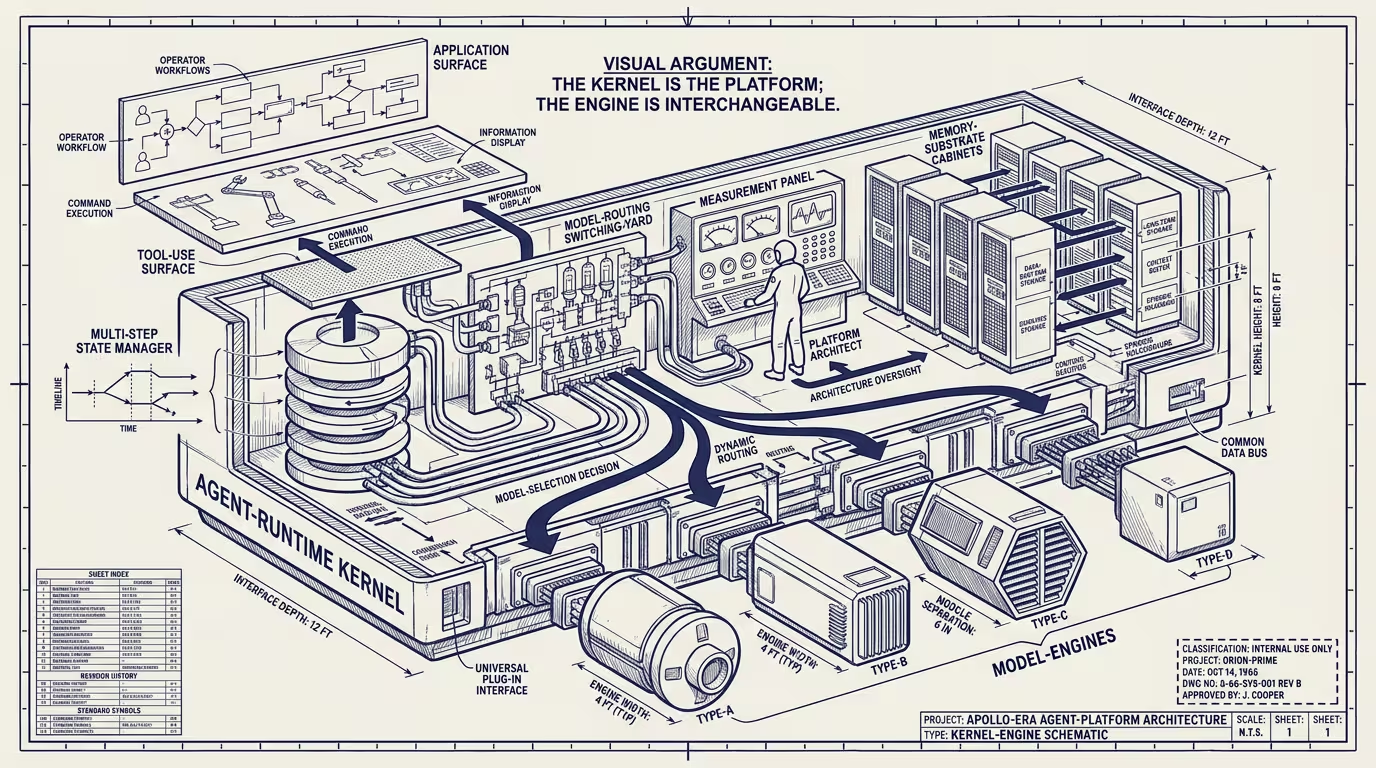

By late 2024, the question of which company wins the AI platform decade is being asked as if the foundation model were the platform. It is not. The model is the engine. The agent runtime is the kernel: the layer that decides which model is called when, manages the multi-step state, owns the tool-use surface, and persists the work across sessions. The operating-system analogy is closer than the analogy to a programming language or to cloud compute. Naming the runtime as the OS changes what the next platform-tier company looks like and what the operator should be building against.

I have been running agents in production for most of 2024. The platform conversation around them is wrong. The wrongness sits at the kernel boundary, and almost nobody in the conversation is naming it.

Here is the conversation I keep getting pulled into. Which lab wins. Which model has the best benchmark scores this quarter. Which company emerges as platform-tier by 2027. Every version of it treats the foundation model as the platform, exactly the way the 1990s personal-computer conversation treated the CPU as the platform. Intel made enormous money. Microsoft captured the platform rents. The CPU was the engine. The OS was the layer the application developer built against, the layer that decided which CPU instructions to issue, the layer the user actually experienced. Operators in 1993 who bet on the chip lost the next twenty years to operators who bet on the OS.

The agent runtime is in the OS position.

Let me be specific. The model-is-platform frame is sticky, and the operator who keeps building against the model API is going to spend the next two years paying for it.

What does the agent runtime actually do? It decides which model gets called for a given step. It manages state across calls in a single workflow. It owns the tool-use surface: which APIs the agent can hit, which authentication is in scope, which data the agent can read or write, what actions are allowed without a human in the loop. It persists the work across sessions, which is the part nobody is naming loudly enough. The persistence has to survive model deprecations, capability changes, and the routine churn of the underlying model layer. It is the layer the operator is building against. The model is downstream.
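To make those responsibilities concrete, here is a minimal sketch of a runtime's dispatch layer under toy assumptions. The class names, step kinds, model names and tools (`Runtime`, `ToolPolicy`, `classify`, `issue_refund` and so on) are all hypothetical and stand in for no real product's API. The sketch shows the runtime, not the application, choosing the engine per step, carrying state between calls, and refusing an action that needs a human in the loop.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict

# Hypothetical engine signature: prompt in, text out. A real runtime would
# wrap vendor API calls behind this shape.
ModelFn = Callable[[str], str]
ToolFn = Callable[[str], str]


@dataclass
class ToolPolicy:
    """Per-tool permission: is the tool in scope, and does it need a human?"""
    allowed: bool
    needs_human_approval: bool = False


@dataclass
class Runtime:
    models: Dict[str, ModelFn]       # logical model name -> engine
    routes: Dict[str, str]           # step kind -> logical model name
    tools: Dict[str, ToolFn]         # tool name -> implementation
    policies: Dict[str, ToolPolicy]  # tool name -> permission
    state: Dict[str, str] = field(default_factory=dict)

    def run_model_step(self, kind: str, prompt: str) -> str:
        # The runtime, not the application, decides which engine fires.
        engine = self.models[self.routes[kind]]
        output = engine(prompt)
        # Keep the result so later steps in the workflow can read it.
        self.state[f"last_{kind}"] = output
        return output

    def call_tool(self, name: str, arg: str, human_approved: bool = False) -> str:
        # Tools without an explicit policy are out of scope by default.
        policy = self.policies.get(name, ToolPolicy(allowed=False))
        if not policy.allowed:
            raise PermissionError(f"tool {name!r} is not in scope")
        if policy.needs_human_approval and not human_approved:
            raise PermissionError(f"tool {name!r} requires human approval")
        return self.tools[name](arg)


# Usage with stub engines and tools (all names hypothetical).
rt = Runtime(
    models={"fast": lambda p: f"fast:{p}", "careful": lambda p: f"careful:{p}"},
    routes={"classify": "fast", "draft_reply": "careful"},
    tools={"read_ticket": lambda t: f"ticket {t}", "issue_refund": lambda t: f"refunded {t}"},
    policies={
        "read_ticket": ToolPolicy(allowed=True),
        "issue_refund": ToolPolicy(allowed=True, needs_human_approval=True),
    },
)
label = rt.run_model_step("classify", "where is my order?")
ticket = rt.call_tool("read_ticket", "42")
```

The choice worth noticing is that unknown tools default to out of scope: the permission model stays legible because it is a table the operator can read, not behavior buried in prompts.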

I keep ending up writing the same wrapper. First project: I rolled my own state machine, my own retry logic, my own persistence layer. Second project: I noticed it was the same shape. Third project: I started reading what the existing runtimes were doing, and every one of them was making the same five decisions. What survives a model deprecation. How much context to checkpoint between calls. How the agent recovers when a tool-use call fails halfway through. Where the long-running state actually lives. Who owns the audit trail. Most of them are making at least one of those five wrong. A couple are making three of them wrong. All of them are at least making the decisions visible, which is more than the model API does.
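Two of those five decisions, how much to checkpoint and how to recover when a tool call fails halfway through, can be sketched together, along with a crude audit trail. This is a hypothetical illustration, not any existing runtime's design: the workflow is a list of named steps, a plain dict stands in for durable storage, a failed step is retried a bounded number of times, and every attempt is appended to a log. Because completed steps are skipped on resume, a run that dies midway restarts from the last checkpoint rather than from zero.

```python
import json
from typing import Callable, Dict, List, Tuple

# (step name, step function); each function reads prior results and returns a string.
Step = Tuple[str, Callable[[Dict[str, str]], str]]


def run_workflow(
    steps: List[Step],
    checkpoint: Dict[str, str],  # stand-in for durable storage (hypothetical)
    audit_log: List[str],        # append-only record of every attempt
    max_attempts: int = 3,
) -> Dict[str, str]:
    for name, fn in steps:
        if name in checkpoint:
            # Completed in an earlier run: skip rather than redo the work.
            audit_log.append(json.dumps({"step": name, "event": "skipped"}))
            continue
        for attempt in range(1, max_attempts + 1):
            try:
                result = fn(checkpoint)
            except Exception as exc:
                audit_log.append(json.dumps(
                    {"step": name, "event": "failed", "attempt": attempt, "error": str(exc)}
                ))
                if attempt == max_attempts:
                    raise  # give up; earlier checkpoints survive for a later resume
            else:
                checkpoint[name] = result  # checkpoint only after success
                audit_log.append(json.dumps({"step": name, "event": "done", "attempt": attempt}))
                break
    return checkpoint


# Usage: a tool step that times out once, then succeeds on retry.
calls = {"n": 0}


def flaky_tool(state: Dict[str, str]) -> str:
    calls["n"] += 1
    if calls["n"] == 1:
        raise TimeoutError("tool call timed out")
    return "tool-ok"


steps: List[Step] = [("plan", lambda s: "plan-ok"), ("tool", flaky_tool)]
store: Dict[str, str] = {}
log: List[str] = []
run_workflow(steps, store, log)
```

Retrying is only safe for idempotent tools. A refund that went through on the vendor side but timed out on the way back would be issued twice; a production design would need something like an idempotency key passed to the tool, which this sketch omits.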

So I started picking the runtime that made the decisions least wrong. That is the move I want every operator reading this to make.

Calling this layer the operating system clarifies what the next platform-tier company looks like. It does not look like the company with the largest model. It looks like the company with the strongest abstractions over the model layer, plus the strongest persistence story, plus the cleanest tool-use surface, plus the most legible permission model. None of those are model-quality problems. All of them are infrastructure problems, in a register the model-as-platform frame consistently underweights.

Candidates, as of late 2024, are recognizable to anyone watching the agent layer. Foundation labs are now shipping, or preparing to ship, their own runtime alongside the model. OpenAI's Operator, reported as in development. Anthropic's Computer Use API. Google's agentic Gemini features. Outside the labs: LangChain, which started as a wrapper and is increasingly an OS-shaped abstraction. CrewAI. AutoGen on the multi-agent side. Plus a long tail of platform-specific runtimes built into vertical-AI products. Each is making a bet about where the kernel boundary should be: how much orchestration logic lives in the runtime, versus inside the model, versus inside the operator's own application code. Every one of them is moving the line.

The interesting differentiation is not which one ships the most features. _It is which one settles the kernel-boundary question in a way operators can build against confidently for five years._ Most current candidates are still moving the boundary every quarter. That is the early-OS phase. Every release shifts what is supposed to live in the runtime versus what is supposed to live in the application. It looks chaotic. It looked exactly the same in 1993. Microsoft kept moving the line between Win32 and the application until DOS was dead, and the developers who built against the moving line in 1993 were the ones running platform-tier businesses by 2000. _That is where the agent layer is right now, and it is the most interesting place in the stack to be building._

Persistence deserves its own paragraph because it is the part of the runtime that is most under-built and most consequential. An agent doing real operator-tier work — a customer-service workflow, a code-review loop, a documentation pipeline — needs to maintain state across model calls, across model versions, across days of operation, and across the routine event of the underlying model getting deprecated and replaced. None of the current model APIs offer this natively. It has to live at the runtime layer. How the runtime chooses to implement persistence is the most consequential design decision in the agent stack right now. Get it right: you ship a five-year-stable abstraction. Get it wrong: your operators rewrite their agent code every model deprecation cycle.
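One way to keep persisted state from breaking on a deprecation cycle is to store it against logical roles rather than concrete model identifiers, and to resolve a role to a model only at call time. The sketch below assumes that design; the role names, the model IDs (`vendor-model-v1`, `vendor-model-v2`) and the deprecation table are invented for illustration. The saved JSON never mentions a model ID, so retiring an engine is a table change rather than a migration of every stored workflow.

```python
import json
from dataclasses import asdict, dataclass, field
from typing import Dict, List

# Hypothetical mapping from logical role to the model currently serving it.
ROLE_TO_MODEL: Dict[str, str] = {"drafter": "vendor-model-v1", "reviewer": "vendor-model-v1"}

# Hypothetical deprecation table: retired model -> replacement.
DEPRECATED: Dict[str, str] = {}


def resolve(role: str) -> str:
    """Resolve a logical role to a live model, following any deprecation chain."""
    model = ROLE_TO_MODEL[role]
    seen = set()
    while model in DEPRECATED:
        if model in seen:
            raise ValueError(f"deprecation cycle at {model!r}")
        seen.add(model)
        model = DEPRECATED[model]
    return model


@dataclass
class WorkflowState:
    workflow_id: str
    next_role: str  # a role, never a model ID
    transcript: List[Dict[str, str]] = field(default_factory=list)

    def save(self) -> str:
        return json.dumps(asdict(self))

    @staticmethod
    def load(raw: str) -> "WorkflowState":
        return WorkflowState(**json.loads(raw))


# Usage: state saved before a deprecation loads and routes cleanly after it.
saved = WorkflowState("wf-1", "reviewer", [{"role": "drafter", "text": "draft v1"}]).save()
DEPRECATED["vendor-model-v1"] = "vendor-model-v2"  # the engine gets retired
state = WorkflowState.load(saved)
next_model = resolve(state.next_role)
```

The trade-off is that the meaning of each role now lives in the runtime's tables, so whoever owns those tables owns the upgrade path.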

Let me state the operator stakes bluntly. If you build against a runtime that fumbles persistence, you are signing up to rewrite your production agents twice a year. That is the risk profile most operators are accepting right now, because they are picking the runtime by feature checklist instead of by persistence architecture, and they will find out which runtimes fumbled when the first round of major model deprecations hits. I would rather lose a quarter learning the right runtime than lose a year recovering from the wrong one. So I am losing the quarter.

Platform rents accrue to the layer the operator's source code points at. An operator running production workflows on a runtime is not casually swapping runtimes; the cost of porting is the entire reason the runtime is acting as an OS. The model, at that layer, is an interchangeable engine. The runtime is what holds the operator in place.

Two confusions need naming because both happen in the same conversation.

The first is the one this piece is about: confusing the largest visible artifact with the platform-rent layer. The model is the largest visible artifact. The platform-rent layer is one floor up.

The second is confusing the application layer with the platform. The application is where the user-visible product runs. Chatbot, IDE assistant, customer-service portal. Platform rents do not accrue here either. The application is a customer of both the model and the runtime, paying rent to whichever has the strongest abstractions. As of late 2024, that is the runtime.

Five years out, the company that dominates the AI stack will look like an OS company, not a model company. Microsoft against Intel. Apple against the chip manufacturers. Android against the device OEMs. Excellent margins continue to accrue to the engine. Platform rents do not. They go to the layer that decides which engine fires when, and the layer that holds the operator's state, and the layer the operator's source code names as the dependency.

Operators choosing what to build against in 2024 should be choosing the runtime. Which runtime to pick is the most interesting infrastructure decision in the stack right now. Not every candidate is going to win, and the kernel boundary will keep moving for a few more years before it settles. None of that is a reason to keep building against the model API. _The model API is assembly. The runtime is the language._

The OS sat one floor up from the engine. The agent runtime sits one floor up from the model. _Same floor._ The next platform-tier company is going to be standing on it. The floor is being poured right now, in late 2024, by a small number of teams that mostly do not yet know they are pouring concrete for the next platform decade. They will know in eighteen months. By then it will be too late to start. I am building against it now. I am picking which floor I want to stand on for the next five years. The wrong floor in late 2024 is a five-year tax. Pick a runtime. Pick it carefully. Then build.

—TJ