The imaging RCTs are unimplemented. The LLMs without evidence are everywhere.

TL;DR

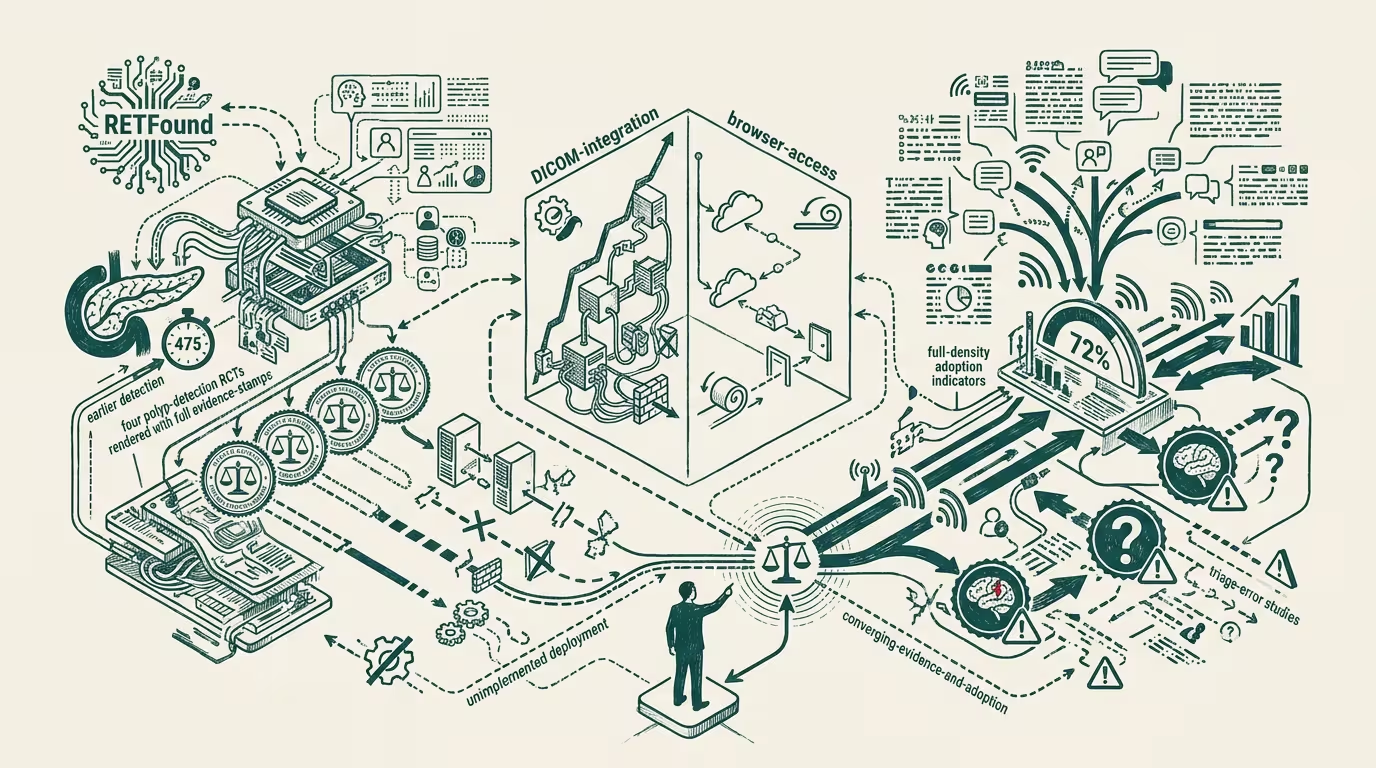

The healthcare-AI implementation paradox: imaging AI with strong RCT evidence (RETFound, pancreatic-cancer detection 475 days earlier than radiologists, four polyp-detection RCTs) sits unimplemented while LLM-based clinical decision support — with thin real-world evidence and documented triage-error studies — gets adopted by 72% of physicians. Operator read: deployment in healthcare diverges from what evidence supports; the operator playing the prioritization game right invests where evidence and adoption are converging, not where adoption is running ahead of evidence.

The healthcare-AI implementation paradox in 2026 is sharper than the trade press generally writes it. Imaging AI with strong randomized-controlled-trial evidence — RETFound for retinal disease detection, the pancreatic-cancer-detection algorithms showing 475-day-earlier detection vs. radiologist baseline, the four polyp-detection RCTs in colonoscopy that demonstrated mortality-class outcome improvements — sits largely unimplemented in clinical practice. LLM-based clinical decision support, with thin real-world evidence and documented triage-error studies, has been adopted by 72% of physicians per the 2025 AMA tracking survey.

_Deployment in healthcare diverges from what evidence supports._ Why, and what to do about it?

The argument that holds is that adoption velocity in healthcare is determined by deployment friction rather than by evidence strength. LLM-based clinical decision support is operationally low-friction: it is accessible through a web browser, requires no specialized hardware, integrates loosely with existing workflows, and has free or low-cost tiers that don't require formal procurement. Imaging AI is operationally high-friction: it requires DICOM-class integration with existing imaging infrastructure, regulatory-compliance review, clinical-workflow modification, and capital procurement at hospital scale. The friction asymmetry produces the adoption asymmetry, independent of the evidence asymmetry.

Where should operators invest? In the space where evidence and adoption are converging, not the space where adoption is running ahead of evidence. The categories converging in 2026 are ambient scribes (strong evidence for documentation-time savings, with adoption tracking that evidence at major health systems), radiology-workflow AI (roughly a decade of regulatory clearance, mature adoption among the integrated radiology systems), and diagnostic decision support in narrowly validated domains (retinal screening, certain dermatology applications). The categories where adoption is running ahead of evidence (general-purpose LLM-CDS, AI medical scribing in unsupervised modes, AI symptom checkers) are risky for operators because the evidence-catch-up event will reprice the deployment. Operators investing in the converging categories get the safer return profile.

Where's the largest available value-creation opportunity? In the imaging-AI implementation gap. The imaging-AI categories with RCT-grade evidence are not implemented at scale because deployment friction blocks them. That friction is addressable: it is a workflow-redesign and procurement problem rather than an evidence problem. Operators who close the deployment gap (through specialized integration platforms, white-label DICOM-pipeline tooling, or procurement-acceleration vehicles) capture the rents that the evidence-without-implementation gap creates. The opportunity is structurally larger than the opportunity in any LLM category because the evidence is stronger and the implementation gap is wider.
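The sorting in the last two paragraphs can be sketched as a two-axis triage. This is a minimal illustration, not a scoring model: the `Level`, `Category`, `classify`, and `group` names are hypothetical, the high/low labels simply restate how this piece characterizes each category, and the "early" bucket (low evidence, low adoption) is an assumption added only to make the grid complete.

```python
from dataclasses import dataclass
from enum import Enum


class Level(Enum):
    LOW = 0
    HIGH = 1


@dataclass(frozen=True)
class Category:
    name: str
    evidence: Level  # evidence strength, as characterized in the text
    adoption: Level  # clinical adoption, as characterized in the text


def classify(cat: Category) -> str:
    """Place a category in one of four evidence/adoption buckets."""
    if cat.evidence is Level.HIGH and cat.adoption is Level.HIGH:
        return "converging"
    if cat.evidence is Level.HIGH and cat.adoption is Level.LOW:
        return "implementation gap"
    if cat.evidence is Level.LOW and cat.adoption is Level.HIGH:
        return "adoption ahead of evidence"
    return "early"  # assumption: not a bucket the text discusses


def group(categories: list[Category]) -> dict[str, list[str]]:
    """Group category names by bucket, preserving input order."""
    buckets: dict[str, list[str]] = {}
    for cat in categories:
        buckets.setdefault(classify(cat), []).append(cat.name)
    return buckets


# Qualitative labels only; these restate the essay's claims, not measured data.
CATEGORIES = [
    Category("ambient scribes", Level.HIGH, Level.HIGH),
    Category("radiology-workflow AI", Level.HIGH, Level.HIGH),
    Category("RCT-backed imaging AI", Level.HIGH, Level.LOW),
    Category("general-purpose LLM-CDS", Level.LOW, Level.HIGH),
    Category("AI symptom checkers", Level.LOW, Level.HIGH),
]

if __name__ == "__main__":
    for bucket, names in group(CATEGORIES).items():
        print(f"{bucket}: {', '.join(names)}")
```

The point of writing it down this way is that adoption alone never determines the bucket: a high-adoption category lands in "converging" or "adoption ahead of evidence" depending entirely on the evidence axis.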

What's coming on the LLM-CDS side? An evidence-catch-up event within 18-36 months. When the documented triage-error studies mature into systematic-review evidence, regulators will engage with LLM-CDS deployment more aggressively, and procurement teams will calibrate their purchasing decisions to the new evidence base. The adoption that ran ahead of evidence will partially retreat or get reframed as advisory rather than decision-supporting. Operators with LLM-CDS deployments need to build the catch-up event into their 2026-2028 procurement and deployment plans. The plan that doesn't engage with it is the plan that gets surprised by the regulatory and procurement repricing.

The same shape recurs across AI in regulated categories generally. Finance AI has its own version (LLM adoption running ahead of regulator-validated evidence in fraud-detection and compliance categories). Education AI has its own (LLM-tutoring adoption running ahead of learning-outcome evidence). Each category poses the same operator question and gets the same answer: invest in the converging space rather than the adoption-ahead-of-evidence space.

The healthcare-AI implementation paradox is structurally durable through at least 2027-2028. The friction asymmetry that drives the paradox is not addressable by AI-capability improvements; it requires operator-level work on deployment-pathway design. Operators who do that work capture the evidence-implementation-gap rents. Operators who don't are left watching the LLM-CDS category absorb capital that, judged on the evidence, is mispriced.

Three things survive all of this. First, the imaging-RCTs-unimplemented-while-LLMs-are-everywhere observation is one of the cleaner 2025-2026 articulations of the healthcare-AI implementation paradox. Second, the operator strategy is to invest in the converging-evidence-and-adoption space and to plan for the LLM-CDS evidence-catch-up event. Third, the imaging-AI deployment gap is the largest available value-creation opportunity in the category. Operators who recognize the asymmetry and allocate against it explicitly are positioned for the evidence-and-adoption convergence cycle through 2027-2030. Operators who treat adoption rates as the primary capital-allocation signal are operating against the evidence and will absorb the catch-up cost when it lands.

The imaging RCTs are unimplemented. The LLMs without evidence are everywhere. The operator playbook is to close the imaging gap and to plan for the LLM evidence catch-up. Most operators are running neither of those plays. The ones running them are positioned for the convergence cycle the rest of the category will eventually have to run anyway.

—TJ