Ambient scribes are the one healthcare-AI category where evidence and adoption moved together.

TL;DR

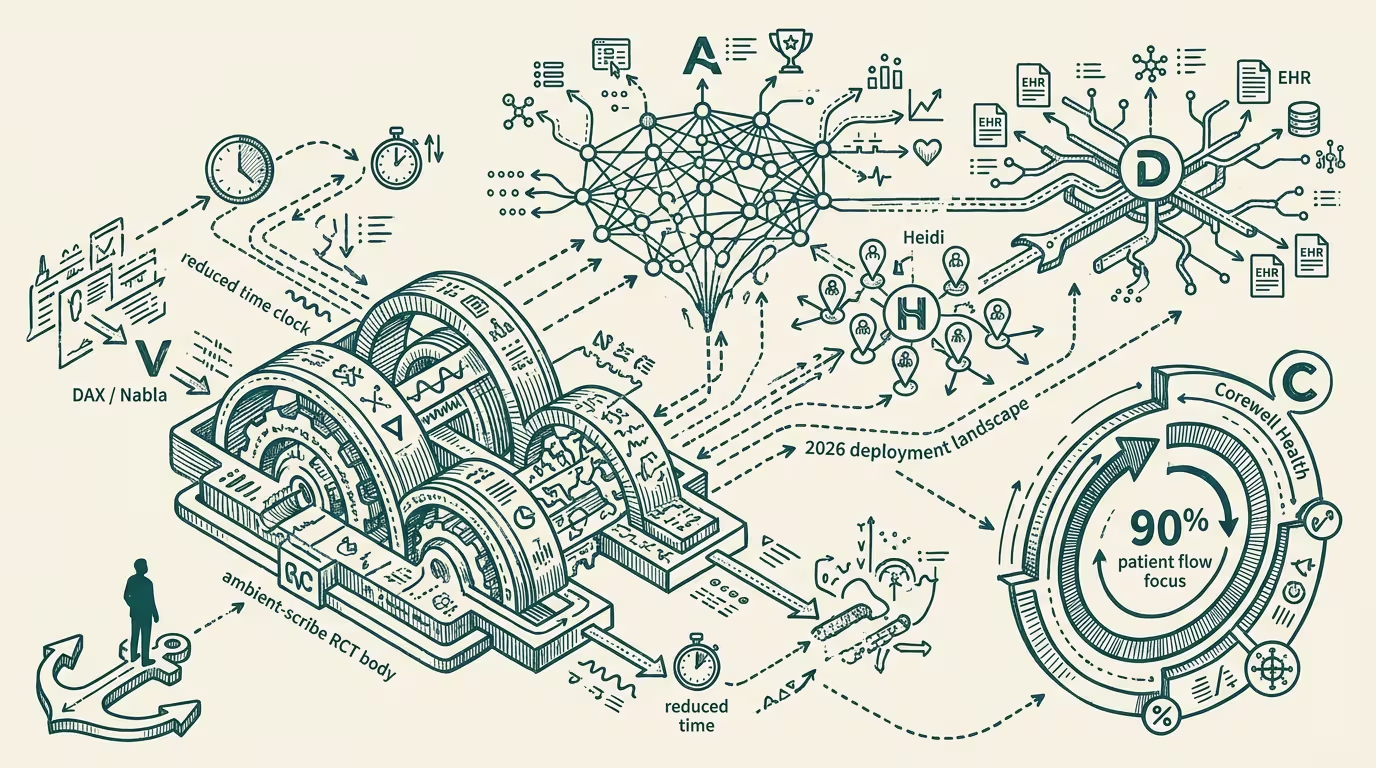

The ambient-scribe RCT body (multi-system trials with parallel arms comparing Microsoft DAX Copilot and Nabla against usual care) showed reduced documentation time and burnout. By 2026: Abridge has won 150+ health systems and the 2025 Best in KLAS award; Heidi dominates solo practice; DAX retains broader EHR compatibility. Corewell Health: 90% of clinicians report improved patient focus. Operator read: this is the one healthcare-AI category where evidence and adoption moved together, the inverse of the Topol paradox. The category every operator should know cold; the one that teaches what the others are missing.

The ambient-scribe category is the one healthcare-AI category in 2024-2026 where evidence and adoption moved together. The randomized-controlled-trial body (multi-system trials with parallel arms comparing Microsoft DAX Copilot and Nabla against usual-care baselines) showed reduced documentation time, reduced clinician burnout, and improved patient-encounter focus. By 2026 the adoption metrics matched the evidence metrics. Abridge had won 150+ health systems and the 2025 Best in KLAS award. Heidi dominated solo-practice and small-group adoption. Microsoft DAX Copilot retained broader EHR compatibility. Corewell Health, one of the larger Abridge deployments, reported that 90% of clinicians experienced improved patient focus.

This is the operator-class case study every healthcare-AI operator should know cold. It is the category that teaches what the others are missing.

The argument rests on the convergence itself. The ambient-scribe category did not produce the implementation paradox that runs through other healthcare-AI categories: imaging RCTs sit unimplemented while LLM-based clinical decision support (LLM-CDS) gets adopted without evidence; RCT-grade cardiology screening tools sit under-deployed while symptom-checker apps proliferate. Ambient scribes produced clean convergence instead: the evidence supported the adoption, the adoption matched the evidence, and procurement moved cleanly through to health-system deployment. The operator-grade question is why this category and not the others.

Why did the value proposition land cleanly with the deployment cohort? Because the value accrues to the clinician directly, in the form of less after-hours charting and lower documentation-class burnout. _The clinician is both the effective buyer in the procurement decision and the beneficiary of the deployment._ That alignment is operationally clean. Other healthcare-AI categories produce benefits that accrue to the health system or the payer rather than to the clinician, which creates friction at the clinician-adoption layer. The ambient-scribe category does not have that friction.

Why did the evidence accumulate fast? Because the RCT methodology is straightforward (measure documentation time, measure burnout-class outcomes, measure patient-encounter quality) and the trials were operationally feasible inside multi-site health-system networks. The outcomes show up in routine clinical work, so the methodology fits the deployment context. Other healthcare-AI categories (imaging-AI for early-stage disease detection, prevention-class interventions) have evidence methodologies that are operationally harder to run, because their endpoints, such as stage shift or avoided events, take years to observe. That produces longer evidence cycles and the implementation-paradox dynamic.

Why did procurement move? Because health systems already procure clinical-documentation tooling on regular cycles. The procurement-class workflow for ambient-scribe deployment maps onto the existing EHR-related procurement cycle without requiring new procurement-class infrastructure. Other healthcare-AI categories require novel procurement workflows (capital procurement for imaging-AI, new payer-relationship layers for prevention-class interventions) that introduce friction the ambient-scribe category did not face.

What does the convergence prove? It proves that convergent evidence and adoption is possible in healthcare-AI when three conditions are met simultaneously: value-proposition alignment, an accessible evidence methodology, and a familiar procurement workflow. Operators in adjacent categories who claim "healthcare-AI is structurally slow" should be challenged with the ambient-scribe counter-example. The category demonstrates that when the conditions hold, healthcare-AI deployment moves at venture-class velocity. The lesson for adjacent categories is to engineer for those conditions, not to accept the structural-slowness framing as a given.

The category leaders' competitive dynamics are instructive in their own right. Abridge captured the large-health-system segment through aggressive sales-class execution and procurement-class velocity. Heidi captured the solo-practice and small-group segment through adoption-oriented pricing and operationally light deployment. Microsoft DAX Copilot held the broad-EHR-compatibility segment through Microsoft's existing healthcare-vendor relationships and the M365-integration playbook. Each leader executed against a specific segment with specific operator capabilities. Operators in adjacent healthcare-AI categories should model that segment-specific strategy explicitly rather than default to a "we are the [Abridge/Heidi/DAX] of [adjacent category]" framing.

The convergence is, in operating practice, the exception rather than the rule across healthcare-AI categories. The operator-level question is whether the conditions can be engineered in adjacent categories or whether they are structurally unavailable. The answer varies category by category. The discipline is to assess each adjacent category against the three conditions and deploy capital where they are achievable.

What crosses pillars is the pattern itself: convergent evidence and adoption recurs in other regulated AI-deployment categories where the three conditions are met, such as legal-AI document review (attorney-buyer alignment, accessible evidence methodology, familiar procurement) and finance-AI portfolio summarization (advisor-buyer alignment, accessible methodology, familiar procurement). Each has its own ambient-scribe equivalent: a specific sub-category where convergence happens cleanly and adoption matches evidence.

The read that survives: the ambient-scribe category is the canonical 2024-2026 healthcare-AI convergence case, the structural conditions that produced the convergence are partially replicable in adjacent categories, and the operator lesson is to engineer for those conditions rather than accept the slowness-paradox framing as universal. Operators who study the case carefully and translate the conditions to adjacent categories are positioned to produce the next convergence-class deployment. Operators who treat the case as exceptional rather than instructive will keep absorbing the slowness-paradox cost in their categories.

Ambient scribes are the one healthcare-AI category where evidence and adoption moved together. The category teaches what the others are missing. The operators who study it cold are the ones who build the next convergence event. The operators who treat it as exceptional are the ones who keep building against the implementation paradox.

—TJ