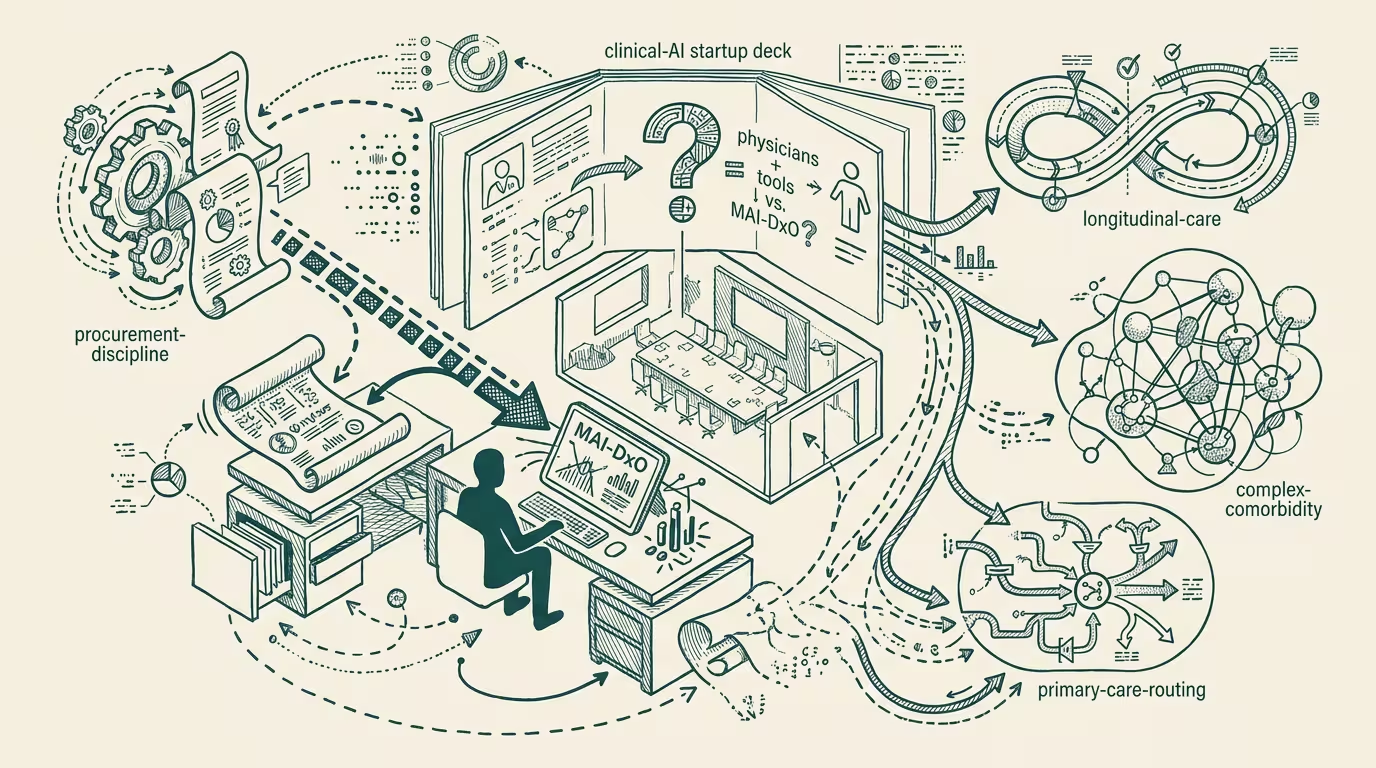

MAI-DxO is the procurement question now. Where do physicians + tools beat it?

TL;DR

Microsoft AI's MAI-DxO research (2025-07-02) reset trade-press default framing from 'AI assists doctors' to 'AI outperforms doctors.' Methodology critiques didn't travel. Operator read: clinical-AI procurement now reads against the headline, not the methodology; the deck that doesn't position 'where physicians + tools beat MAI-DxO' is already losing to the deck that does.

A clinical-AI startup walks into a procurement meeting at a 200-bed regional health system in Q4 2025. The CIO has a browser tab open; the MAI-DxO headline number from Microsoft AI's July 2025 preprint is on the chief medical officer's desk; the methodology critiques that didn't travel are nowhere in the room. The startup has roughly three minutes to position its product before the procurement audience defaults to the headline framing as its operating reference. Most decks lose those three minutes. This piece is about why, and what the deck that wins says instead.

The structural question for every clinical-AI operator in 2025-2026 is therefore the one every procurement deck has to answer: where do physicians plus tools beat MAI-DxO? The answer is not the methodology critique (the NEJM cases are unrepresentative of primary care, and the physicians in the comparison worked without access to reference materials). Procurement audiences do not engage with methodology arguments. They engage with configuration arguments, and the configuration argument is the one that carries the deck.

The argument that holds is that "physicians + tools" describes the actual deployment configuration in 2025-2026: a clinician using clinical-AI as a decision-support layer, with the clinician retaining decision authority and the AI providing diagnostic, monitoring, or treatment-suggestion support. _MAI-DxO measured AI in isolation against physicians in isolation._ Neither configuration matches the actual deployment. The procurement-relevant question is whether the physician-plus-tools configuration produces better outcomes than either alone, and where in the clinical workflow the configuration's edge is largest.

The 2025-2026 evidence for physicians-plus-tools beating MAI-DxO converges on three category families.

The first is complex multi-system clinical decisions. A patient with multiple comorbidities, drug-drug interactions, and uncertain diagnostic boundaries presents a decision space that single-pass AI handles worse than a physician who integrates clinical context (history, stated preferences, social-determinant factors) while a tool surfaces differential-diagnosis possibilities and the evidence base. Internal medicine, hospitalist medicine, and oncology procurement decks should position around this.

The second is longitudinal patient management, where care extends across months or years and the longitudinal context is operationally hard to encode in single-encounter tests. The physician carries patient context across encounters and the tool surfaces trend signals and deviation alerts. Primary-care AI, chronic-disease management AI, and post-acute-care AI are the natural procurement targets.

The third is high-stakes decisions with a heavy clinical-judgment load: end-of-life care, complex surgical planning, treatment-resistant mental-health categories, and palliative-care decision points. Here patient-specific judgment dominates the decision, and physicians-plus-tools configurations capture AI's evidence-surfacing benefit while preserving the clinician's capacity for judgment.

The procurement-deck discipline that follows is to name the comparison condition explicitly. The deck saying "our system outperforms human physicians" loses to MAI-DxO on the headline framing because MAI-DxO's headline is bigger. The deck saying "our system, deployed as physician-plus-tools, outperforms physician-alone in [named category]" wins because the comparison is operationally meaningful and the configuration is what hospitals actually deploy. Operators who argue the MAI-DxO methodology in those same conversations undercut their own pitch: the methodology argument doesn't travel and the audience doesn't engage with it, while the configuration argument speaks directly to the procurement decision.

That discipline is durable across benchmark cycles. MAI-DxO is the 2025-2026 reference; the 2026-2027 reference will be a different benchmark with the same headline-vs-methodology asymmetry. Operators build the configuration-argument discipline once and rerun it against each new benchmark. The headline-vs-configuration asymmetry recurs across AI in regulated categories generally: finance-AI and legal-AI have their own MAI-DxO-class events on parallel timelines, and the operators who built the configuration-argument discipline in clinical-AI in 2026 will run the same play in those adjacent categories when the benchmark cycle hits them.

The read that survives is that MAI-DxO is a clean reference point for a structural shift in clinical-AI procurement, the methodology critique is correct but irrelevant to procurement, and the operator-tier discipline is to position the deck around the physician-plus-tools configuration in the three categories where the configuration's edge is largest. By 2027 procurement teams will have absorbed the configuration-argument discipline as standard. In 2026 it is a competitive advantage for operators implementing it now.

MAI-DxO is the procurement question. The answer is configuration-naming in the three categories where physicians plus tools beat the headline. Operators with the answer win the procurement conversation. Operators arguing the methodology lose it.

—TJ