MAI-DxO reset the room. Position your deck against it.

TL;DR

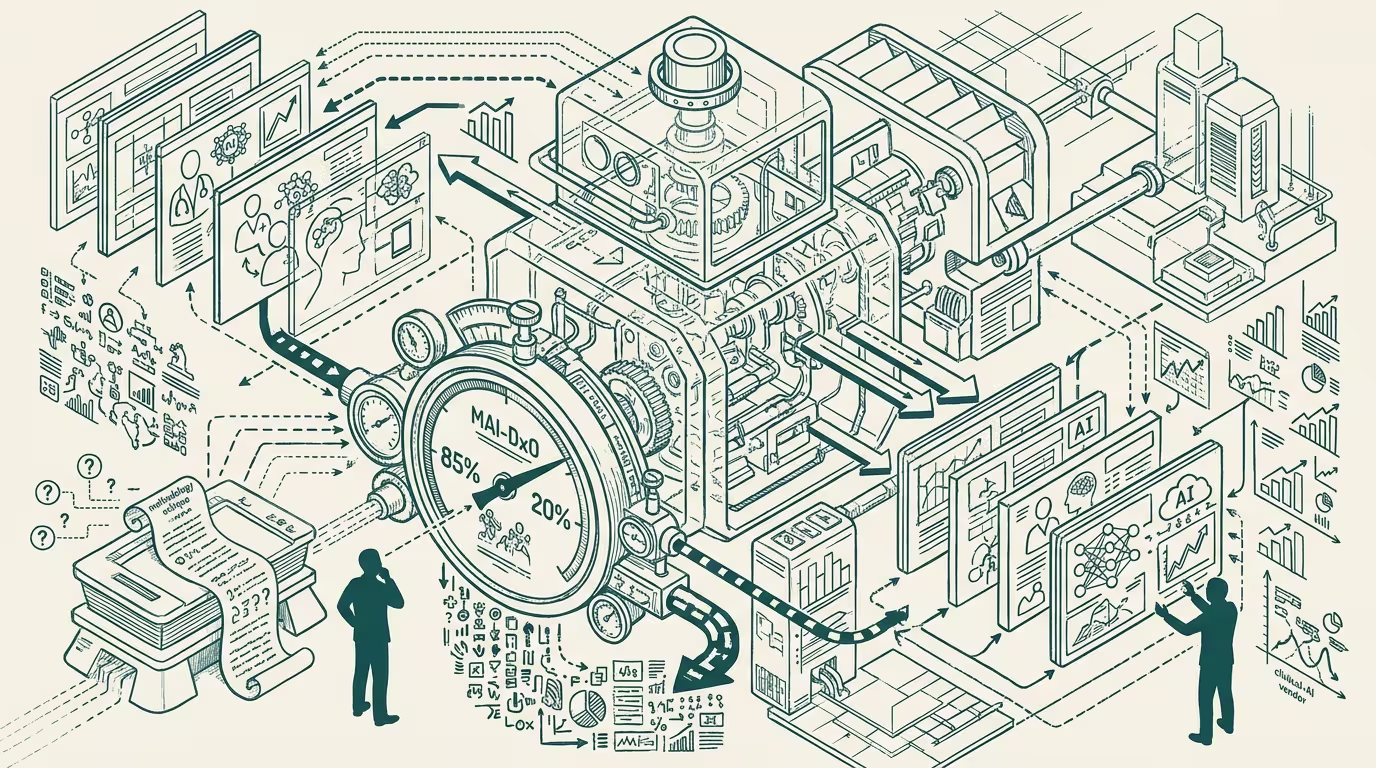

Microsoft AI's MAI-DxO research preprint (2025-07-02) reported 85% diagnostic accuracy vs. 20% for physicians on 304 NEJM cases — and the methodology critiques (no human reference access, NEJM cases unrepresentative of primary care) didn't travel. Operator read: 'AI assists doctors' is now the second-most-credible framing in the room; the first is 'AI outperforms doctors.' Position your deck against the headline, not the methodology.

Microsoft AI published the MAI-DxO research preprint on July 2, 2025. The reported result was 85% diagnostic accuracy on 304 New England Journal of Medicine cases, against 20% for physicians given the same cases. The trade press wrote it up as "AI outperforms doctors 4x." The methodology critiques — physicians had no access to references, NEJM cases are selected for difficulty and not representative of primary care, the test bed favored systematic-reasoning tasks over the messy presentation patterns of primary care — did not travel.

Two readings, two outcomes. The methodology-correct reading says the result is an artifact of the test conditions. The procurement-relevant reading says it doesn't matter: the headline travels and the methodology critique does not. Both readings are correct, and only one of them wins the room.

The critiques do not travel. The headline travels.

That is the structural shift. Through 2024, "AI assists doctors" was the most-credible framing in healthcare-AI procurement conversations. The deck that opened with "AI assists doctors, here's how" was the deck that landed. As of Q3 2025, _the most-credible framing is "AI outperforms doctors, here is how the operator deploys it"_, with "AI assists doctors" demoted to the second-most-credible framing. The MAI-DxO preprint is the inflection event.

What carries the weight of the shift is the audience composition. Procurement-class buyers are not methodologists. They read the headline. They engage with the implications. They do not, in operating practice, read the supplementary methodology section. The deck that positions against the methodology critique loses the room because the procurement audience does not have the methodology critique loaded as context.

Two decks, two outcomes. The clinical-AI startup whose deck opens with "the MAI-DxO methodology has problems" is the startup that loses the procurement conversation in the first three minutes. The startup whose deck opens with "MAI-DxO is the calibration point and here is how our product extends or differs from it" is the startup that captures the conversation. The methodology critique is the academic-class engagement; the operator-class engagement is the headline-positioning. Both are correct epistemically. Only the second one wins procurement.

What about the second-most-credible framing? It works as fallback, not lead. "AI assists doctors" still works in deployment contexts where the AI is provably bounded (specific clinical-decision-support scenarios, narrow specialty applications, regulatory-validated diagnostic categories). The framing fails in the procurement room because the procurement audience now has the MAI-DxO headline as the reference point. The fallback role is operating-coherent: the deck that opens with the outperform framing and falls back to the assist framing in deployment specifics is the deck that survives the procurement conversation and the deployment conversation. The deck that opens with the assist framing and never gets to the outperform framing is the deck that loses the procurement conversation entirely.

What about the methodology critique itself? It's operating-relevant in deployment, not in procurement. Once the procurement conversation is won, the deployment conversation has to engage with the methodology realities. NEJM cases are not primary-care-representative. The clinical-decision-support deployment will encounter messy primary-care presentations that the MAI-DxO test bed did not cover. The deployment-class read of the methodology critique is operating-relevant. The procurement-class read is not. Operators have to switch register between the two conversations. Most clinical-AI startups are running one register across both conversations and losing one of the two.

The same shape recurs across AI-in-regulated-category deployments. Finance-AI has its own MAI-DxO-class events (the headline performance number that travels and the methodology critiques that do not). Government-AI has its own (the federal-AI-deployment success metric that travels and the contextual constraints that do not). Each category has the same operator-tier discipline — position against the headline in procurement, against the methodology in deployment.

What survives all of this is that MAI-DxO is one of the cleaner inflection events in healthcare-AI procurement, the methodology critiques are operating-correct and procurement-irrelevant, and the operator discipline is to use the headline as the entry point for procurement conversations through at least 2026. By 2027 the next benchmark event will displace MAI-DxO as the reference point, and the same discipline will apply to whichever benchmark replaces it.

The trade press will, of course, continue to alternate between "AI outperforms doctors" coverage and "AI methodology is flawed" coverage. The part that holds is that both are partially correct and that the procurement-class audience has access to the first and not the second. Position the deck accordingly.

MAI-DxO reset the room. The room's reference point is now the outperform framing. The deck that does not position against it is the deck that loses to the deck that does. The methodology critique is, in procurement terms, not an asset. It is a footnote that the procurement audience will not read.

—TJ