The MCP gambit, two years in: Claude Cowork is the product built on the bet.

TL;DR

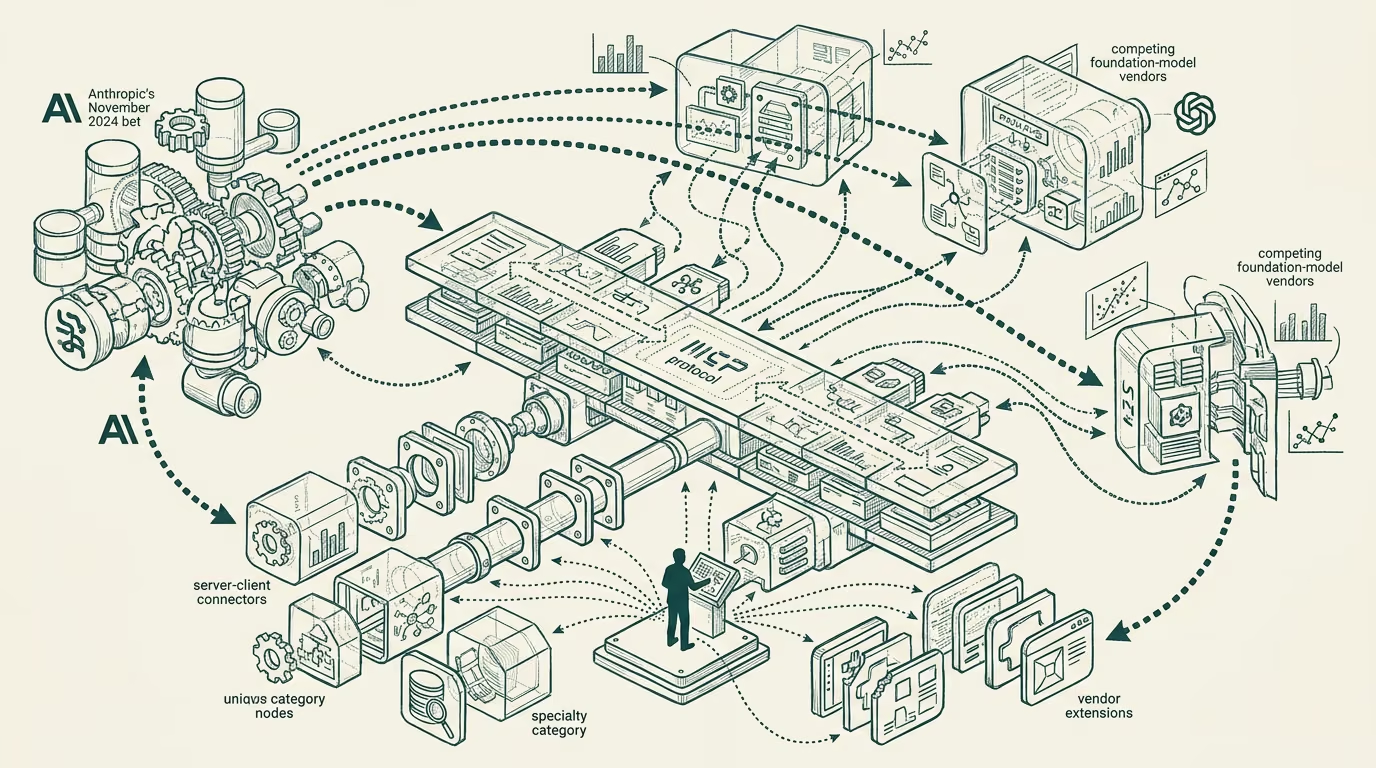

A two-year retrospective on Anthropic's late-2024 release of the Model Context Protocol (MCP) and what the bet has produced through 2025-2026. The protocol stuck. Competing standards from the other major foundation-model vendors absorbed substantial parts of MCP's design. The operator-grade MCP servers in production by Q3 2025 are concentrated in a small set of categories. This short essay walks through what the bet looked like, what stuck, what shifted, and how operators should read the next 12 months.

Anthropic's release of the Model Context Protocol in November 2024 was a deliberate platform bet: that the agentic-AI ecosystem needed an open protocol for connecting models to tools, data sources, and operational context, rather than a vendor-specific integration layer that each foundation-model provider would otherwise have built incompatibly. It was recognizable as a bet at the time because the protocol was open source from the start, the reference implementations were genuinely useful, and the documentation and developer-experience work was substantial enough to show that the company was committing real engineering investment to adoption.

Two years on, the protocol has stuck. The ecosystem of MCP servers and clients grew substantially through 2025, with a meaningful population of servers covering categories such as file-system access, code execution, database queries, and the broader integration work that agentic-AI deployments require. Operator developers have been adopting the protocol as their integration standard instead of the per-vendor proprietary integrations that would otherwise have emerged.
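To make the "server" side of that ecosystem concrete, here is a minimal sketch of the request shape such a server answers. It assumes only what the MCP specification states about tools: messages are JSON-RPC 2.0, `tools/list` advertises the available tools, and `tools/call` invokes one. The tool name `read_file_stub`, its handler, and the `handle_request` function are hypothetical, and the sketch leaves out the initialization handshake and the transport layer that a real server needs.

```python
import json

# Hypothetical tool registry: the name, schema, and handler are illustrative only.
TOOLS = {
    "read_file_stub": {
        "description": "Return canned text for a path (stand-in for a file-system tool).",
        "inputSchema": {
            "type": "object",
            "properties": {"path": {"type": "string"}},
            "required": ["path"],
        },
        "handler": lambda args: f"contents of {args['path']}",
    },
}


def _error(req_id, code, message):
    """Build a JSON-RPC 2.0 error response string."""
    return json.dumps(
        {"jsonrpc": "2.0", "id": req_id, "error": {"code": code, "message": message}}
    )


def handle_request(raw: str) -> str:
    """Dispatch one JSON-RPC 2.0 request string and return the response string."""
    req = json.loads(raw)
    method, req_id = req.get("method"), req.get("id")

    if method == "tools/list":
        # Advertise each tool's name, description, and JSON Schema for its input.
        result = {
            "tools": [
                {
                    "name": name,
                    "description": tool["description"],
                    "inputSchema": tool["inputSchema"],
                }
                for name, tool in TOOLS.items()
            ]
        }
    elif method == "tools/call":
        params = req.get("params", {})
        tool = TOOLS.get(params.get("name"))
        if tool is None:
            return _error(req_id, -32602, "Unknown tool")
        text = tool["handler"](params.get("arguments", {}))
        # Tool output is returned as a list of typed content parts.
        result = {"content": [{"type": "text", "text": text}], "isError": False}
    else:
        return _error(req_id, -32601, "Method not found")

    return json.dumps({"jsonrpc": "2.0", "id": req_id, "result": result})
```

A database or version-control server would differ in its handlers and schemas, not in this outer request and response shape, which is the property that lets one client talk to many servers.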

The competing standards from the other major foundation-model vendors absorbed substantial parts of MCP's design. The protocol that emerged across the category is recognizably MCP-shaped: vendor-specific extensions adapt to the underlying MCP framing rather than starting from entirely different framings. The interoperability that Anthropic's bet was meant to produce has, in operational terms, mostly arrived.

The operator-grade MCP servers in production by Q3 2025 are concentrated in a small set of categories. The integration servers (database, file system, version control) are the most mature and widely deployed. The specialty-vertical servers (medical data, financial data, legal research) are present but variable in quality. The enterprise-integration servers (Salesforce, ServiceNow, SAP, and other enterprise data sources) are still maturing: the deployment work is substantial, and the population of operators using them has been growing through 2025-2026.

Claude Cowork, the product Anthropic has positioned to capture the value flowing through the MCP-shaped ecosystem, is the visible commercial expression of the protocol bet. It integrates MCP server and client capability with the broader Claude offerings, producing a deployment-ready environment that operator buyers can adopt without doing the integration and deployment work themselves. The product makes the protocol bet legible to buyers.

For founders and operators reading the MCP ecosystem in 2026, the practical advice is to build for the protocol shape that has emerged rather than for any single vendor's implementation. Protocol-level interoperability is the default operating condition; products that depend on per-vendor proprietary integration are working against the durable shape of the ecosystem. The MCP server category has substantial greenfield opportunity in the specialty-vertical and enterprise-integration tiers, where operator-grade implementations are still maturing.
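One way to read "build for the protocol shape" in code: keep client logic dependent only on the JSON-RPC messages, and treat the server behind them as swappable. The sketch below is an illustration under that assumption, not a reference client. `ToolClient`, `Transport`, and `fake_server` are hypothetical names, the in-memory server stands in for any compliant implementation, and the initialization handshake and real transports are left out.

```python
import json
from typing import Any, Callable, Optional

# Hypothetical abstraction: a transport is any callable that takes a JSON-RPC
# request string and returns the response string (a pipe, HTTP, an in-memory stub).
Transport = Callable[[str], str]


class ToolClient:
    """Client that depends only on the message shape, not on who serves it."""

    def __init__(self, send: Transport) -> None:
        self._send = send
        self._next_id = 0

    def _request(self, method: str, params: Optional[dict] = None) -> Any:
        self._next_id += 1
        payload = {"jsonrpc": "2.0", "id": self._next_id, "method": method}
        if params is not None:
            payload["params"] = params
        response = json.loads(self._send(json.dumps(payload)))
        if "error" in response:
            raise RuntimeError(response["error"]["message"])
        return response["result"]

    def list_tool_names(self) -> list:
        return [tool["name"] for tool in self._request("tools/list")["tools"]]

    def call_tool(self, name: str, arguments: dict) -> str:
        result = self._request("tools/call", {"name": name, "arguments": arguments})
        # Concatenate the text parts of the returned content list.
        return "".join(p["text"] for p in result["content"] if p["type"] == "text")


def fake_server(raw: str) -> str:
    """In-memory stand-in for a compliant server with one hypothetical 'echo' tool."""
    req = json.loads(raw)
    if req["method"] == "tools/list":
        result = {
            "tools": [
                {
                    "name": "echo",
                    "description": "Echo text back.",
                    "inputSchema": {"type": "object"},
                }
            ]
        }
    elif req["method"] == "tools/call":
        text = req["params"]["arguments"].get("text", "")
        result = {"content": [{"type": "text", "text": text}], "isError": False}
    else:
        return json.dumps(
            {
                "jsonrpc": "2.0",
                "id": req["id"],
                "error": {"code": -32601, "message": "Method not found"},
            }
        )
    return json.dumps({"jsonrpc": "2.0", "id": req["id"], "result": result})
```

Constructing `ToolClient(fake_server)` and calling `call_tool("echo", {"text": "hi"})` exercises the full round trip. Swapping in a different transport changes nothing in `ToolClient`, which is the practical meaning of planning against the protocol rather than a vendor.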

The bet worked. The protocol stuck. The competing vendors absorbed the design. The product expressions are emerging. Operators building in this ecosystem should plan around the durable protocol shape, not around the vendor-specific integration patterns the protocol has largely supplanted. The next 12-18 months will continue the maturation of the operator-grade MCP server ecosystem, with the meaningful build-out concentrated in the specialty-vertical and enterprise-integration tiers.

The MCP gambit is no longer a gambit. It is the protocol layer the agentic-AI category runs on. The Anthropic bet paid out, the trade press has not generally caught up to that read, and operators building against the protocol are positioned for the next cycle of deployments it enables.

—TJ