The agent-handoff failure mode Air Canada paid $650 to document publicly.

The 2024 Moffatt v. Air Canada decision at the British Columbia Civil Resolution Tribunal awarded the customer roughly $650 against the airline. The substantive issue was that Air Canada's customer-facing chatbot had told the customer he was eligible for a retroactive bereavement-fare refund, which conflicted with the airline's actual published bereavement-fare policy. Air Canada argued the chatbot's representations were not binding on the airline. The tribunal disagreed. The decision is small in dollar terms and large in its implications for operators, because it publicly documents a failure mode that runs through every multi-agent production deployment.

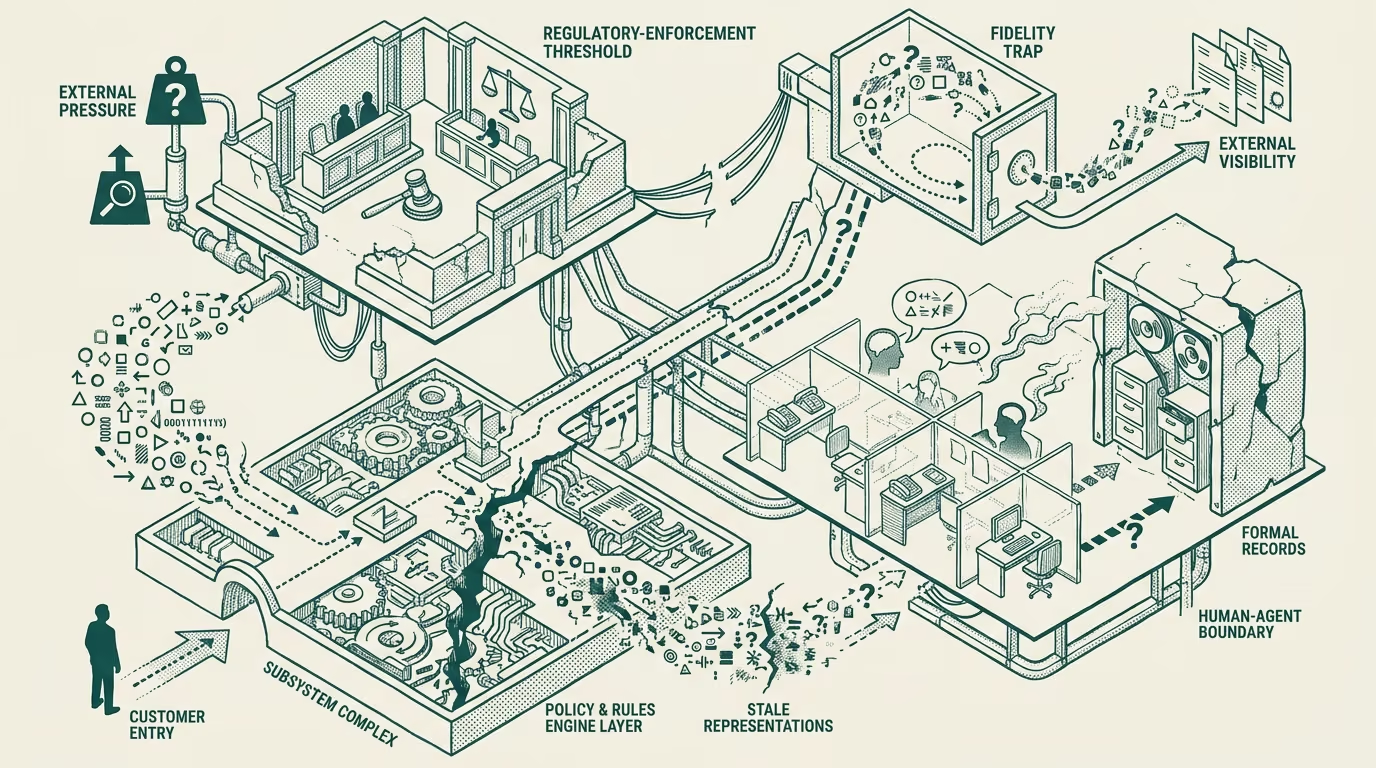

The failure mode is the agent-handoff seam. When agent-A handles part of a customer interaction and hands the task or the context to agent-B (or to a human), the context that flows across the handoff loses fidelity, and the result is silent corruption. The customer sees one set of representations from agent-A; the airline's policy-and-record system holds a different version; the corruption surfaces only when the customer or a regulator forces it to. The Air Canada case forced it to surface at a small dollar amount, and operators should read it as a much larger structural warning.

There are three places this failure mode bites in production multi-agent systems.

The first is at the policy-and-rules-engine seam. The customer-facing agent reads the policy from a representation layer that is not the canonical source of truth for policy. The representation layer can drift from the canonical source between updates, so the customer-facing agent produces answers that the canonical layer would not. The Air Canada case is exactly this seam: the chatbot's representation of the bereavement policy was not the canonical policy.
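One way to catch this kind of drift is to fingerprint each policy in the canonical store and compare it with the copy the customer-facing agent is served. The following is a minimal sketch, not a description of any real airline system: `PolicyDoc`, `fingerprint`, `find_drift`, and the sample policy wording are all hypothetical and invented for illustration.

```python
import hashlib
from dataclasses import dataclass


@dataclass(frozen=True)
class PolicyDoc:
    policy_id: str
    text: str


def fingerprint(doc: PolicyDoc) -> str:
    # Normalize case and whitespace so cosmetic edits do not register as drift.
    normalized = " ".join(doc.text.lower().split())
    return hashlib.sha256(normalized.encode("utf-8")).hexdigest()


def find_drift(canonical: dict[str, PolicyDoc], served: dict[str, PolicyDoc]) -> list[str]:
    """Return ids of policies whose served copy is missing or differs from canonical."""
    drifted = []
    for policy_id, source in canonical.items():
        copy = served.get(policy_id)
        if copy is None or fingerprint(copy) != fingerprint(source):
            drifted.append(policy_id)
    return sorted(drifted)


# Hypothetical policy wording, invented for this example.
canonical = {
    "bereavement": PolicyDoc("bereavement", "Bereavement fares must be requested before travel."),
    "baggage": PolicyDoc("baggage", "One checked bag is included."),
}
served = {
    "bereavement": PolicyDoc("bereavement", "Bereavement fares may be claimed within 90 days of travel."),
    "baggage": PolicyDoc("baggage", "One  checked bag is included."),  # extra space only
}

drifted = find_drift(canonical, served)
print(drifted)
```

A check like this only detects textual drift. It says nothing about whether the agent paraphrases a correct policy incorrectly, which would need a separate evaluation step.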

The second is at the multi-agent-coordination seam. When a request is handed from a customer-service agent to a fulfillment agent to a billing agent, the context that flows between them includes the original request, the constraints established by the prior agents, and the commitments that have been made along the way. The handoff infrastructure that carries this context is the seam where commitments can be lost, modified, or misinterpreted. The failure mode here is that commitments made early in the chain are not honored later, and the customer experiences the gap.
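A commitment can be made hard to lose by carrying it as structured data and refusing the handoff until the receiving agent acknowledges it. The sketch below assumes a hypothetical `HandoffContext` object passed along the chain; the class names, agent names, and the fee-waiver scenario are illustrative and not taken from any particular framework.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Commitment:
    commitment_id: int
    made_by: str
    statement: str


class DroppedCommitmentError(Exception):
    """Raised when a receiving agent has not acknowledged every commitment."""


@dataclass
class HandoffContext:
    request: str
    commitments: list[Commitment] = field(default_factory=list)

    def commit(self, agent: str, statement: str) -> Commitment:
        # This sketch only appends; it exposes no edit or delete operation.
        item = Commitment(len(self.commitments) + 1, agent, statement)
        self.commitments.append(item)
        return item

    def hand_off(self, receiver: str, acknowledged_ids: set[int]) -> None:
        # The receiver must explicitly acknowledge each commitment before taking over.
        missing = [c for c in self.commitments if c.commitment_id not in acknowledged_ids]
        if missing:
            ids = ", ".join(str(c.commitment_id) for c in missing)
            raise DroppedCommitmentError(f"{receiver} did not acknowledge commitment(s): {ids}")


ctx = HandoffContext(request="Change flight and waive the change fee")
waiver = ctx.commit("customer_service", "Change fee waived")

ctx.hand_off("fulfillment", {waiver.commitment_id})  # acknowledged, so this passes

try:
    ctx.hand_off("billing", set())  # billing never acknowledged the waiver
    handoff_error = None
except DroppedCommitmentError as exc:
    handoff_error = str(exc)

print(handoff_error)
```

The design choice is that a dropped commitment fails loudly at the seam instead of surfacing later as a customer complaint.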

The third is at the human-in-the-loop seam. When an AI agent escalates to a human (a human customer-service representative, a human supervisor, a human fulfillment specialist), the handoff includes the AI's interpretation of what has happened so far. The human reads the interpretation and acts on it. If the AI's interpretation is wrong, the human's action is wrong, and the resulting commitment to the customer is built on the wrong foundation. The customer sees answers that are consistent across the AI-to-human handoff and jointly wrong.
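One mitigation is to keep facts pulled from systems of record structurally separate from the model's own reading of the situation, and to label the latter when it is shown to the human. The following is a small sketch under that assumption; `Escalation`, `render_for_human`, and the sample case details are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Escalation:
    verified_facts: tuple[str, ...]  # pulled from systems of record
    ai_interpretation: str           # model-generated, not verified


def render_for_human(escalation: Escalation) -> str:
    # Facts and interpretation go under separate headings so the human
    # can tell which statements still need checking.
    lines = ["FROM SYSTEM OF RECORD:"]
    lines.extend(f"- {fact}" for fact in escalation.verified_facts)
    lines.append("AI INTERPRETATION (unverified, confirm before acting):")
    lines.append(f"- {escalation.ai_interpretation}")
    return "\n".join(lines)


# Hypothetical case details, invented for this example.
escalation = Escalation(
    verified_facts=("Ticket purchased at full fare", "No bereavement request on file"),
    ai_interpretation="Customer appears eligible for a retroactive bereavement refund",
)
briefing = render_for_human(escalation)
print(briefing)
```

Keeping the two fields as separate types, not one free-text summary, means the label cannot be dropped by accident when the briefing is reformatted downstream.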

The Air Canada case publicly documented the first seam. The other two seams produce comparable failures that are mostly being absorbed inside the deploying organizations rather than surfacing before regulators or tribunals. Those organizations are paying for the failures through customer-service recovery costs, reputational damage, and occasional escalations that end in settlements the public does not see.

What to do about it. Operators deploying multi-agent systems should treat the handoff seams as first-class engineering problems. The policy-and-rules seam needs canonical-source enforcement, with regular sync-validation against the representation layer the customer-facing agent uses. The multi-agent-coordination seam needs explicit commitment-tracking infrastructure that survives the handoff. The human-in-the-loop seam needs the AI's interpretation to be presented to the human as the AI's interpretation rather than as ground truth.

These are not exotic engineering requirements. They are the kind of work that mature multi-agent deployments do as a matter of routine. The deployments that have not done it are running the Air Canada failure mode invisibly, and the eventual cost is some combination of regulatory exposure, reputational damage, and customer liability that the deploying organization absorbs without naming. The $650 is the public version. The private version is larger, and the operator running multi-agent deployments without addressing the seams is paying it whether they recognize it or not.

—TJ